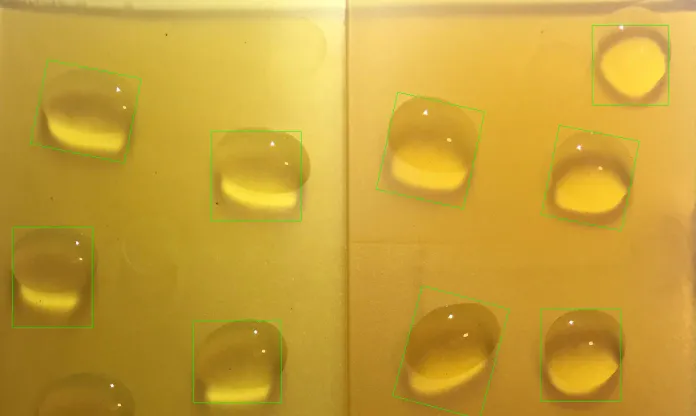

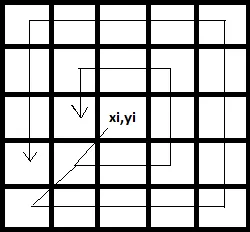

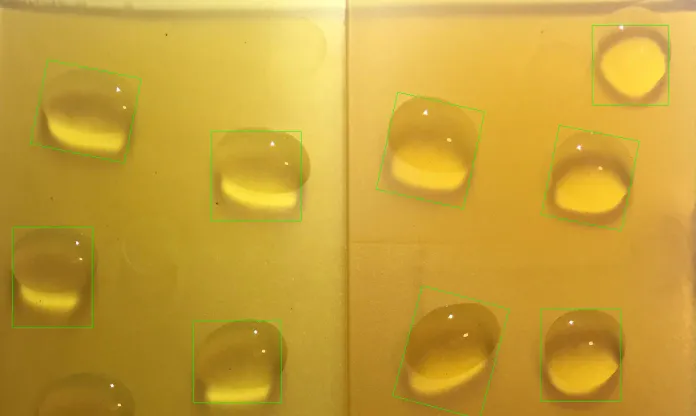

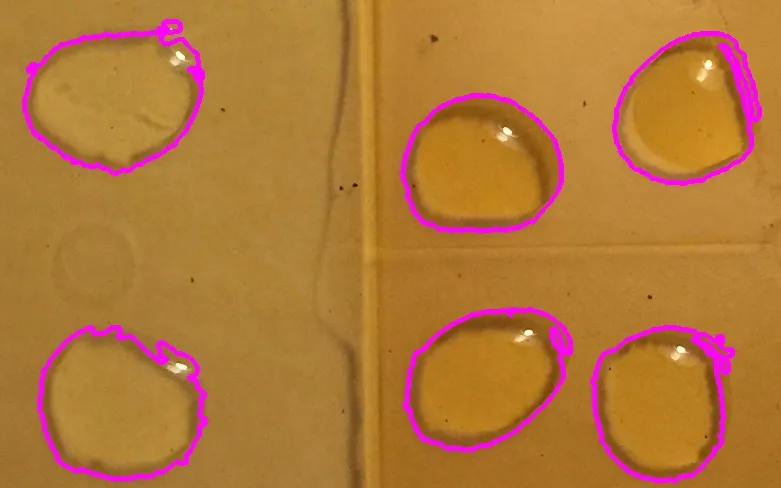

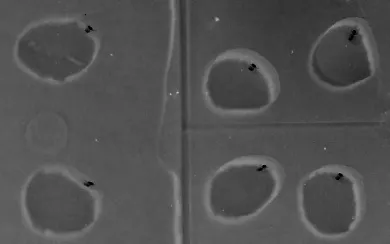

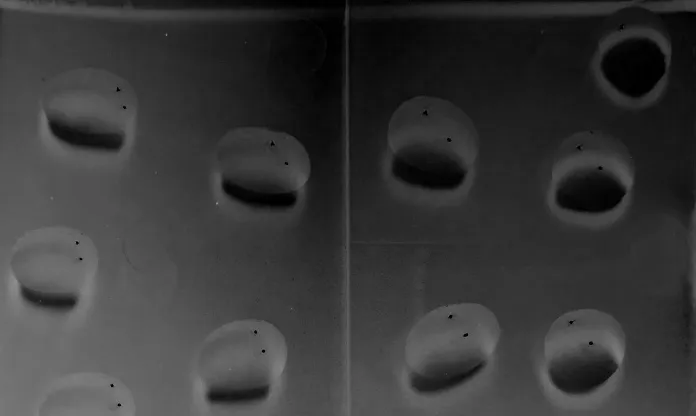

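Because the input images differ so much from one another, the algorithm should be able to adapt to the situation. Since Canny is based on detecting high frequencies, my algorithm treats the sharpness of the image as the parameter that drives the preprocessing adaptation. I didn't want to spend a week working out a function for all of the data, so I fitted a simple linear function to two images and then tested it on a third. Here are my results: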

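Keep in mind that this is only a basic approach, meant to prove a point. It needs experimentation, testing, and refinement. The idea is to apply a Sobel filter and sum over all the resulting pixels. Dividing that sum by the image size should give a basic estimate of the image's high-frequency response. Then, by experiment, I found clipLimit values for the CLAHE filter that worked on two test cases, and from them derived a linear function connecting the input's high-frequency response to the clipLimit, which produced good results.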

    sobel = get_sobel(img)
    clip_limit = (-2.556) * np.sum(sobel) / (img.shape[0] * img.shape[1]) + 26.557

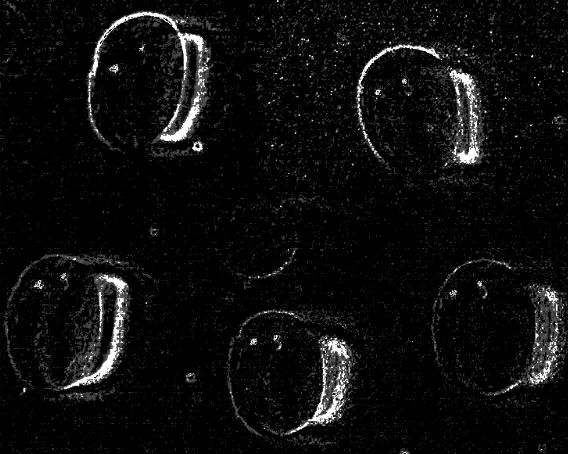
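
To make the "linear function from two test cases" step concrete, here is a minimal sketch of fitting a line through two calibration points and clamping the result. The calibration pairs below are hypothetical placeholders, not the measurements behind the coefficients above, and `fit_line`, `clip_limit_for`, and the clamp bounds `lo` and `hi` are illustrative names and values.

```python
def fit_line(p1, p2):
    """Return (slope, intercept) of the line through two (x, y) points."""
    (x1, y1), (x2, y2) = p1, p2
    if x1 == x2:
        raise ValueError("calibration points need different x values")
    slope = (y2 - y1) / (x2 - x1)
    intercept = y1 - slope * x1
    return slope, intercept


def clip_limit_for(sharpness, slope, intercept, lo=1.0, hi=8.0):
    """Evaluate the linear model and clamp the result to [lo, hi]."""
    return max(lo, min(hi, slope * sharpness + intercept))


# Hypothetical calibration pairs:
# (mean Sobel response per pixel, clipLimit that looked good by eye).
calibration = [(8.0, 6.0), (10.0, 1.0)]
slope, intercept = fit_line(*calibration)

print(slope, intercept)                        # -2.5 26.0
print(clip_limit_for(9.0, slope, intercept))   # 3.5
```

With more than two calibration images, a least-squares fit would probably be a better choice than a line through two points.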

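That's the adaptive part. Now for the contours. It took me a while to figure out a correct way to filter out the noise. I settled on a simple trick: finding contours twice. The first pass filters out unnecessary, noisy contours. Then I apply some morphological magic to end up with proper blobs for the objects being detected (more details in the code). The final step filters the bounding rectangles against the computed mean area, since the blobs are of fairly similar size across all samples.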
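
The first-pass noise filter can be isolated into a small predicate. This is a minimal sketch rather than the exact code from the script: `keep_contour` is a hypothetical helper name, the default thresholds are the ones used in the script in this answer, and the zero-height guard is an added assumption.

```python
def keep_contour(length, width, height,
                 min_length=20, max_length=500,
                 min_aspect=0.2, max_aspect=5.0):
    """Return True if a contour looks like part of an object, not noise.

    length is the contour's arc length; width and height are the sides of
    its axis-aligned bounding rectangle.
    """
    if height == 0:
        return False  # degenerate bounding box (added guard)
    if not (min_length <= length <= max_length):
        return False  # too short (speckle) or too long (background edge)
    aspect_ratio = width / height
    return min_aspect <= aspect_ratio <= max_aspect


print(keep_contour(100.0, 30, 20))   # True: moderate length, ratio 1.5
print(keep_contour(10.0, 30, 20))    # False: too short
print(keep_contour(100.0, 100, 10))  # False: ratio 10 is too elongated
```

Only the contours that pass this test are drawn onto the canvas that feeds the second pass.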

    import cv2
    import numpy as np


    def unsharp_mask(img, blur_size=(5, 5), imgWeight=1.5, gaussianWeight=-0.5):
        # Sharpen by adding a negatively weighted Gaussian-blurred copy.
        gaussian = cv2.GaussianBlur(img, blur_size, 0)
        return cv2.addWeighted(img, imgWeight, gaussian, gaussianWeight, 0)


    def smoother_edges(img, first_blur_size, second_blur_size=(5, 5),
                       imgWeight=1.5, gaussianWeight=-0.5):
        # Blur first, then sharpen, to get smoother blob outlines.
        img = cv2.GaussianBlur(img, first_blur_size, 0)
        return unsharp_mask(img, second_blur_size, imgWeight, gaussianWeight)


    def close_image(img, size=(5, 5)):
        kernel = np.ones(size, np.uint8)
        return cv2.morphologyEx(img, cv2.MORPH_CLOSE, kernel)


    def open_image(img, size=(5, 5)):
        kernel = np.ones(size, np.uint8)
        return cv2.morphologyEx(img, cv2.MORPH_OPEN, kernel)


    def shrink_rect(rect, scale=0.8):
        # Scale a rotated rectangle around its center.
        center, (width, height), angle = rect
        return center, (width * scale, height * scale), angle


    def clahe(img, clip_limit=2.0):
        clahe_filter = cv2.createCLAHE(clipLimit=clip_limit, tileGridSize=(5, 5))
        return clahe_filter.apply(img)


    def get_sobel(img, size=3):
        # Second-order derivative in x. ksize must be passed by keyword: the
        # fifth positional argument of cv2.Sobel is dst, not the kernel size.
        sobelx64f = cv2.Sobel(img, cv2.CV_64F, 2, 0, ksize=size)
        abs_sobel64f = np.absolute(sobelx64f)
        # np.uint8 does not saturate values above 255.
        return np.uint8(abs_sobel64f)


    img = cv2.imread("blobs4.jpg")
    if img is None:
        raise FileNotFoundError("blobs4.jpg could not be read")
    imgc = img.copy()

    resize_times = 5
    img = cv2.resize(img, None, fx=1.0 / resize_times, fy=1.0 / resize_times)
    img = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

    # Adaptive part: map the mean high-frequency response to a CLAHE clipLimit.
    sobel = get_sobel(img)
    clip_limit = (-2.556) * np.sum(sobel) / (img.shape[0] * img.shape[1]) + 26.557
    if clip_limit < 1.0:
        clip_limit = 0.1
    if clip_limit > 8.0:
        clip_limit = 8

    img = clahe(img, clip_limit)
    img = unsharp_mask(img)

    # First contour pass: used only to filter out noisy contours.
    img_blurred = cv2.GaussianBlur(img.copy(), (5, 5), 0)
    canny = cv2.Canny(img_blurred, 35, 95)
    # [-2] selects the contour list in both OpenCV 3 (three return values)
    # and OpenCV 4 (two return values).
    cnts = cv2.findContours(canny.copy(), cv2.RETR_LIST, cv2.CHAIN_APPROX_SIMPLE)[-2]

    canvas = np.ones(img.shape, np.uint8)
    for c in cnts:
        l = cv2.arcLength(c, False)
        x, y, w, h = cv2.boundingRect(c)
        aspect_ratio = float(w) / h

        # Drop contours that are too long, too short, or too elongated.
        if l > 500 or l < 20:
            continue
        if aspect_ratio < 0.2 or aspect_ratio > 5:
            continue
        if l > 150 and (aspect_ratio > 10 or aspect_ratio < 0.1):
            continue

        cv2.drawContours(canvas, [c], -1, (255, 255, 255), 2)

    # Morphology: merge the surviving contours into solid blobs.
    canvas = close_image(canvas, (7, 7))
    img_blurred = cv2.GaussianBlur(canvas, (17, 17), 0)
    img_blurred = smoother_edges(img_blurred, (9, 9))
    kernel = np.ones((3, 3), np.uint8)
    eroded = cv2.erode(img_blurred, kernel)
    _, im_th = cv2.threshold(eroded, 50, 255, cv2.THRESH_BINARY)

    # Second contour pass: the actual blob detection.
    canny = cv2.Canny(im_th, 11, 33)
    cnts = cv2.findContours(canny.copy(), cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)[-2]

    sum_area = 0
    rect_list = []
    for c in cnts:
        rect = cv2.minAreaRect(c)
        _, (width, height), _ = rect
        sum_area += width * height
        rect_list.append(rect)

    # Guard against an empty contour list.
    mean_area = sum_area / len(cnts) if len(cnts) > 0 else 0

    # Keep only rectangles whose area is close to or above the mean area.
    for rect in rect_list:
        _, (width, height), _ = rect
        area = width * height

        if area > mean_area * 0.6:
            rect = shrink_rect(rect, 0.8)
            box = cv2.boxPoints(rect)
            # Scale back to the original resolution; np.int0 was removed in
            # NumPy 2.0, so cast with np.int32 instead.
            box = np.int32(box * resize_times)
            cv2.drawContours(imgc, [box], 0, (0, 255, 0), 1)

    imgc = cv2.resize(imgc, None, fx=0.5, fy=0.5)
    cv2.imshow("imgc", imgc)
    cv2.imwrite("result3.png", imgc)
    cv2.waitKey(0)
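
The final filtering step can also be looked at on its own, without OpenCV. The sketch below assumes the rectangles have already been reduced to hypothetical `(width, height)` pairs; `filter_by_mean_area` is an illustrative name, and the 0.6 ratio is the one used in the script above.

```python
def filter_by_mean_area(sizes, ratio=0.6):
    """Keep (width, height) pairs whose area exceeds ratio * mean area."""
    if not sizes:
        return []  # nothing detected, nothing to filter
    areas = [w * h for w, h in sizes]
    mean_area = sum(areas) / len(areas)
    return [size for size, area in zip(sizes, areas)
            if area > ratio * mean_area]


# Hypothetical blob sizes: three similar blobs and one small speck.
sizes = [(10, 10), (12, 9), (2, 3), (11, 10)]
print(filter_by_mean_area(sizes))  # [(10, 10), (12, 9), (11, 10)]
```

This only works because the blobs are roughly the same size; a few very large false detections would pull the mean up and could push real blobs below the threshold.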

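Overall, I think this is a very interesting problem, but a bit too broad to be answered here. The approach I presented should be treated as a road sign rather than a complete solution. The basic ideas are:

- Adaptive preprocessing.
- Finding contours twice: once for filtering, once for the actual classification.
- Filtering the blobs based on their mean size.

Thanks for reading, and good luck!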
