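I would like to model an RNN with LSTM cells in order to predict multiple output time series from multiple input time series. Specifically, I have 4 output time series, y1[t], y2[t], y3[t], y4[t], each of length 3,000 (t=0,...,2999). I also have 3 input time series, x1[t], x2[t], x3[t], each 3,000 seconds long (t=0,...,2999). The goal is to predict y1[t], ..., y4[t] using all the input time series up to the current time point, i.e.: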

y1[t] = f1(x1[k],x2[k],x3[k], k = 0,...,t)

y2[t] = f2(x1[k],x2[k],x3[k], k = 0,...,t)

y3[t] = f3(x1[k],x2[k],x3[k], k = 0,...,t)

y4[t] = f4(x1[k],x2[k],x3[k], k = 0,...,t)

To give the model long-term memory, I created a stateful RNN model following keras-stateful-lstm. My case differs from keras-stateful-lstm in that:

- there are multiple output time series

- there are multiple input time series

- the goal is to predict continuous time series

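My code runs. However, the model's predictions are poor even on simple data, so I would like to ask whether I am doing something wrong.

Here is my example code.

In the toy example, the input time series are simple cosine and sine waves: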

    import numpy as np

    def random_sample(len_timeseries=3000):
        Nchoice = 600
        x1 = np.cos(np.arange(0,len_timeseries)/float(1.0 + np.random.choice(Nchoice)))
        x2 = np.cos(np.arange(0,len_timeseries)/float(1.0 + np.random.choice(Nchoice)))
        x3 = np.sin(np.arange(0,len_timeseries)/float(1.0 + np.random.choice(Nchoice)))
        x4 = np.sin(np.arange(0,len_timeseries)/float(1.0 + np.random.choice(Nchoice)))
        y1 = np.random.random(len_timeseries)
        y2 = np.random.random(len_timeseries)
        y3 = np.random.random(len_timeseries)
        for t in range(3,len_timeseries):
            ## the output time series depend on input as follows:
            y1[t] = x1[t-2]
            y2[t] = x2[t-1]*x3[t-2]
            y3[t] = x4[t-3]
        y = np.array([y1,y2,y3]).T
        X = np.array([x1,x2,x3,x4]).T
        return y, X

    def generate_data(Nsequence = 1000):
        X_train = []
        y_train = []
        for isequence in range(Nsequence):
            y, X = random_sample()
            X_train.append(X)
            y_train.append(y)
        return np.array(X_train),np.array(y_train)

Please notice that y1 at time point t is simply the value of x1 at t - 2. Please also notice that y3 at time point t is simply the value of x4 at t - 3.
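The lag structure described here can be checked directly on a generated sample. The following is a minimal sketch rather than part of the original code: `make_sample` is a hypothetical, deterministic stand-in for `random_sample` that uses fixed periods and zeros for the first three outputs (both are assumptions, made only so the check is reproducible).

```python
import numpy as np

def make_sample(len_timeseries=50, periods=(7.0, 11.0, 13.0, 17.0)):
    # Hypothetical stand-in for random_sample: fixed periods instead of random ones.
    t = np.arange(len_timeseries)
    x1 = np.cos(t / periods[0])
    x2 = np.cos(t / periods[1])
    x3 = np.sin(t / periods[2])
    x4 = np.sin(t / periods[3])
    X = np.stack([x1, x2, x3, x4], axis=1)  # (len_timeseries, 4)
    y = np.zeros((len_timeseries, 3))       # first 3 steps are left at 0 here
    y[3:, 0] = x1[1:-2]                     # y1[t] = x1[t-2]
    y[3:, 1] = x2[2:-1] * x3[1:-2]          # y2[t] = x2[t-1] * x3[t-2]
    y[3:, 2] = x4[:-3]                      # y3[t] = x4[t-3]
    return y, X

y, X = make_sample()

# Verify each lag relation time step by time step.
lags_ok = all(
    np.isclose(y[t, 0], X[t - 2, 0])
    and np.isclose(y[t, 1], X[t - 1, 1] * X[t - 2, 2])
    and np.isclose(y[t, 2], X[t - 3, 3])
    for t in range(3, len(y))
)
```

Because the longest lag is three steps, a model only needs to remember the last three inputs to reproduce y1 and y3 exactly.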

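Using these functions, I generated 100 sets of time series y1, y2, y3, x1, x2, x3, x4. Half of them are used as training data and the other half as testing data.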

    Nsequence = 100
    prop = 0.5
    Ntrain = int(Nsequence*prop)  ## must be an integer to be usable as a slice index
    X, y = generate_data(Nsequence)
    X_train = X[:Ntrain,:,:]
    X_test  = X[Ntrain:,:,:]
    y_train = y[:Ntrain,:,:]
    y_test  = y[Ntrain:,:,:]

X and y are both three-dimensional, with the following shapes:

    # X.shape = (N sequence, length of time series, N input features)
    # y.shape = (N sequence, length of time series, N targets)
    print(X.shape, y.shape)
    > (100, 3000, 4) (100, 3000, 3)

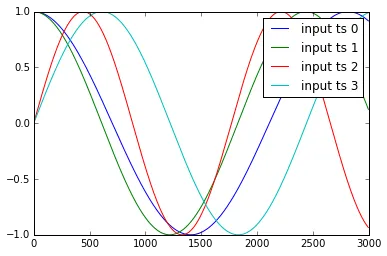

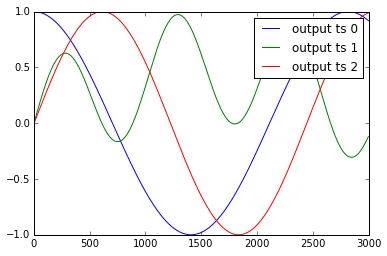

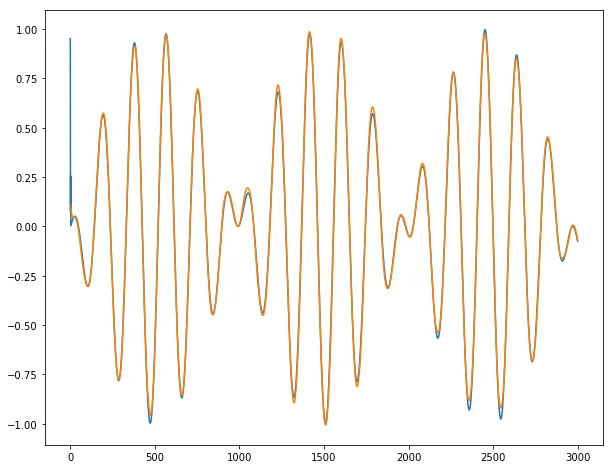

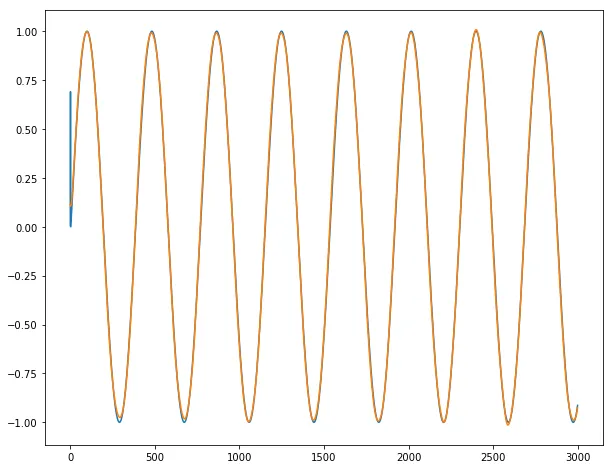

以下是时间序列y1,..y4和x1,..,x3的示例:

I standardized the data:

    def standardize(X_train,stat=None):
        ## X_train is 3 dimensional e.g. (Nsample,len_timeseries, Nfeature)
        ## standardization is done with respect to the 3rd dimension
        if stat is None:
            featmean = np.array([np.nanmean(X_train[:,:,itrain]) for itrain in range(X_train.shape[2])]).reshape(1,1,X_train.shape[2])
            featstd = np.array([np.nanstd(X_train[:,:,itrain]) for itrain in range(X_train.shape[2])]).reshape(1,1,X_train.shape[2])
            stat = {"featmean":featmean,"featstd":featstd}
        else:
            featmean = stat["featmean"]
            featstd = stat["featstd"]
        X_train_s = (X_train - featmean)/featstd
        return X_train_s, stat

    X_train_s, X_stat = standardize(X_train,stat=None)
    X_test_s, _ = standardize(X_test,stat=X_stat)
    y_train_s, y_stat = standardize(y_train,stat=None)
    y_test_s, _ = standardize(y_test,stat=y_stat)
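For reference, the same per-feature statistics can be computed without the list comprehensions by reducing over the sequence and time axes at once. This is only a sketch of an equivalent formulation; `standardize_axes` and the demo array are hypothetical names and data introduced here for illustration.

```python
import numpy as np

def standardize_axes(X, stat=None):
    # Hypothetical variant of standardize: statistics are taken over the
    # sequence and time axes, leaving one mean/std per feature.
    if stat is None:
        stat = {
            "featmean": np.nanmean(X, axis=(0, 1), keepdims=True),  # (1, 1, Nfeature)
            "featstd": np.nanstd(X, axis=(0, 1), keepdims=True),    # (1, 1, Nfeature)
        }
    return (X - stat["featmean"]) / stat["featstd"], stat

# Demo data: 6 sequences, 20 time steps, 4 features.
rng = np.random.RandomState(0)
X_demo = rng.normal(loc=5.0, scale=2.0, size=(6, 20, 4))
X_demo_s, demo_stat = standardize_axes(X_demo)
```

After this transform each feature has mean 0 and standard deviation 1 over the data the statistics were computed from; as in the original code, the test set reuses the training statistics by passing `stat`.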

Create a stateful RNN model with 10 LSTM hidden neurons:

    from keras.models import Sequential
    from keras.layers import Dense, Activation, Dropout, LSTM

    def create_stateful_model(hidden_neurons):
        # create the stateful LSTM network (it is fit later, one time step at a time)
        model = Sequential()
        model.add(LSTM(hidden_neurons,
                       batch_input_shape=(1, 1, X_train.shape[2]),
                       return_sequences=False,
                       stateful=True))
        model.add(Dropout(0.5))
        model.add(Dense(y_train.shape[2]))
        model.add(Activation("linear"))
        model.compile(loss='mean_squared_error', optimizer="rmsprop",metrics=['mean_squared_error'])
        return model

    model = create_stateful_model(10)
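To get a sense of how small this model is, the number of trainable parameters can be worked out by hand. The sketch below assumes the usual LSTM parameterization (four gates, each with input weights, recurrent weights and a bias, no peephole connections); the helper names are hypothetical and not part of Keras.

```python
def lstm_param_count(n_inputs, n_hidden):
    # Four gates (input, forget, cell candidate, output), each with
    # input weights, recurrent weights and a bias vector.
    return 4 * (n_hidden * (n_inputs + n_hidden) + n_hidden)

def dense_param_count(n_inputs, n_outputs):
    # Weight matrix plus one bias per output.
    return n_inputs * n_outputs + n_outputs

n_lstm = lstm_param_count(n_inputs=4, n_hidden=10)    # 4 input features, 10 hidden units
n_dense = dense_param_count(n_inputs=10, n_outputs=3) # 10 hidden units, 3 targets
total = n_lstm + n_dense
```

Under these assumptions the LSTM layer has 600 parameters and the dense layer 33, for 633 in total. The dropout and activation layers add none.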

The following code is used to train and validate the RNN model:

    def get_R2(y_pred,y_test):
        ## y_pred, y_test: (Nsample, len_timeseries, Noutput)
        ## R2 is computed for each output
        overall_mean = np.nanmean(y_test)
        SSres = np.nanmean( (y_pred - y_test)**2 ,axis=0).mean(axis=0)
        SStot = np.nanmean( (y_test - overall_mean)**2 ,axis=0).mean(axis=0)
        R2 = 1 - SSres / SStot
        print("<R2 testing> target 1:",R2[0],"target 2:",R2[1],"target 3:",R2[2])
        return R2
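Note that `get_R2` subtracts a single mean taken over all targets. For standardized targets that is close to each target's own mean, but a variant that uses per-target means is sketched below for comparison. `r2_per_target` and the demo data are hypothetical and not part of the original code.

```python
import numpy as np

def r2_per_target(y_pred, y_true):
    # y_pred, y_true: (Nsample, len_timeseries, Noutput)
    # Assumption: the mean is taken separately for each target (last axis).
    target_mean = np.nanmean(y_true, axis=(0, 1), keepdims=True)
    ss_res = np.nansum((y_true - y_pred) ** 2, axis=(0, 1))
    ss_tot = np.nansum((y_true - target_mean) ** 2, axis=(0, 1))
    return 1.0 - ss_res / ss_tot

rng = np.random.RandomState(0)
y_true = rng.normal(size=(5, 30, 3))

# A perfect prediction gives R2 = 1 for every target.
r2_perfect = r2_per_target(y_true, y_true)

# Predicting each target's mean everywhere gives R2 = 0.
mean_pred = np.broadcast_to(y_true.mean(axis=(0, 1)), y_true.shape)
r2_mean = r2_per_target(mean_pred, y_true)
```

These two reference points help in reading the log: a negative R2 means the predictions are worse than always predicting the target's mean.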

    def reshape_batch_input(X_t,y_t=None):
        X_t = np.array(X_t).reshape(1,1,len(X_t)) ## (1,1,4) dimension
        if y_t is not None:
            y_t = np.array([y_t]) ## (1,3)
        return X_t,y_t

    def fit_stateful(model,X_train,y_train,X_test,y_test,nb_epoch=8):
        '''
        reference: http://philipperemy.github.io/keras-stateful-lstm/

        X_train: (N_time_series, len_time_series, N_features) = (10,000, 3,600 (max), 2),
        y_train: (N_time_series, len_time_series, N_output) = (10,000, 3,600 (max), 4)
        '''
        max_len = X_train.shape[1]
        print("X_train.shape(Nsequence =",X_train.shape[0],"len_timeseries =",X_train.shape[1],"Nfeats =",X_train.shape[2],")")
        print("y_train.shape(Nsequence =",y_train.shape[0],"len_timeseries =",y_train.shape[1],"Ntargets =",y_train.shape[2],")")
        print('Train...')
        for epoch in range(nb_epoch):
            print('___________________________________')
            print("epoch", epoch+1, "out of ",nb_epoch)
            ## ---------- ##
            ##  training  ##
            ## ---------- ##
            mean_tr_acc = []
            mean_tr_loss = []
            for s in range(X_train.shape[0]):
                ## one train_on_batch call per time step; the LSTM state carries over between calls
                for t in range(max_len):
                    X_st = X_train[s][t]
                    y_st = y_train[s][t]
                    if np.any(np.isnan(y_st)):
                        break
                    X_st,y_st = reshape_batch_input(X_st,y_st)
                    tr_loss, tr_acc = model.train_on_batch(X_st,y_st)
                    mean_tr_acc.append(tr_acc)
                    mean_tr_loss.append(tr_loss)
                ## reset the state at the end of each sequence
                model.reset_states()
            ##print('accuracy training = {}'.format(np.mean(mean_tr_acc)))
            print('<loss (mse) training> {}'.format(np.mean(mean_tr_loss)))
            ## ---------- ##
            ##  testing   ##
            ## ---------- ##
            y_pred = predict_stateful(model,X_test)
            eva = get_R2(y_pred,y_test)
        return model, eva, y_pred

    def predict_stateful(model,X_test):
        y_pred = []
        max_len = X_test.shape[1]
        for s in range(X_test.shape[0]):
            y_s_pred = []
            for t in range(max_len):
                X_st = X_test[s][t]
                if np.any(np.isnan(X_st)):
                    ## the rest of y is NA
                    y_s_pred.extend([np.nan]*(max_len-len(y_s_pred)))
                    break
                X_st,_ = reshape_batch_input(X_st)
                y_st_pred = model.predict_on_batch(X_st)
                y_s_pred.append(y_st_pred[0].tolist())
            y_pred.append(y_s_pred)
            ## reset the state at the end of each sequence
            model.reset_states()
        y_pred = np.array(y_pred)
        return y_pred
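Both loops above rely on the same pattern: feed one time step per call, let the hidden state persist between calls, and reset it at the end of each sequence. The framework-free sketch below illustrates that pattern only. `TinyStatefulRNN` is a hypothetical plain tanh RNN, not Keras and not an LSTM, and it performs no training.

```python
import numpy as np

class TinyStatefulRNN:
    # Hypothetical tanh RNN cell used only to illustrate state carry-over.
    def __init__(self, n_inputs, n_hidden, seed=0):
        rng = np.random.RandomState(seed)
        self.W = rng.normal(scale=0.5, size=(n_inputs, n_hidden))
        self.U = rng.normal(scale=0.5, size=(n_hidden, n_hidden))
        self.h = np.zeros(n_hidden)

    def step(self, x_t):
        # One time step; the hidden state persists between calls.
        self.h = np.tanh(x_t @ self.W + self.h @ self.U)
        return self.h.copy()

    def reset_states(self):
        self.h = np.zeros_like(self.h)

rnn = TinyStatefulRNN(n_inputs=4, n_hidden=10)
sequences = np.random.RandomState(1).normal(size=(2, 25, 4))

outputs = []
for seq in sequences:
    outputs.append([rnn.step(x_t) for x_t in seq])
    rnn.reset_states()  # start the next sequence from a zero state
outputs = np.array(outputs)  # (2, 25, 10)

# Re-running the first sequence after a reset reproduces its outputs.
rerun_first = np.array([rnn.step(x_t) for x_t in sequences[0]])
rnn.reset_states()

# Without a reset, the state left over from another sequence changes the result.
for x_t in sequences[1]:
    rnn.step(x_t)
no_reset = np.array([rnn.step(x_t) for x_t in sequences[0]])
```

This covers only the forward pass. How far gradients reach back in time during training is a separate matter that the sketch does not model.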

    model, train_metric, y_pred = fit_stateful(model,
                                               X_train_s,y_train_s,
                                               X_test_s,y_test_s,nb_epoch=15)

The output is as follows:

X_train.shape(Nsequence = 15 len_timeseries = 3000 Nfeats = 4 )

y_train.shape(Nsequence = 15 len_timeseries = 3000 Ntargets = 3 )

Train...

___________________________________

epoch 1 out of 15

<loss (mse) training> 0.414115458727

<R2 testing> target 1: 0.664464304688 target 2: -0.574523052322 target 3: 0.526447813052

___________________________________

epoch 2 out of 15

<loss (mse) training> 0.394549429417

<R2 testing> target 1: 0.361516087033 target 2: -0.724583671831 target 3: 0.795566178787

___________________________________

epoch 3 out of 15

<loss (mse) training> 0.403199136257

<R2 testing> target 1: 0.09610702779 target 2: -0.468219774909 target 3: 0.69419269042

___________________________________

epoch 4 out of 15

<loss (mse) training> 0.406423777342

<R2 testing> target 1: 0.469149270848 target 2: -0.725592048946 target 3: 0.732963522766

___________________________________

epoch 5 out of 15

<loss (mse) training> 0.408153116703

<R2 testing> target 1: 0.400821776652 target 2: -0.329415365214 target 3: 0.2578432553

___________________________________

epoch 6 out of 15

<loss (mse) training> 0.421062678099

<R2 testing> target 1: -0.100464591586 target 2: -0.232403824523 target 3: 0.570606489959

___________________________________

epoch 7 out of 15

<loss (mse) training> 0.417774856091

<R2 testing> target 1: 0.320094445321 target 2: -0.606375769083 target 3: 0.349876223119

___________________________________

epoch 8 out of 15

<loss (mse) training> 0.427440851927

<R2 testing> target 1: 0.489543715713 target 2: -0.445328806611 target 3: 0.236463139804

___________________________________

epoch 9 out of 15

<loss (mse) training> 0.422931671143

<R2 testing> target 1: -0.31006468223 target 2: -0.322621276474 target 3: 0.122573123871

___________________________________

epoch 10 out of 15

<loss (mse) training> 0.43609803915

<R2 testing> target 1: 0.459111316554 target 2: -0.313382405804 target 3: 0.636854743292

___________________________________

epoch 11 out of 15

<loss (mse) training> 0.433844655752

<R2 testing> target 1: -0.0161015052703 target 2: -0.237462995323 target 3: 0.271788109459

___________________________________

epoch 12 out of 15

<loss (mse) training> 0.437297314405

<R2 testing> target 1: -0.493665758658 target 2: -0.234236263092 target 3: 0.047264439493

___________________________________

epoch 13 out of 15

<loss (mse) training> 0.470605045557

<R2 testing> target 1: 0.144443089961 target 2: -0.333210874982 target 3: -0.00432615142135

___________________________________

epoch 14 out of 15

<loss (mse) training> 0.444566756487

<R2 testing> target 1: -0.053982119103 target 2: -0.0676577449316 target 3: -0.12678037186

___________________________________

epoch 15 out of 15

<loss (mse) training> 0.482106208801

<R2 testing> target 1: 0.208482181828 target 2: -0.402982670798 target 3: 0.366757778713

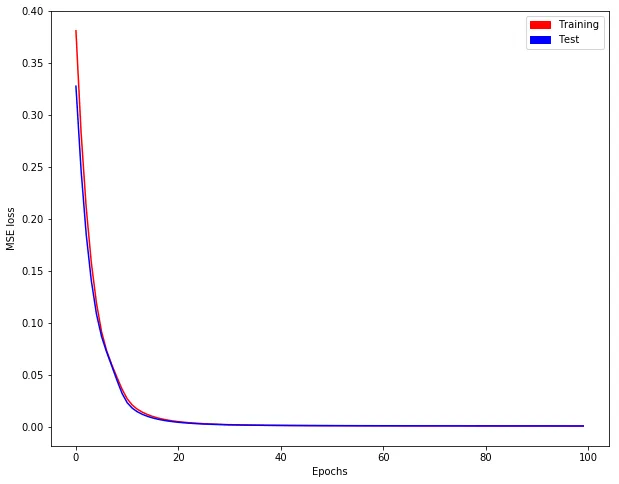

As you can see, the training loss is not decreasing!!

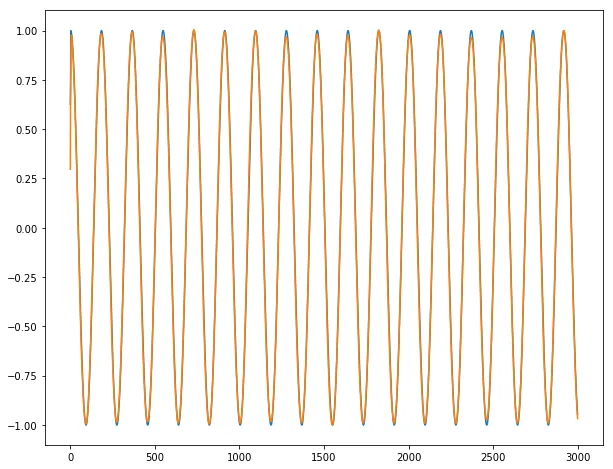

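Since target time series 1 and 3 have very simple relationships with the input time series (y1[t] = x1[t-2], y3[t] = x4[t-3]), I expected perfect prediction performance. However, the testing R2 at each epoch shows that this is not the case. The R2 values at the final epoch are only about 0.2 and 0.36. Clearly, the algorithm is not converging. I am very puzzled by this result. Please let me know what I am missing and why the algorithm is not converging.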

How about some hyperparameter optimization with the hyperopt package or the hyperas wrapper? - StatsSorceress