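I'm using a fully convolutional autoencoder to colorize black-and-white images, but the output has a checkerboard pattern that I want to get rid of. The checkerboard artifacts I've seen elsewhere have always been much larger than mine, and the usual fix (so I've been told) is to replace all unpooling operations with bilinear upsampling.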

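But I can't simply replace the unpooling operations, because I work with images of different sizes. I need the unpooling, otherwise the output tensor could end up a different size from the original.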

TL;DR: How can I get rid of these checkerboard artifacts without replacing the unpooling operations?

    import torch.nn as nn


    class AE(nn.Module):
        def __init__(self):
            super(AE, self).__init__()
            self.leaky_reLU = nn.LeakyReLU(0.2)
            # return_indices=True so the decoder can unpool to the same positions
            self.pool = nn.MaxPool2d(kernel_size=2, stride=2, padding=1, return_indices=True)
            self.unpool = nn.MaxUnpool2d(kernel_size=2, stride=2, padding=1)
            self.softmax = nn.Softmax2d()

            # encoder convolutions
            self.conv1 = nn.Conv2d(in_channels=3, out_channels=64, kernel_size=3, stride=1, padding=1)
            self.conv2 = nn.Conv2d(in_channels=64, out_channels=128, kernel_size=3, stride=1, padding=1)
            self.conv3 = nn.Conv2d(in_channels=128, out_channels=256, kernel_size=3, stride=1, padding=1)
            self.conv4 = nn.Conv2d(in_channels=256, out_channels=512, kernel_size=3, stride=1, padding=1)
            self.conv5 = nn.Conv2d(in_channels=512, out_channels=1024, kernel_size=3, stride=1, padding=1)

            # decoder convolutions (stride 1, so they do not change the spatial size)
            self.conv6 = nn.ConvTranspose2d(in_channels=1024, out_channels=512, kernel_size=3, stride=1, padding=1)
            self.conv7 = nn.ConvTranspose2d(in_channels=512, out_channels=256, kernel_size=3, stride=1, padding=1)
            self.conv8 = nn.ConvTranspose2d(in_channels=256, out_channels=128, kernel_size=3, stride=1, padding=1)
            self.conv9 = nn.ConvTranspose2d(in_channels=128, out_channels=64, kernel_size=3, stride=1, padding=1)
            self.conv10 = nn.ConvTranspose2d(in_channels=64, out_channels=2, kernel_size=3, stride=1, padding=1)

        def forward(self, x):
            # encoder: remember each pre-pool size and the pooling indices
            x = self.conv1(x)
            x = self.leaky_reLU(x)
            size1 = x.size()
            x, indices1 = self.pool(x)

            x = self.conv2(x)
            x = self.leaky_reLU(x)
            size2 = x.size()
            x, indices2 = self.pool(x)

            x = self.conv3(x)
            x = self.leaky_reLU(x)
            size3 = x.size()
            x, indices3 = self.pool(x)

            x = self.conv4(x)
            x = self.leaky_reLU(x)
            size4 = x.size()
            x, indices4 = self.pool(x)

            ######################
            x = self.conv5(x)
            x = self.leaky_reLU(x)
            x = self.conv6(x)
            x = self.leaky_reLU(x)
            ######################

            # decoder: unpool back to the recorded sizes, in reverse order
            x = self.unpool(x, indices4, output_size=size4)
            x = self.conv7(x)
            x = self.leaky_reLU(x)

            x = self.unpool(x, indices3, output_size=size3)
            x = self.conv8(x)
            x = self.leaky_reLU(x)

            x = self.unpool(x, indices2, output_size=size2)
            x = self.conv9(x)
            x = self.leaky_reLU(x)

            x = self.unpool(x, indices1, output_size=size1)
            x = self.conv10(x)
            x = self.softmax(x)
            return x

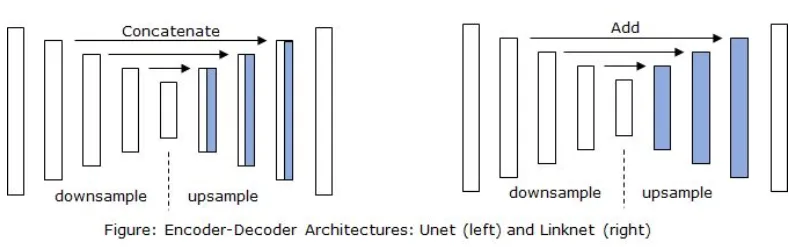

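In the forward function, you can keep each encoder layer's output under its own name, so that the corresponding decoder layer can access that encoder feature and concatenate or add it to the upsampled feature in the decoder. I am not sure, but ConvTranspose2d with stride=2 should be used for upsampling; it is better than MaxUnpool2d because with ConvTranspose2d the upsampled features are learned. As I already mentioned: upsample first, then add/concatenate, and finally convolve. - Kaushik Roy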
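
A minimal sketch of what the comment above describes, not the asker's network: a two-level encoder/decoder in which every encoder output keeps its own name, the decoder upsamples with a stride-2 `ConvTranspose2d`, concatenates the matching encoder feature, and then applies a convolution. The class name `SkipAE`, the layer names, and the channel widths are all hypothetical and chosen only for illustration, and the final `Softmax2d` from the question is left out. The `output_size` argument to the transposed convolution is what keeps the sizes aligned when the input height or width is odd.

```python
import torch
import torch.nn as nn


class SkipAE(nn.Module):
    """Hypothetical two-level autoencoder with learned upsampling and skip connections."""

    def __init__(self):
        super().__init__()
        self.act = nn.LeakyReLU(0.2)
        self.pool = nn.MaxPool2d(kernel_size=2, stride=2)

        # encoder (channel widths are illustrative assumptions)
        self.enc1 = nn.Conv2d(3, 16, kernel_size=3, padding=1)
        self.enc2 = nn.Conv2d(16, 32, kernel_size=3, padding=1)
        self.mid = nn.Conv2d(32, 32, kernel_size=3, padding=1)

        # decoder: learned x2 upsampling, then a conv over [upsampled, skip]
        self.up2 = nn.ConvTranspose2d(32, 32, kernel_size=2, stride=2)
        self.dec2 = nn.Conv2d(32 + 32, 16, kernel_size=3, padding=1)
        self.up1 = nn.ConvTranspose2d(16, 16, kernel_size=2, stride=2)
        self.dec1 = nn.Conv2d(16 + 16, 2, kernel_size=3, padding=1)

    def forward(self, x):
        # encoder: keep each output under its own name for the skips
        e1 = self.act(self.enc1(x))               # full resolution
        e2 = self.act(self.enc2(self.pool(e1)))   # 1/2 resolution
        b = self.act(self.mid(self.pool(e2)))     # 1/4 resolution

        # decoder: upsample to the exact size of the matching encoder feature,
        # concatenate along the channel axis, then convolve
        d2 = self.up2(b, output_size=list(e2.shape[-2:]))
        d2 = self.act(self.dec2(torch.cat([d2, e2], dim=1)))
        d1 = self.up1(d2, output_size=list(e1.shape[-2:]))
        return self.dec1(torch.cat([d1, e1], dim=1))


if __name__ == "__main__":
    model = SkipAE()
    out = model(torch.randn(1, 3, 37, 51))  # odd height and width on purpose
    print(out.shape)
```

If bilinear upsampling is preferred over a transposed convolution, `torch.nn.functional.interpolate` also accepts an explicit `size=` argument, so the upsampled tensor can be matched to the stored encoder feature's height and width in the same way.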