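I used the rgl package to create an animation from a motion gesture dataset. It is not a package designed specifically for gesture data, but it works well enough for it.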

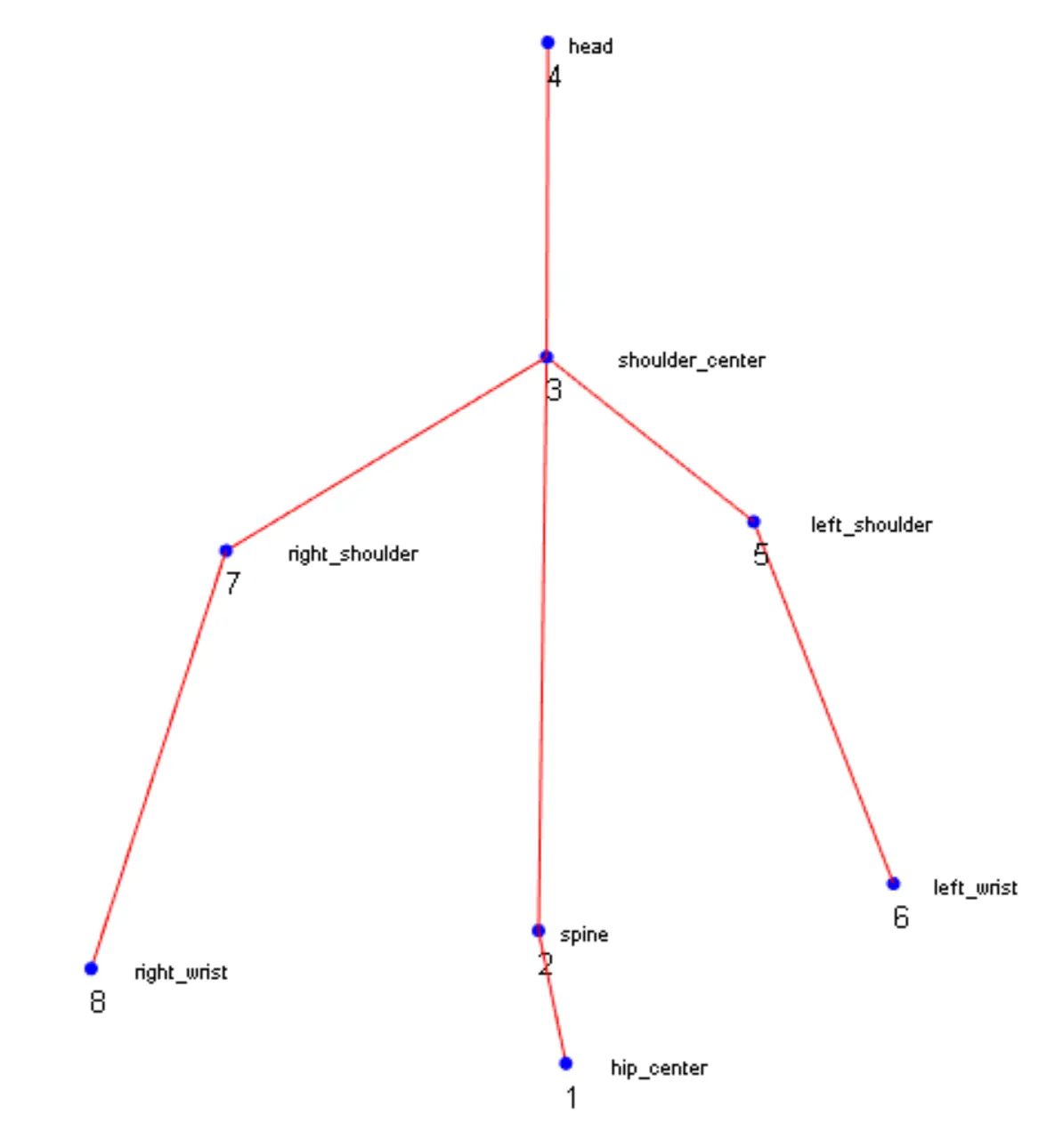

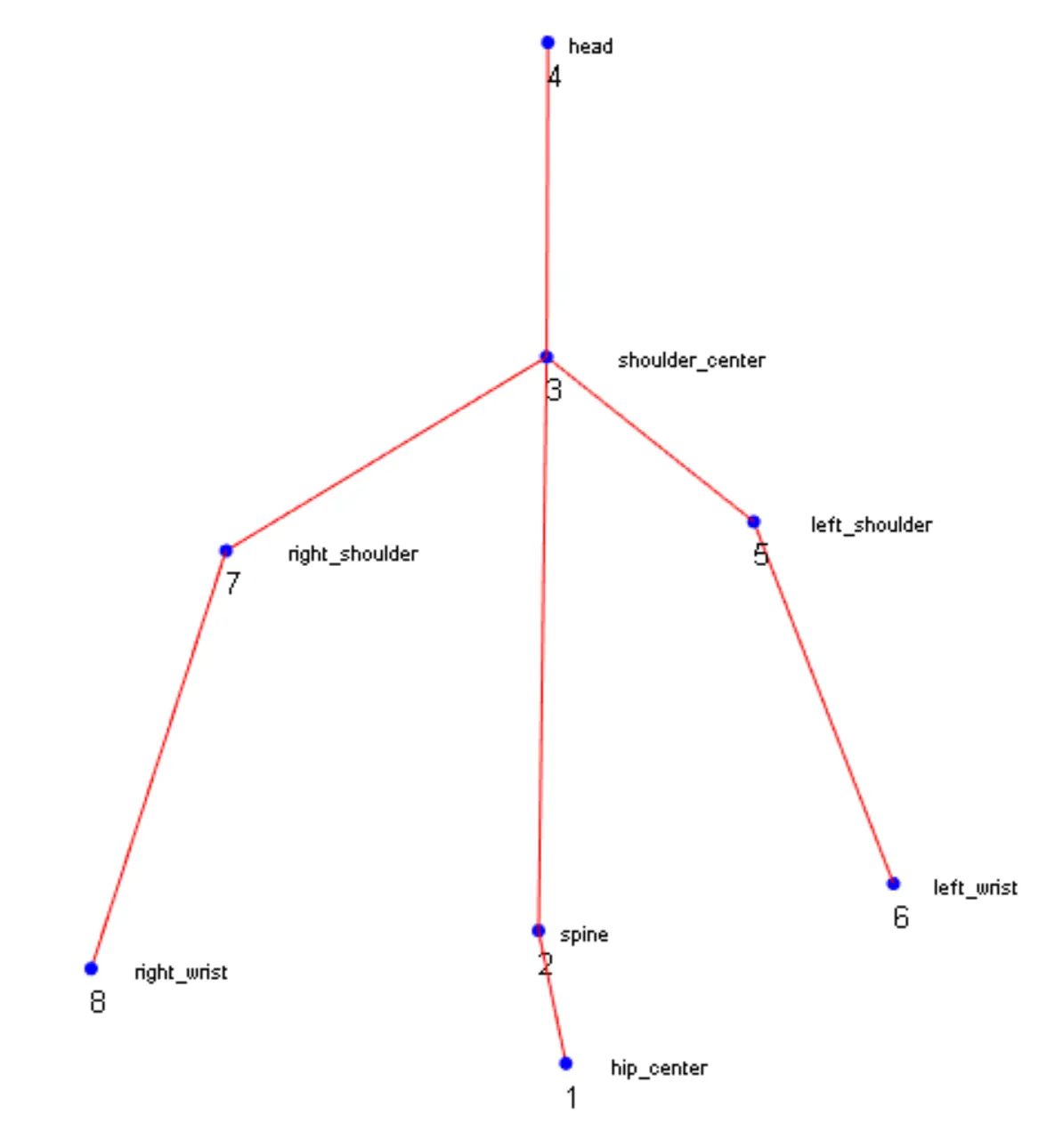

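In the example below we have gesture data for 8 body points: hip center, spine, shoulder center, head, left shoulder, left wrist, right shoulder, and right wrist. The subject starts with both hands down and then moves the right arm upward.

I limited the dataset to 6 time observations (seconds); otherwise it would be too large to post here.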

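Each row of the original dataset corresponds to one time observation, and the coordinates of each body point are stored as a group of 4 values (every four columns is one body point). So in each row we have "x", "y", "z", "br" for the hip center, then "x", "y", "z", "br" for the spine, and so on. Here "br" is always 1; it acts as a separator between the three coordinates (x, y, z) of consecutive body points.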
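The column layout described above comes down to simple index arithmetic. As a minimal sketch (the helper name `xyz.columns` is hypothetical and not part of the original answer), the columns holding x, y, z for the k-th body point could be computed like this:

```r
# Hypothetical helper: columns holding x, y, z for the k-th body point,
# assuming 4 columns per point with the 4th ("br") acting as a separator.
xyz.columns <- function(k, coordinates = 4) {
  first <- (k - 1) * coordinates + 1
  first:(first + 2)
}

xyz.columns(1)  # 1 2 3
xyz.columns(3)  # 9 10 11
```

With the dataset defined below, `DATA.time.obs[1, xyz.columns(3)]` would then pick out the third body point at the first time observation.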

Here is the original (restricted) dataset:

    DATA.time.obs <- rbind(
      c(-0.06431,0.101546,2.990067,1,-0.091378,0.165703,3.029513,1,-0.090019,0.518603,3.022399,1,-0.042211,0.687271,2.987086,1,-0.231384,0.419869,2.953286,1,-0.299824,0.173991,2.882627,1,0.063367,0.399478,3.136306,1,0.134907,0.176191,3.159998,1),
      c(-0.067185,0.102249,2.990185,1,-0.095083,0.166589,3.028688,1,-0.093098,0.519146,3.019775,1,-0.043808,0.687041,2.987671,1,-0.234622,0.417481,2.94581,1,-0.300324,0.169313,2.869782,1,0.056816,0.398384,3.135578,1,0.134536,0.180875,3.162843,1),
      c(-0.069282,0.102964,2.989943,1,-0.098594,0.167465,3.027638,1,-0.097184,0.52169,3.019556,1,-0.046626,0.695406,2.989244,1,-0.23478,0.417057,2.943475,1,-0.300101,0.168628,2.860515,1,0.053793,0.395444,3.143226,1,0.134175,0.182816,3.172053,1),
      c(-0.070924,0.102948,2.989369,1,-0.101156,0.167554,3.026474,1,-0.100244,0.522901,3.018919,1,-0.049834,0.696996,2.987933,1,-0.235301,0.416329,2.939331,1,-0.301339,0.170203,2.85497,1,0.04762,0.390872,3.142792,1,0.14041,0.186844,3.182172,1),
      c(-0.071973,0.103372,2.988788,1,-0.103215,0.16776,3.025409,1,-0.102334,0.52281,3.019341,1,-0.051298,0.697003,2.991192,1,-0.235497,0.414859,2.935161,1,-0.297678,0.15788,2.833734,1,0.045973,0.386249,3.147609,1,0.14408,0.1916,3.204443,1),
      c(-0.073223,0.104598,2.988132,1,-0.106597,0.168971,3.022554,1,-0.106778,0.522688,3.015138,1,-0.051867,0.697781,2.990767,1,-0.236137,0.414773,2.931317,1,-0.297552,0.153462,2.827027,1,0.039316,0.39146,3.166831,1,0.175061,0.214336,3.207459,1))

For each time point we can build a matrix in which each row is a body point and the columns are the coordinates:

    time.point <- 1
    coordinates <- 4                                    # x, y, z, br
    body.points <- ncol(DATA.time.obs) / coordinates    # 8 body points
    total.time <- nrow(DATA.time.obs)                   # 6 time observations

    # Reshape the selected row into one row per body point
    DATA.matrix <- matrix(DATA.time.obs[time.point, ],
                          nrow = body.points, ncol = coordinates, byrow = TRUE)
    colnames(DATA.matrix) <- c("x", "y", "z", "br")
    rownames(DATA.matrix) <- c("hip_center", "spine", "shoulder_center", "head",
                               "left_shoulder", "left_wrist", "right_shoulder",
                               "right_wrist")

So at each time point we have a matrix like this:

                            x        y        z br
    hip_center      -0.064310 0.101546 2.990067  1
    spine           -0.091378 0.165703 3.029513  1
    shoulder_center -0.090019 0.518603 3.022399  1
    head            -0.042211 0.687271 2.987086  1
    left_shoulder   -0.231384 0.419869 2.953286  1
    left_wrist      -0.299824 0.173991 2.882627  1
    right_shoulder   0.063367 0.399478 3.136306  1
    right_wrist      0.134907 0.176191 3.159998  1
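If it is more convenient to have every time point available at once, the same reshaping can be applied to the whole dataset. The following is only a sketch in base R; the function name `wide.to.array` is an assumption made for illustration and is not part of the original answer.

```r
# Hypothetical helper: turn a wide matrix (one row per time observation)
# into a 3-D array indexed as [body point, coordinate, time].
wide.to.array <- function(wide, coordinates = 4) {
  body.points <- ncol(wide) / coordinates
  stopifnot(body.points == round(body.points))
  # t(wide) stores each time observation contiguously; within one observation
  # the coordinate index varies fastest, then the body point.
  arr <- array(t(wide), dim = c(coordinates, body.points, nrow(wide)))
  aperm(arr, c(2, 1, 3))
}

# Toy input: 2 time observations, 2 body points, 4 values per point
toy <- matrix(1:16, nrow = 2, byrow = TRUE)
wide.to.array(toy)[2, 3, 2]  # 15: second point, third coordinate, second time
```

Applied to the data above, `wide.to.array(DATA.time.obs)[, , 1]` should hold the same values as the matrix shown for the first time point, without the row and column names.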

Now we use rgl to plot the data from this matrix:

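    library(rgl)

    x <- DATA.matrix[, "x"]
    y <- DATA.matrix[, "y"]
    z <- DATA.matrix[, "z"]

    open3d()
    # Reset the view orientation, then rotate the scene about the y axis
    rgl.viewpoint(userMatrix = rotationMatrix(0, 0, 0, 0))
    U <- par3d("userMatrix")
    par3d(userMatrix = rotate3d(U, -0.7 * pi, 0, 1, 0))

    # Body points, labeled 1 to 8 in row order
    points3d(x = x, y = y, z = z, size = 6, col = "blue")
    text3d(x = x, y = y, z = z, texts = 1:8, adj = c(-0.1, 1.5), cex = 0.8)

    # Consecutive pairs of indices define the segments between body points
    CONNECTOR <- c(1,2, 2,3, 3,4, 3,5, 3,7, 5,6, 7,8)
    segments3d(x = x[CONNECTOR], y = y[CONNECTOR], z = z[CONNECTOR], col = "red")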
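The CONNECTOR vector is read two indices at a time, each pair giving the endpoints of one segment. As a sketch of how such a vector could be derived from body point names instead of typed by hand (the `bones` table and `point.names` vector are illustrative assumptions, mirroring the row names used earlier):

```r
# Body points in the same order as the rows of the coordinate matrix
point.names <- c("hip_center", "spine", "shoulder_center", "head",
                 "left_shoulder", "left_wrist", "right_shoulder",
                 "right_wrist")

# Hypothetical edge list: one row per segment (from, to)
bones <- rbind(c("hip_center",      "spine"),
               c("spine",           "shoulder_center"),
               c("shoulder_center", "head"),
               c("shoulder_center", "left_shoulder"),
               c("shoulder_center", "right_shoulder"),
               c("left_shoulder",   "left_wrist"),
               c("right_shoulder",  "right_wrist"))

# Transposing makes each (from, to) pair adjacent when flattened
CONNECTOR <- match(t(bones), point.names)
CONNECTOR  # 1 2 2 3 3 4 3 5 3 7 5 6 7 8
```

Naming the segments this way makes it easier to see which joints are connected, and a misspelled name shows up as an NA rather than as a silently wrong segment.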

This produces a 3D plot of the eight labeled body points, joined by red segments.

To create the animation, we can put all of this into a function and call it for each time point with lapply.

    movement.points <- function(DATA, time.point, CONNECTOR, body.points, coordinates) {
      # Reshape the row for this time point into one row per body point
      DATA.time <- matrix(DATA[time.point, ],
                          nrow = body.points, ncol = coordinates, byrow = TRUE)
      x <- DATA.time[, 1]
      y <- DATA.time[, 2]
      z <- DATA.time[, 3]

      # Clear the scene and draw the new frame
      next3d(reuse = FALSE)
      points3d(x = x, y = y, z = z, size = 6, col = "blue")
      segments3d(x = x[CONNECTOR], y = y[CONNECTOR], z = z[CONNECTOR], col = "red")

      # Pause so that each frame stays visible
      Sys.sleep(0.5)
    }
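The function above draws a single frame, so something still has to call it once per time observation. A minimal sketch of such a driver using lapply follows; the name `animate.frames` is hypothetical and not part of the original answer, and the drawing function is passed in as an argument so the driver itself does not depend on rgl.

```r
# Hypothetical driver: calls draw(DATA, time.point, ...) once per row of DATA.
# The drawing function is used only for its side effects, so the list
# returned by lapply is discarded.
animate.frames <- function(DATA, draw, ...) {
  invisible(lapply(seq_len(nrow(DATA)),
                   function(time.point) draw(DATA, time.point, ...)))
}

# Intended use with the objects defined earlier (needs rgl and an open device):
# open3d()
# animate.frames(DATA.time.obs, movement.points,
#                CONNECTOR = CONNECTOR,
#                body.points = body.points,
#                coordinates = coordinates)
```

Since movement.points already pauses for half a second per frame, the six observations should play back in roughly three seconds.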

I know this solution is not elegant, but it works.

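library(sos); findFn("{motion capture}") does not turn up anything useful. There is a cultural issue here: it is probably possible to do cool things with R, but if all the professionals working on motion capture use MATLAB or Python, then that is where the work gets done. I would definitely look at what has already been done in Python, and look into interfacing Python with R for any heavy statistical analysis that has not been implemented yet... - Ben Bolker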