你可以使用

mllib包来计算每行TF-IDF的

L2范数。然后将表格自乘,以得到余弦相似度,即两个

L2范数的点积:

1. RDDrdd = sc.parallelize([[1, "Delhi, Mumbai, Gandhinagar"],[2, " Delhi, Mandi"], [3, "Hyderbad, Jaipur"]])

documents = rdd.map(lambda l: l[1].replace(" ", "").split(","))

from pyspark.mllib.feature import HashingTF, IDF

hashingTF = HashingTF()

tf = hashingTF.transform(documents)

您可以在 HashingTF 中指定特征数以使特征矩阵更小(列数更少)。

tf.cache()

idf = IDF().fit(tf)

tfidf = idf.transform(tf)

from pyspark.mllib.feature import Normalizer

labels = rdd.map(lambda l: l[0])

features = tfidf

normalizer = Normalizer()

data = labels.zip(normalizer.transform(features))

通过将矩阵与自身相乘来计算余弦相似度:

from pyspark.mllib.linalg.distributed import IndexedRowMatrix

mat = IndexedRowMatrix(data).toBlockMatrix()

dot = mat.multiply(mat.transpose())

dot.toLocalMatrix().toArray()

array([[ 0. , 0. , 0. , 0. ],

[ 0. , 1. , 0.10794634, 0. ],

[ 0. , 0.10794634, 1. , 0. ],

[ 0. , 0. , 0. , 1. ]])

或者: 使用numpy数组的笛卡尔积和函数dot:

data.cartesian(data)\

.map(lambda l: ((l[0][0], l[1][0]), l[0][1].dot(l[1][1])))\

.sortByKey()\

.collect()

[((1, 1), 1.0),

((1, 2), 0.10794633570596117),

((1, 3), 0.0),

((2, 1), 0.10794633570596117),

((2, 2), 1.0),

((2, 3), 0.0),

((3, 1), 0.0),

((3, 2), 0.0),

((3, 3), 1.0)]

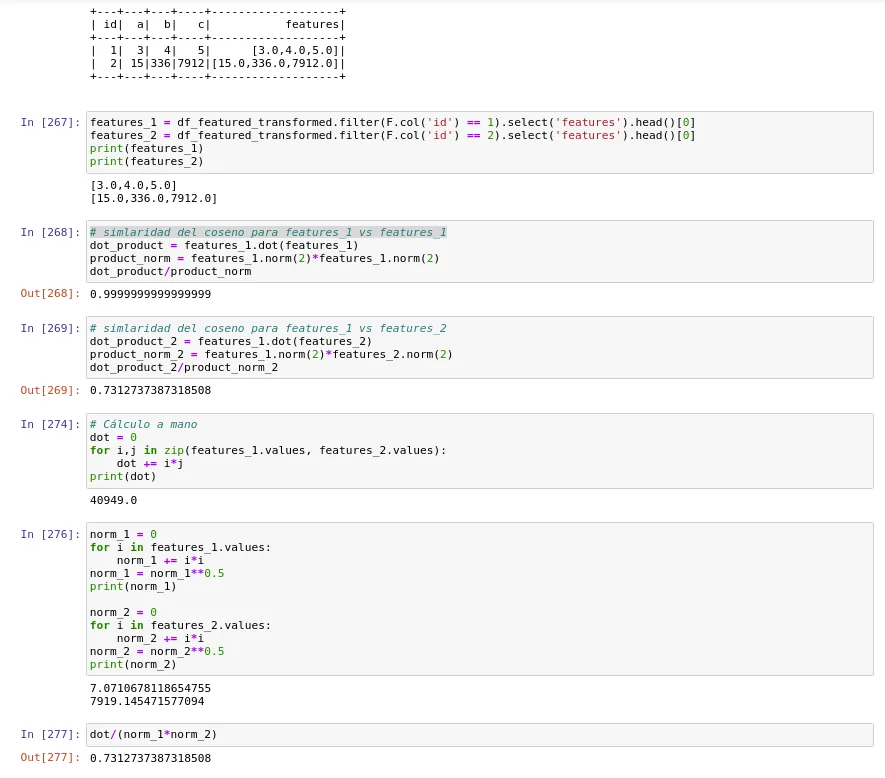

2. DataFrame

由于您似乎在使用数据框,因此可以使用spark ml包代替:

import pyspark.sql.functions as psf

df = rdd.toDF(["ID", "Office_Loc"])\

.withColumn("Office_Loc", psf.split(psf.regexp_replace("Office_Loc", " ", ""), ','))

from pyspark.ml.feature import HashingTF, IDF

hashingTF = HashingTF(inputCol="Office_Loc", outputCol="tf")

tf = hashingTF.transform(df)

idf = IDF(inputCol="tf", outputCol="feature").fit(tf)

tfidf = idf.transform(tf)

计算L2范数:

from pyspark.ml.feature import Normalizer

normalizer = Normalizer(inputCol="feature", outputCol="norm")

data = normalizer.transform(tfidf)

计算矩阵乘积:

from pyspark.mllib.linalg.distributed import IndexedRow, IndexedRowMatrix

mat = IndexedRowMatrix(

data.select("ID", "norm")\

.rdd.map(lambda row: IndexedRow(row.ID, row.norm.toArray()))).toBlockMatrix()

dot = mat.multiply(mat.transpose())

dot.toLocalMatrix().toArray()

或:使用连接和 UDF 函数 dot:

dot_udf = psf.udf(lambda x,y: float(x.dot(y)), DoubleType())

data.alias("i").join(data.alias("j"), psf.col("i.ID") < psf.col("j.ID"))\

.select(

psf.col("i.ID").alias("i"),

psf.col("j.ID").alias("j"),

dot_udf("i.norm", "j.norm").alias("dot"))\

.sort("i", "j")\

.show()

+---+---+-------------------+

| i| j| dot|

+---+---+-------------------+

| 1| 2|0.10794633570596117|

| 1| 3| 0.0|

| 2| 3| 0.0|

+---+---+-------------------+

本教程列举了不同的方法来对大规模矩阵进行乘法运算:https://labs.yodas.com/large-scale-matrix-multiplication-with-pyspark-or-how-to-match-two-large-datasets-of-company-1be4b1b2871e