In the documentation for Keras activations (`tf.keras.activations`) you can see:

```python
tf.keras.activations.sigmoid(x)
tf.keras.activations.softmax(x, axis=-1)
tf.keras.activations.relu(x, alpha=0.0, max_value=None, threshold=0.0)
```

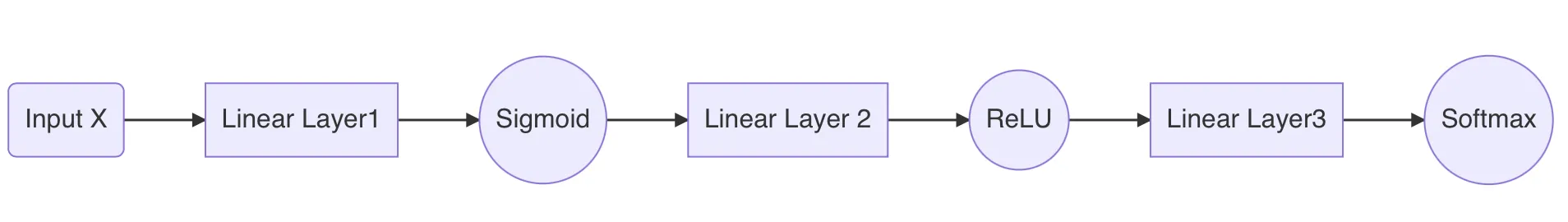
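To see what these three functions compute, here is a minimal pure-Python sketch that works on plain lists instead of tensors. It follows the formulas from the documentation (for `relu`: `max_value` caps the output, and values below `threshold` become `alpha * (x - threshold)`), but it is only an illustration, not the TensorFlow implementation. This `softmax` handles a single 1-D vector, which is the `axis=-1` case for one sample.

```python
import math


def sigmoid(xs):
    """Element-wise logistic function 1 / (1 + exp(-x)), written to avoid overflow."""
    out = []
    for x in xs:
        if x >= 0:
            out.append(1.0 / (1.0 + math.exp(-x)))
        else:
            z = math.exp(x)  # small for very negative x, so no overflow
            out.append(z / (1.0 + z))
    return out


def softmax(xs):
    """Softmax over a 1-D vector (the axis=-1 case for a single sample)."""
    m = max(xs)  # subtracting the max does not change the result but avoids overflow
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def relu(xs, alpha=0.0, max_value=None, threshold=0.0):
    """ReLU with the same three parameters as tf.keras.activations.relu."""
    out = []
    for x in xs:
        if max_value is not None and x >= max_value:
            out.append(max_value)                # capped at max_value
        elif x >= threshold:
            out.append(x)                        # passed through unchanged
        else:
            out.append(alpha * (x - threshold))  # "leaky" part below the threshold
    return out


print(sigmoid([0.0]))                     # [0.5]
print(softmax([1.0, 2.0, 3.0]))
print(relu([-2.0, 0.5, 3.0], alpha=0.1))  # [-0.2, 0.5, 3.0]
```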

So the code could be:

```python
import tensorflow as tf

model = tf.keras.Sequential(name='sequential_2')
model.add(tf.keras.layers.Dense(300, name='dense_5', input_shape=(784,)))
model.add(tf.keras.layers.Activation(tf.keras.activations.sigmoid, name='activation_5'))
model.add(tf.keras.layers.Dense(300, name='dense_6'))
model.add(tf.keras.layers.Activation(tf.keras.activations.relu, name='activation_6'))
model.add(tf.keras.layers.Dense(10, name='dense_7'))
model.add(tf.keras.layers.Activation(tf.keras.activations.softmax, name='activation_7'))

model.summary()
```

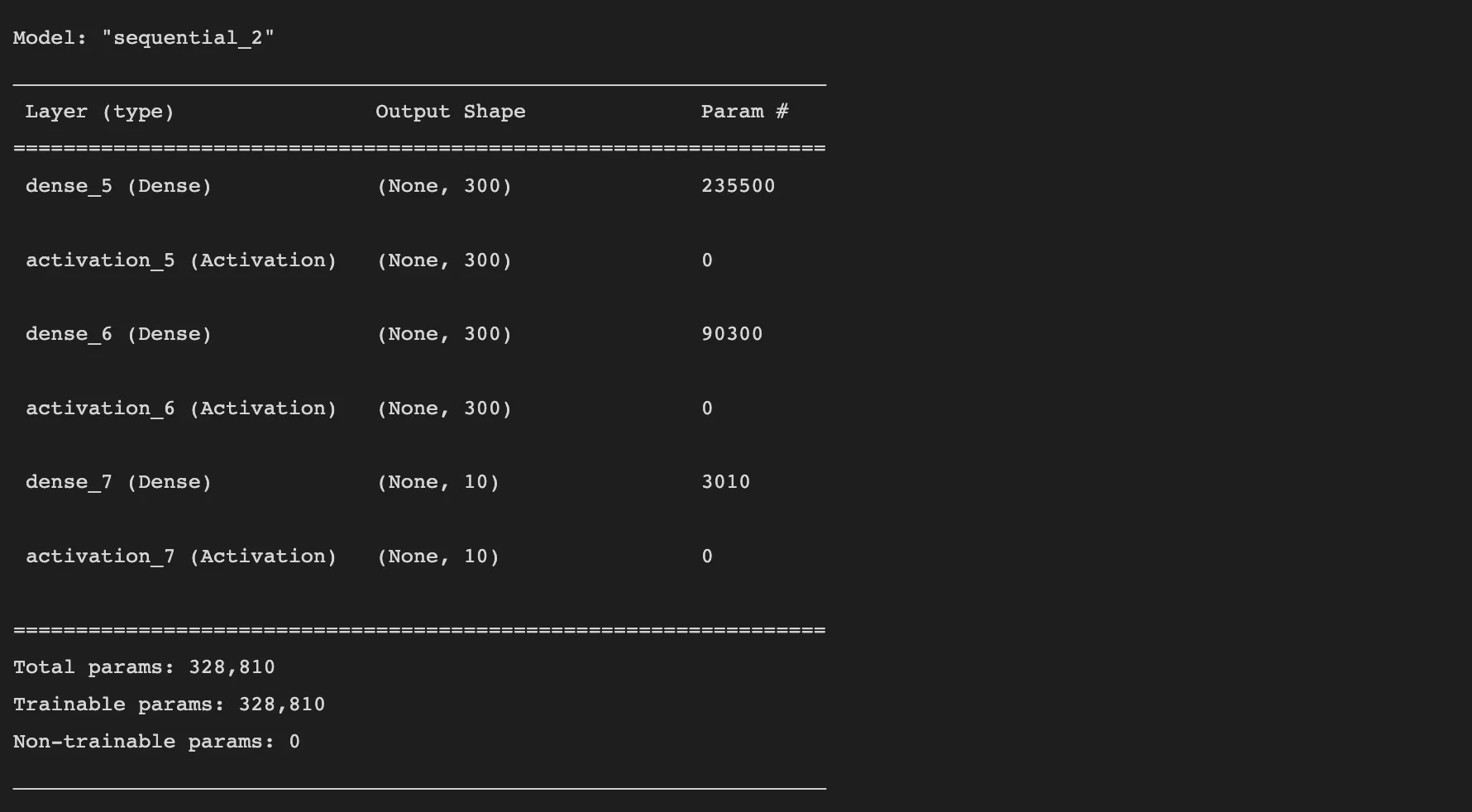

Result:

```
Model: "sequential_2"
_________________________________________________________________
 Layer (type)               Output Shape              Param #
=================================================================
 dense_5 (Dense)            (None, 300)               235500
 activation_5 (Activation)  (None, 300)               0
 dense_6 (Dense)            (None, 300)               90300
 activation_6 (Activation)  (None, 300)               0
 dense_7 (Dense)            (None, 10)                3010
 activation_7 (Activation)  (None, 10)                0
=================================================================
Total params: 328,810
Trainable params: 328,810
Non-trainable params: 0
_________________________________________________________________
```
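The `Param #` column is easy to check by hand: a `Dense` layer has one weight per input-unit pair plus one bias per unit, i.e. `inputs * units + units`, and `Activation` layers have no weights at all. A quick check in plain Python (`dense_params` is just a helper name for this sketch):

```python
def dense_params(n_inputs, units):
    """Weights (n_inputs * units) plus one bias per unit."""
    return n_inputs * units + units


# (inputs, units) of dense_5, dense_6 and dense_7
layers = [(784, 300), (300, 300), (300, 10)]
counts = [dense_params(n_in, units) for n_in, units in layers]

print(counts)       # [235500, 90300, 3010]
print(sum(counts))  # 328810  -> "Total params: 328,810"
```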

Using different imports, you can write less code and still get the same summary:

```python
from keras import Sequential
from keras.layers import Dense, Activation
from keras.activations import sigmoid, relu, softmax

model = Sequential(name='sequential_2')
model.add(Dense(300, name='dense_5', input_shape=(784,)))
model.add(Activation(sigmoid, name='activation_5'))
model.add(Dense(300, name='dense_6'))
model.add(Activation(relu, name='activation_6'))
model.add(Dense(10, name='dense_7'))
model.add(Activation(softmax, name='activation_7'))

model.summary()
```

But you can also get the same model with even less code, by passing the activation name directly to `Dense`:

```python
from keras import Sequential
from keras.layers import Dense

model = Sequential(name='sequential_2')
model.add(Dense(300, name='dense_5', activation='sigmoid', input_shape=(784,)))
model.add(Dense(300, name='dense_6', activation='relu'))
model.add(Dense(10, name='dense_7', activation='softmax'))

model.summary()
```
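Passing `activation='relu'` works because Keras resolves the string to the function (via `tf.keras.activations.get()`), and `activation=None` means a linear activation. The sketch below imitates that lookup with a small dictionary; the registry and `get_activation` are hypothetical simplifications, not the real Keras code:

```python
import math


def linear(x):
    return x


def relu(x):
    return max(x, 0.0)


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


# Hypothetical registry: a simplified stand-in for Keras's name-to-function lookup
_ACTIVATIONS = {"linear": linear, "relu": relu, "sigmoid": sigmoid}


def get_activation(identifier):
    """Resolve None, a string name, or a callable to an activation function."""
    if identifier is None:
        return linear                      # no activation means linear
    if isinstance(identifier, str):
        if identifier not in _ACTIVATIONS:
            raise ValueError(f"Unknown activation function: {identifier!r}")
        return _ACTIVATIONS[identifier]
    if callable(identifier):
        return identifier                  # a function passed directly
    raise TypeError(f"Could not interpret activation: {identifier!r}")


print(get_activation("relu")(-3.0))   # 0.0
print(get_activation(sigmoid)(0.0))   # 0.5
print(get_activation(None)(-3.0))     # -3.0
```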

With this version the summary is shorter as well, because there are no separate `Activation` layers:

```
Model: "sequential_2"
_________________________________________________________________
 Layer (type)               Output Shape              Param #
=================================================================
 dense_5 (Dense)            (None, 300)               235500
 dense_6 (Dense)            (None, 300)               90300
 dense_7 (Dense)            (None, 10)                3010
=================================================================
Total params: 328,810
Trainable params: 328,810
Non-trainable params: 0
_________________________________________________________________
```
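A `Dense` layer with `activation=...` computes `activation(x @ W + b)` inside the layer, so it gives the same values as a plain `Dense` followed by an `Activation` layer; the activation just doesn't show up as a separate row in the summary. A small pure-Python sketch with made-up weights (`dense` is a hypothetical helper) shows the equivalence:

```python
def dense(x, weights, bias, activation=None):
    """One Dense layer for a single sample: activation(x @ W + b).

    weights[i][j] connects input i to unit j (shape: inputs x units).
    """
    z = [
        sum(x[i] * weights[i][j] for i in range(len(x))) + bias[j]
        for j in range(len(bias))
    ]
    if activation is None:
        return z                     # linear output, like Dense(...) without activation
    return [activation(v) for v in z]


def relu(v):
    return max(v, 0.0)


# Made-up weights: 2 inputs, 3 units
x = [1.0, -2.0]
W = [[0.5, -1.0, 2.0],
     [1.0, 0.25, -0.5]]
b = [0.0, 0.5, -1.0]

fused = dense(x, W, b, activation=relu)       # like Dense(3, activation='relu')
separate = [relu(v) for v in dense(x, W, b)]  # like Dense(3) + Activation(relu)

print(fused)              # [0.0, 0.0, 2.0]
print(fused == separate)  # True
```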

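You can also omit `name=` to shorten the code further; Keras then generates the names automatically (`sequential`, `dense`, `dense_1`, ...).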

```python
from keras import Sequential
from keras.layers import Dense

model = Sequential()
model.add(Dense(300, activation='sigmoid', input_shape=(784,)))
model.add(Dense(300, activation='relu'))
model.add(Dense(10, activation='softmax'))

model.summary()
```
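The generated names come from per-name counters: the first `Dense` is `dense`, the next `dense_1`, and so on. Within one Python session the counters keep growing across models (which is how names like `dense_5` may appear) until `tf.keras.backend.clear_session()` resets them. A hypothetical sketch of that naming scheme (the `NameGenerator` class is not part of Keras):

```python
from collections import defaultdict


class NameGenerator:
    """Hypothetical stand-in for Keras's per-session layer-name counters."""

    def __init__(self):
        self._counts = defaultdict(int)

    def unique_name(self, base):
        n = self._counts[base]
        self._counts[base] += 1
        return base if n == 0 else f"{base}_{n}"

    def clear(self):
        """Roughly the naming part of what tf.keras.backend.clear_session() resets."""
        self._counts.clear()


names = NameGenerator()
print(names.unique_name("sequential"))                 # sequential
print([names.unique_name("dense") for _ in range(3)])  # ['dense', 'dense_1', 'dense_2']
print(names.unique_name("dense"))                      # dense_3 (counter keeps growing)
names.clear()
print(names.unique_name("dense"))                      # dense
```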

BTW:

If you want to use MNIST with images of shape (28,28), you can add a `Flatten` layer, which automatically converts shape (28,28) to shape (784,).

```python
from keras import Sequential
from keras.layers import Dense, Flatten

model = Sequential()
model.add(Flatten(input_shape=(28,28)))
model.add(Dense(300, activation='sigmoid'))
model.add(Dense(300, activation='relu'))
model.add(Dense(10, activation='softmax'))

model.summary()
```

Result:

```
Model: "sequential"
_________________________________________________________________
 Layer (type)               Output Shape              Param #
=================================================================
 flatten (Flatten)          (None, 784)               0
 dense (Dense)              (None, 300)               235500
 dense_1 (Dense)            (None, 300)               90300
 dense_2 (Dense)            (None, 10)                3010
=================================================================
Total params: 328,810
Trainable params: 328,810
Non-trainable params: 0
_________________________________________________________________
```
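`Flatten` lays the 28 rows of pixels one after another, in the same row-major order that `x_train[0].flatten()` uses, so pixel `(row, col)` ends up at index `row * 28 + col`. A minimal plain-list sketch (the `flatten` helper is only for illustration):

```python
def flatten(image):
    """Row-major flatten: row 0 first, then row 1, ... (like NumPy's default flatten())."""
    return [pixel for row in image for pixel in row]


# A fake 28x28 "image" whose pixel value is its own row-major position
image = [[row * 28 + col for col in range(28)] for row in range(28)]
flat = flatten(image)

print(len(flat))                        # 784
print(flat[3 * 28 + 5] == image[3][5])  # True
```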

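If you call `summary()` only after `fit()`, you can even skip `input_shape=(28,28)` in `Flatten()`, because `fit()` builds the model from the shape of the training data. Calling `summary()` on a model that has not been built yet raises an error.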
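The reason is that a layer's weights can only be created once the size of its input is known, so Keras builds the model lazily. A hypothetical `LazyDense` class (not a Keras class, just an illustration of the idea) shows that the parameter count is unknown until the first call with real data:

```python
class LazyDense:
    """Hypothetical sketch (not a Keras class) of a layer that builds its weights lazily."""

    def __init__(self, units):
        self.units = units
        self.weights = None  # unknown until the input size is known
        self.bias = None

    def build(self, n_inputs):
        self.weights = [[0.0] * self.units for _ in range(n_inputs)]
        self.bias = [0.0] * self.units

    def count_params(self):
        if self.weights is None:
            raise ValueError("Layer is not built yet: input size unknown.")
        return len(self.weights) * self.units + len(self.bias)

    def __call__(self, x):
        if self.weights is None:
            self.build(len(x))  # first call: infer the input size from the data
        return [
            sum(x[i] * self.weights[i][j] for i in range(len(x))) + self.bias[j]
            for j in range(self.units)
        ]


layer = LazyDense(300)
try:
    layer.count_params()
except ValueError as err:
    print(err)               # Layer is not built yet: input size unknown.

layer([0.0] * 784)           # one flattened 28x28 image
print(layer.count_params())  # 235500
```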

EDIT:

Full working code.

Tested with TensorFlow 2.8.0, Python 3.10, Linux Mint 21.0 (based on Ubuntu 22.04).

```python
import tensorflow as tf
from keras import Sequential
from keras.layers import Dense, Flatten
from keras.utils import to_categorical  # public path; keras.utils.np_utils is not available in newer Keras versions
from keras.datasets import mnist
import numpy as np

print('\n--- version ---\n')

print('tensorflow:', tf.__version__)

print('\n--- data ---\n')

(x_train, y_train), (x_test, y_test) = mnist.load_data()

print('train.shape :', x_train.shape)
print('test.shape :', x_test.shape)
print('image.shape :', x_train[0].shape)
print('image.flatten:', x_train[0].flatten().shape)

# one-hot encode the labels for categorical_crossentropy
y_train = to_categorical(y_train)
y_test = to_categorical(y_test)

print('\n--- model ---\n')

model = Sequential()
model.add(Flatten())  # no input_shape: fit() builds the model
model.add(Dense(300, activation='sigmoid'))
model.add(Dense(300, activation='relu'))
model.add(Dense(10, activation='softmax'))

model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

print('\n--- fit/train ---\n')

batch_size = 20
epochs = 5

model.fit(x_train, y_train, batch_size=batch_size, epochs=epochs, verbose=True, validation_data=(x_test, y_test))

print('\n--- evaluate/test ---\n')

score = model.evaluate(x_test, y_test, verbose=True)
print(score)

print('\n--- predict ---\n')

items = x_test[0].reshape(1, 28, 28)  # a batch containing one image
print('items.shape:', items.shape)

results = model.predict(items)

for item in results:
    print('predict:', item)
    print('max :', np.max(item))
    print('argmax :', np.argmax(item))

print('\n--- summary ---\n')

model.summary()
```
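The number of steps per epoch that Keras reports follows from the batch sizes: `fit()` gets `batch_size = 20`, and `evaluate()` is called without `batch_size`, so Keras uses its default of 32. A final, smaller batch still counts as one step, hence the rounding up (`steps_per_epoch` is just a helper name for this check):

```python
import math


def steps_per_epoch(n_samples, batch_size):
    """Number of batches; a final, smaller batch still counts as one step."""
    return math.ceil(n_samples / batch_size)


print(steps_per_epoch(60000, 20))  # 3000 -> fit() with batch_size=20
print(steps_per_epoch(10000, 32))  # 313  -> evaluate() with the default batch_size=32
```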

Result:

```
--- version ---

tensorflow: 2.8.0

--- data ---

train.shape : (60000, 28, 28)
test.shape : (10000, 28, 28)
image.shape : (28, 28)
image.flatten: (784,)

--- model ---


--- fit/train ---

Epoch 1/5
3000/3000 [==============================] - 26s 8ms/step - loss: 0.4459 - accuracy: 0.8600 - val_loss: 0.3425 - val_accuracy: 0.8907
Epoch 2/5
3000/3000 [==============================] - 31s 10ms/step - loss: 0.3133 - accuracy: 0.9011 - val_loss: 0.2753 - val_accuracy: 0.9128
Epoch 3/5
3000/3000 [==============================] - 22s 7ms/step - loss: 0.2702 - accuracy: 0.9153 - val_loss: 0.2291 - val_accuracy: 0.9271
Epoch 4/5
3000/3000 [==============================] - 22s 7ms/step - loss: 0.2482 - accuracy: 0.9218 - val_loss: 0.2142 - val_accuracy: 0.9346
Epoch 5/5
3000/3000 [==============================] - 21s 7ms/step - loss: 0.2246 - accuracy: 0.9292 - val_loss: 0.2061 - val_accuracy: 0.9362

--- evaluate/test ---

313/313 [==============================] - 2s 6ms/step - loss: 0.2061 - accuracy: 0.9362
[0.20612983405590057, 0.9362000226974487]

--- predict ---

items.shape: (1, 28, 28)
predict: [2.2065036e-08 3.6986236e-09 2.2580415e-04 2.4656517e-06 6.2174437e-12
 6.3476205e-07 1.2726037e-15 9.9974352e-01 1.7498225e-07 2.7370381e-05]
max : 0.9997435
argmax : 7

--- summary ---

Model: "sequential"
_________________________________________________________________
 Layer (type)               Output Shape              Param #
=================================================================
 flatten (Flatten)          (None, 784)               0
 dense (Dense)              (None, 300)               235500
 dense_1 (Dense)            (None, 300)               90300
 dense_2 (Dense)            (None, 10)                3010
=================================================================
Total params: 328,810
Trainable params: 328,810
Non-trainable params: 0
_________________________________________________________________
```
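`to_categorical()` one-hot encodes the labels for training, and `np.argmax()` turns a predicted probability vector back into a digit. A minimal pure-Python sketch of both directions; `to_one_hot` and `argmax` are hypothetical stand-ins, not the Keras/NumPy functions, and the last lines reuse the probabilities printed by `model.predict()` in the output above:

```python
def to_one_hot(labels, num_classes=None):
    """Hypothetical helper mimicking to_categorical(): one row per label."""
    if num_classes is None:
        num_classes = max(labels) + 1  # same default rule as to_categorical()
    return [[1.0 if j == y else 0.0 for j in range(num_classes)] for y in labels]


def argmax(values):
    """Index of the largest value (the first one on ties), like np.argmax."""
    return max(range(len(values)), key=values.__getitem__)


labels = [7, 2, 1]
one_hot = to_one_hot(labels, num_classes=10)
print(one_hot[0])                        # [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0]
print([argmax(row) for row in one_hot])  # [7, 2, 1]

# Probabilities printed by model.predict() in the output above
predicted = [2.2065036e-08, 3.6986236e-09, 2.2580415e-04, 2.4656517e-06, 6.2174437e-12,
             6.3476205e-07, 1.2726037e-15, 9.9974352e-01, 1.7498225e-07, 2.7370381e-05]
print(argmax(predicted))                 # 7
```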

tf.keras.activations.sigmoid(x), tf.keras.activations.softmax(x, axis=-1), tf.keras.activations.relu(x, alpha=0.0, max_value=None, threshold=0.0). - furas

In the activations documentation you can also see Dense(..., activation='softmax'), Dense(..., activation='relu'), etc. - furas

What is the size of digits? In the first layer you should use input_shape=(digit_size,). - furas