This is my first post on Stack, so apologies in advance for any clumsiness. If you can, please let me know how I could improve my question.

► 我想要实现的目标(长期目标):

我想使用OpenCV for Unity和激光指针来操作我的Unity3d演示。

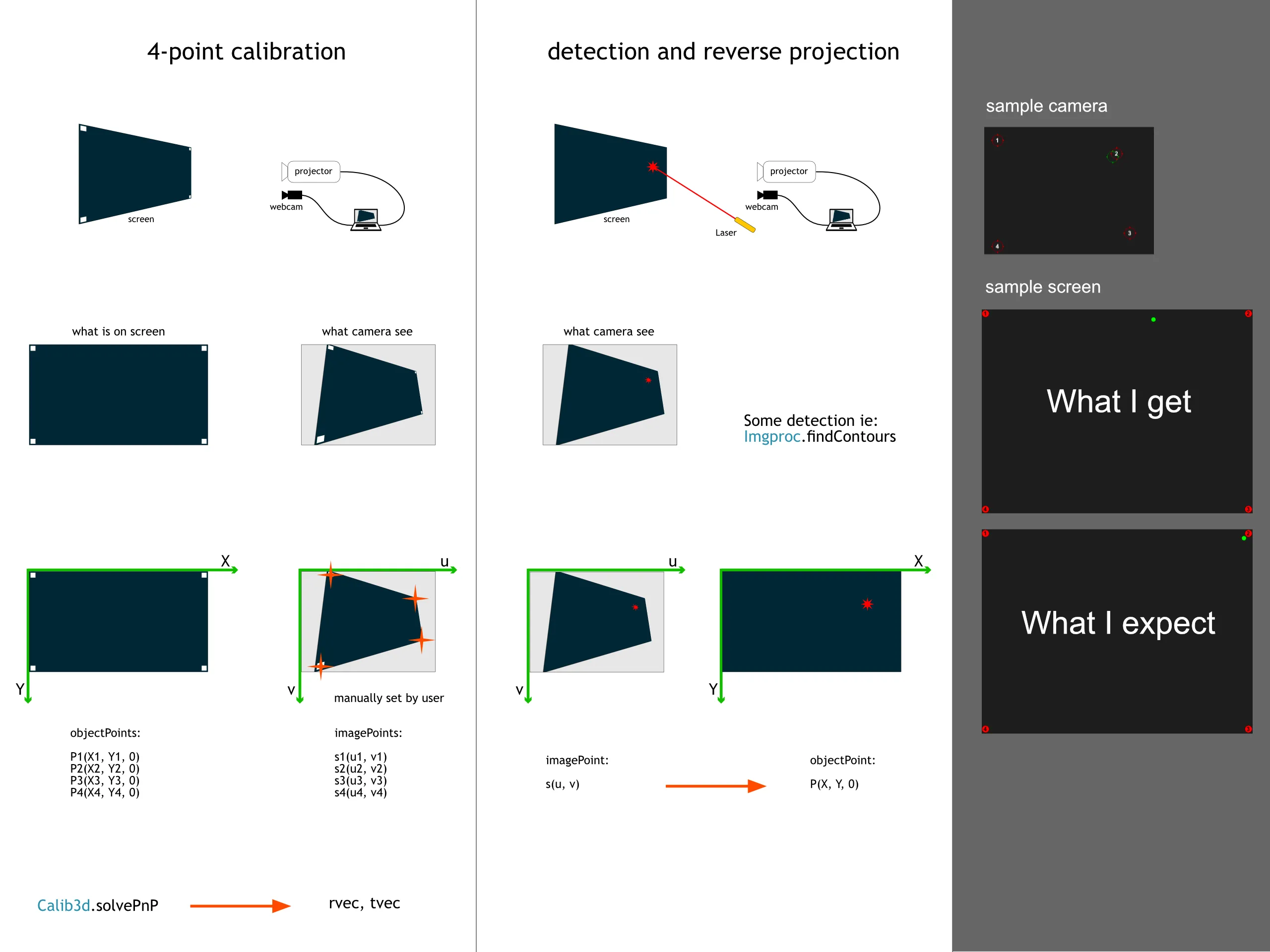

我相信一张图片胜过千言万语,所以这应该说明问题:

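► What the problem is:

I am trying to do a simple four-point calibration (projection) from the camera view (some kind of trapezoid) into flat plane space.

I thought this would be very basic and easy, but I have no experience with OpenCV and I can't get it to work.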
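
To make clear what I mean by the four-point projection, here is a minimal sketch of the mapping I am after, written as a plain homography solve with no OpenCV or Unity calls. `FourPointHomography`, `Compute` and `Map` are hypothetical names made up for this illustration, not part of OpenCV for Unity or of my project. The sketch assumes the last homography entry can be fixed to 1, which I believe holds for an ordinary camera view of a screen but is not true in general.

```csharp
using System;

// Hypothetical helper for illustration only.
public static class FourPointHomography
{
    // src and dst are 4x2 arrays of (x, y) pairs in matching order.
    // Returns h[0..8] (with h[8] fixed to 1) such that
    //   u = (h0*x + h1*y + h2) / (h6*x + h7*y + 1)
    //   v = (h3*x + h4*y + h5) / (h6*x + h7*y + 1)
    public static double[] Compute(double[,] src, double[,] dst)
    {
        var a = new double[8, 9]; // augmented matrix [A | b]
        for (int i = 0; i < 4; i++)
        {
            double x = src[i, 0], y = src[i, 1];
            double u = dst[i, 0], v = dst[i, 1];
            int r = 2 * i;
            // Row for u: h0*x + h1*y + h2 - u*x*h6 - u*y*h7 = u
            a[r, 0] = x; a[r, 1] = y; a[r, 2] = 1;
            a[r, 6] = -u * x; a[r, 7] = -u * y; a[r, 8] = u;
            // Row for v: h3*x + h4*y + h5 - v*x*h6 - v*y*h7 = v
            a[r + 1, 3] = x; a[r + 1, 4] = y; a[r + 1, 5] = 1;
            a[r + 1, 6] = -v * x; a[r + 1, 7] = -v * y; a[r + 1, 8] = v;
        }

        // Gauss-Jordan elimination with partial pivoting.
        for (int col = 0; col < 8; col++)
        {
            int pivot = col;
            for (int row = col + 1; row < 8; row++)
                if (Math.Abs(a[row, col]) > Math.Abs(a[pivot, col])) pivot = row;
            if (Math.Abs(a[pivot, col]) < 1e-12)
                throw new ArgumentException("Degenerate point configuration.");
            for (int k = 0; k < 9; k++)
            {
                double tmp = a[col, k]; a[col, k] = a[pivot, k]; a[pivot, k] = tmp;
            }
            for (int row = 0; row < 8; row++)
            {
                if (row == col) continue;
                double f = a[row, col] / a[col, col];
                for (int k = col; k < 9; k++) a[row, k] -= f * a[col, k];
            }
        }

        var h = new double[9];
        for (int i = 0; i < 8; i++) h[i] = a[i, 8] / a[i, i];
        h[8] = 1.0;
        return h;
    }

    // Applies the homography to one point, including the perspective divide.
    public static double[] Map(double[] h, double x, double y)
    {
        double w = h[6] * x + h[7] * y + h[8];
        return new[]
        {
            (h[0] * x + h[1] * y + h[2]) / w,
            (h[3] * x + h[4] * y + h[5]) / w
        };
    }
}
```

The intended use would be to pass the four trapezoid corners from the camera image as `src` and the corners of the flat target rectangle (for example 0,0 to 1024,768) as `dst`, then call `Map` with the detected laser position. By construction each `src` corner maps back onto its `dst` corner, up to rounding. I assume OpenCV has an equivalent built in, but I could not work out which calls to use.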

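► Example:

I made a simpler example without any laser detection or other extras. It is just a four-point trapezoid that I am trying to reproject into flat plane space.

Link to the whole example project: https://1drv.ms/u/s!AiDsGecSyzmuujXGQUapcYrIvP7b

The core script from my example: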

    using OpenCVForUnity;
    using System.Collections;
    using System.Collections.Generic;
    using UnityEngine;
    using UnityEngine.UI;
    using System;

    public class TestCalib : MonoBehaviour
    {
        public RawImage displayDummy;
        public RectTransform[] handlers;
        public RectTransform dummyCross;
        public RectTransform dummyResult;

        public Vector2 webcamSize = new Vector2(640, 480);
        public Vector2 objectSize = new Vector2(1024, 768);

        private Texture2D texture;

        Mat cameraMatrix;
        MatOfDouble distCoeffs;
        MatOfPoint3f objectPoints;
        MatOfPoint2f imagePoints;
        Mat rvec;
        Mat tvec;
        Mat rotationMatrix;
        Mat imgMat;

        void Start()
        {
            texture = new Texture2D((int)webcamSize.x, (int)webcamSize.y, TextureFormat.RGB24, false);
            if (displayDummy) displayDummy.texture = texture;
            imgMat = new Mat(texture.height, texture.width, CvType.CV_8UC3);
        }

        void Update()
        {
            imgMat = new Mat(texture.height, texture.width, CvType.CV_8UC3);
            Test();
            DrawImagePoints();
            Utils.matToTexture2D(imgMat, texture);
        }

        void DrawImagePoints()
        {
            Point[] pointsArray = imagePoints.toArray();
            for (int i = 0; i < pointsArray.Length; i++)
            {
                Point p0 = pointsArray[i];
                int j = (i < pointsArray.Length - 1) ? i + 1 : 0;
                Point p1 = pointsArray[j];
                Imgproc.circle(imgMat, p0, 5, new Scalar(0, 255, 0, 150), 1);
                Imgproc.line(imgMat, p0, p1, new Scalar(255, 255, 0, 150), 1);
            }
        }

        private void DrawResults(MatOfPoint2f resultPoints)
        {
            Point[] pointsArray = resultPoints.toArray();
            for (int i = 0; i < pointsArray.Length; i++)
            {
                Point p = pointsArray[i];
                Imgproc.circle(imgMat, p, 5, new Scalar(255, 155, 0, 150), 1);
            }
        }

        public void Test()
        {
            float w2 = objectSize.x / 2F;
            float h2 = objectSize.y / 2F;

            /*
            objectPoints = new MatOfPoint3f(
                new Point3(-w2, -h2, 0),
                new Point3(w2, -h2, 0),
                new Point3(-w2, h2, 0),
                new Point3(w2, h2, 0)
            );
            */

            objectPoints = new MatOfPoint3f(
                new Point3(0, 0, 0),
                new Point3(objectSize.x, 0, 0),
                new Point3(objectSize.x, objectSize.y, 0),
                new Point3(0, objectSize.y, 0)
            );

            imagePoints = GetImagePointsFromHandlers();

            rvec = new Mat(1, 3, CvType.CV_64FC1);
            tvec = new Mat(1, 3, CvType.CV_64FC1);
            rotationMatrix = new Mat(3, 3, CvType.CV_64FC1);

            double fx = webcamSize.x / objectSize.x;
            double fy = webcamSize.y / objectSize.y;
            double cx = 0; // webcamSize.x / 2.0f;
            double cy = 0; // webcamSize.y / 2.0f;

            cameraMatrix = new Mat(3, 3, CvType.CV_64FC1);
            cameraMatrix.put(0, 0, fx);
            cameraMatrix.put(0, 1, 0);
            cameraMatrix.put(0, 2, cx);
            cameraMatrix.put(1, 0, 0);
            cameraMatrix.put(1, 1, fy);
            cameraMatrix.put(1, 2, cy);
            cameraMatrix.put(2, 0, 0);
            cameraMatrix.put(2, 1, 0);
            cameraMatrix.put(2, 2, 1.0f);

            distCoeffs = new MatOfDouble(0, 0, 0, 0);

            Calib3d.solvePnP(objectPoints, imagePoints, cameraMatrix, distCoeffs, rvec, tvec);

            Mat uv = new Mat(3, 1, CvType.CV_64FC1);
            uv.put(0, 0, dummyCross.anchoredPosition.x);
            uv.put(1, 0, dummyCross.anchoredPosition.y);
            uv.put(2, 0, 0);

            Calib3d.Rodrigues(rvec, rotationMatrix);

            Mat P = rotationMatrix.inv() * (cameraMatrix.inv() * uv - tvec);
            Vector2 v = new Vector2((float)P.get(0, 0)[0], (float)P.get(1, 0)[0]);
            dummyResult.anchoredPosition = v;
        }

        private MatOfPoint2f GetImagePointsFromHandlers()
        {
            MatOfPoint2f m = new MatOfPoint2f();
            List<Point> points = new List<Point>();
            foreach (RectTransform handler in handlers)
            {
                Point p = new Point(handler.anchoredPosition.x, handler.anchoredPosition.y);
                points.Add(p);
            }
            m.fromList(points);
            return m;
        }
    }

Thanks in advance for any help.