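The problem is that on some Intel graphics hardware the VideoProcessor only supports conversion from DXGI_FORMAT_B8G8R8A8_UNORM to YUY2, not to NV12; I confirmed this with GetVideoProcessorOutputFormats. Although VideoProcessorBlt completed without any errors, the frames in the output video are slightly pixelated, which is noticeable only on close inspection.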

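My guess is that the VideoProcessor simply fell back to the next supported output format (YUY2), and that I unknowingly fed this to the encoder, which assumes its input is NV12 as configured. I assume there were no failures or major frame corruption because, as far as I can tell, NV12 and YUY2 differ only slightly, for example in byte order and subsampling. Also, I do not see the pixelation problem on hardware that supports NV12 conversion.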
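For reference, the two layouts can be compared with a little arithmetic. The sketch below assumes tightly packed frames with even dimensions and no row padding; the function names are hypothetical and only illustrate where each sample lives. NV12 is planar 4:2:0 (a full Y plane followed by one interleaved UV plane), while YUY2 is packed 4:2:2 (Y0 U Y1 V per pair of pixels).

```cpp
#include <cstddef>

// Bytes in one tightly packed frame (no row padding); w and h are assumed even.
constexpr std::size_t nv12FrameBytes(std::size_t w, std::size_t h) {
    return w * h + (w / 2) * (h / 2) * 2;   // Y plane + interleaved UV plane
}
constexpr std::size_t yuy2FrameBytes(std::size_t w, std::size_t h) {
    return w * h * 2;                       // 4 bytes per 2 pixels
}

// Byte offset of the luma sample of pixel (x, y).
constexpr std::size_t nv12LumaOffset(std::size_t w, std::size_t x, std::size_t y) {
    return y * w + x;
}
constexpr std::size_t yuy2LumaOffset(std::size_t w, std::size_t x, std::size_t y) {
    return y * w * 2 + x * 2;
}

// Byte offset of the U sample that covers pixel (x, y).
// In NV12 the matching V sample follows at +1; in YUY2 it follows at +2.
constexpr std::size_t nv12ChromaUOffset(std::size_t w, std::size_t h,
                                        std::size_t x, std::size_t y) {
    return w * h + (y / 2) * w + (x / 2) * 2;
}
constexpr std::size_t yuy2ChromaUOffset(std::size_t w, std::size_t x, std::size_t y) {
    return y * w * 2 + (x / 2) * 4 + 1;
}

static_assert(nv12FrameBytes(1920, 1080) == 3110400, "12 bits per pixel");
static_assert(yuy2FrameBytes(1920, 1080) == 4147200, "16 bits per pixel");
```

With these offsets, a consumer that expects NV12 but receives YUY2 bytes would read luma and chroma from the wrong positions, so the two formats are not interchangeable at the byte level.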

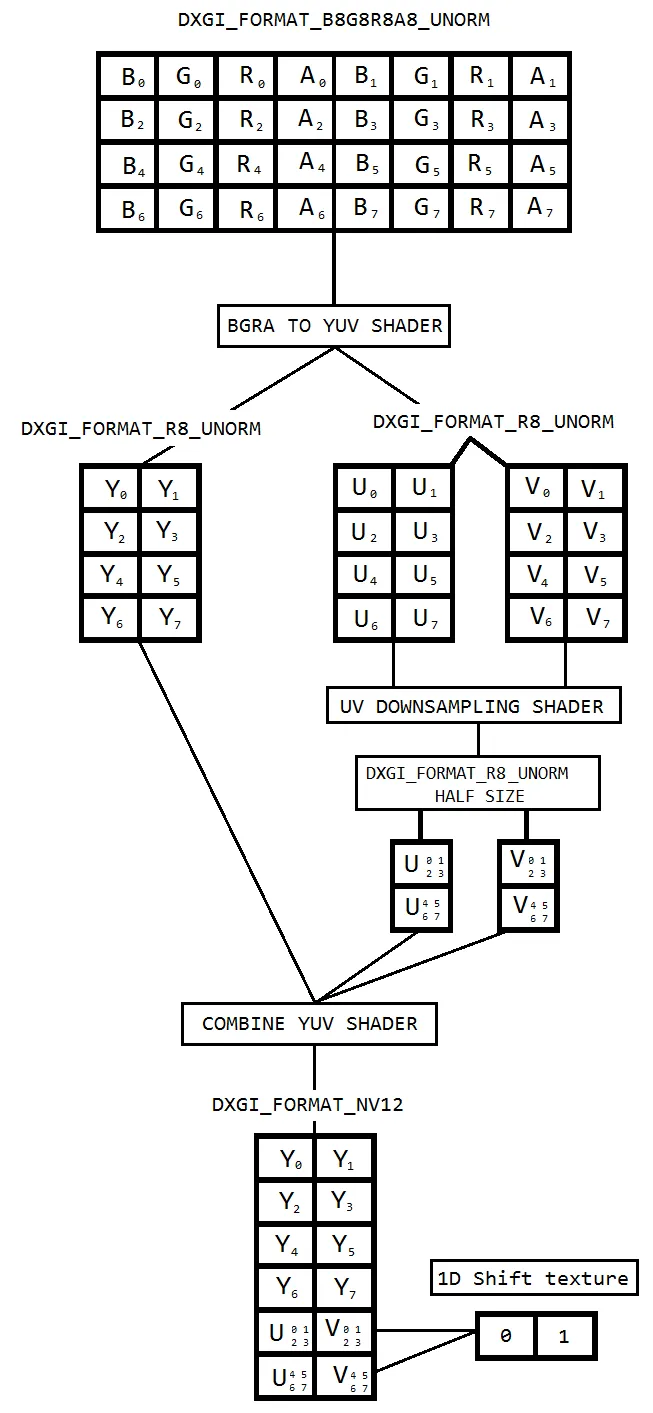

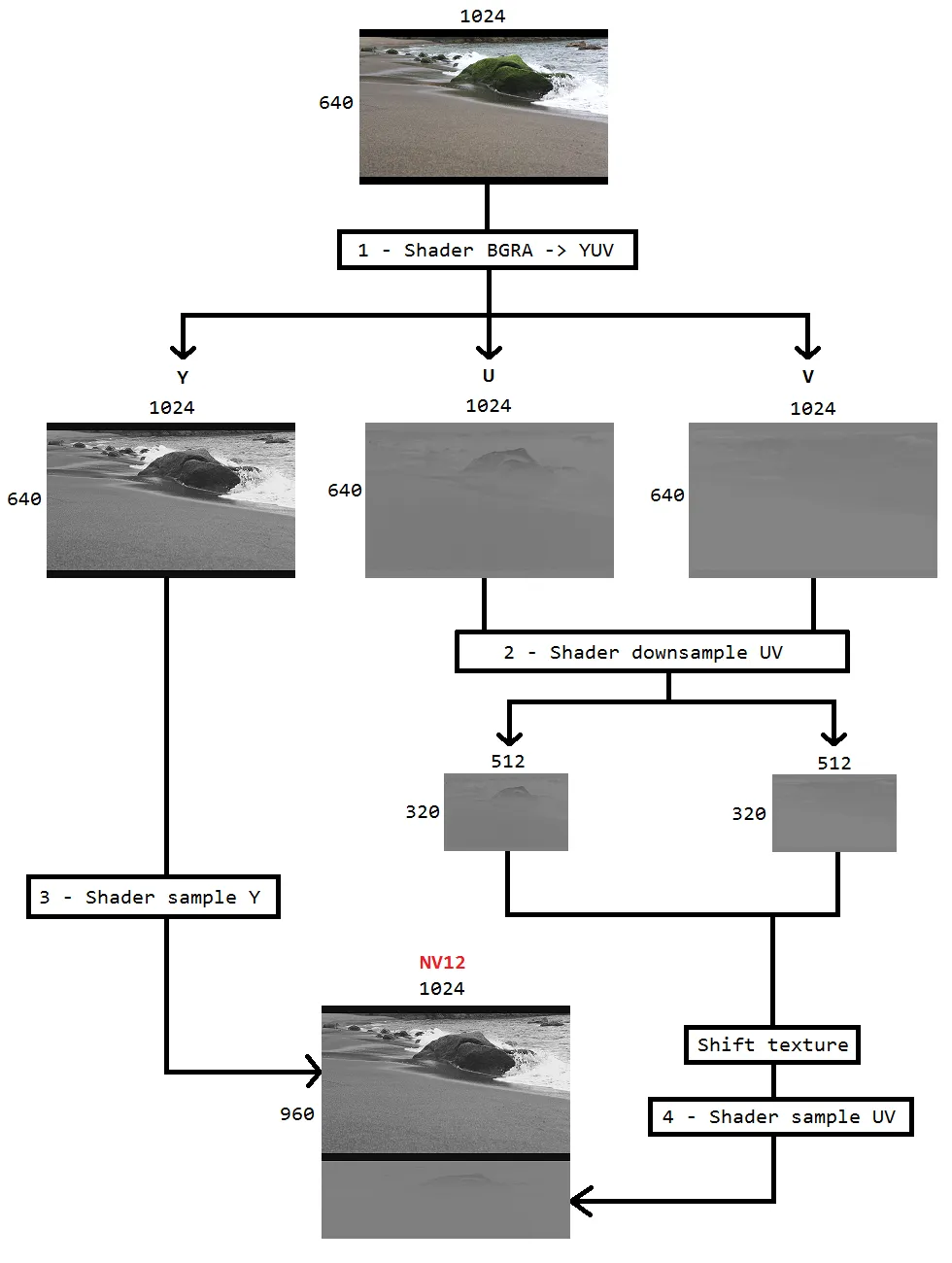

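So I decided to do the color conversion with pixel shaders, based on this code (https://github.com/bavulapati/DXGICaptureDXColorSpaceConversionIntelEncode/blob/master/DXGICaptureDXColorSpaceConversionIntelEncode/DuplicationManager.cpp). I was able to get the pixel shaders working, and I have uploaded my code (https://codeshare.io/5PJjxP) for reference (simplified as much as possible).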

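Now I am left with two channels, chroma and luma, each in its own ID3D11Texture2D texture. I am really confused about how to efficiently pack these two separate channels into a single ID3D11Texture2D texture that I can feed to the encoder. Is there a way to efficiently pack the Y and UV channels into a single ID3D11Texture2D on the GPU? CPU-based approaches are expensive and do not give the best frame rates; in fact, I am reluctant even to copy the textures to the CPU. I am looking for a way to do this on the GPU, without any back-and-forth copies between the CPU and the GPU.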

I have been researching this for quite some time without making any progress; any help would be appreciated.

/**

* This method is incomplete. It's just a template of what I want to achieve.

*/

HRESULT CreateNV12TextureFromLumaAndChromaSurface(ID3D11Texture2D** pOutputTexture)

{

HRESULT hr = S_OK;

try

{

//Copying from GPU to CPU. Bad :(

m_pD3D11DeviceContext->CopyResource(m_CPUAccessibleLuminanceSurf, m_LuminanceSurf);

D3D11_MAPPED_SUBRESOURCE resource;

UINT subresource = D3D11CalcSubresource(0, 0, 0);

// Assign to the outer hr; redeclaring it here would shadow the value that is returned
hr = m_pD3D11DeviceContext->Map(m_CPUAccessibleLuminanceSurf, subresource, D3D11_MAP_READ, 0, &resource);

BYTE* sptr = reinterpret_cast<BYTE*>(resource.pData);

BYTE* dptrY = nullptr; // point to the address of Y channel in output surface

//Store Image Pitch

int m_ImagePitch = resource.RowPitch;

int height = GetImageHeight();

int width = GetImageWidth();

for (int i = 0; i < height; i++)

{

memcpy_s(dptrY, m_ImagePitch, sptr, m_ImagePitch);

sptr += m_ImagePitch;

dptrY += m_ImagePitch;

}

m_pD3D11DeviceContext->Unmap(m_CPUAccessibleLuminanceSurf, subresource);

//Copying from GPU to CPU. Bad :(

m_pD3D11DeviceContext->CopyResource(m_CPUAccessibleChrominanceSurf, m_ChrominanceSurf);

hr = m_pD3D11DeviceContext->Map(m_CPUAccessibleChrominanceSurf, subresource, D3D11_MAP_READ, 0, &resource);

sptr = reinterpret_cast<BYTE*>(resource.pData);

BYTE* dptrUV = nullptr; // point to the address of UV channel in output surface

m_ImagePitch = resource.RowPitch;

height /= 2;

width /= 2;

for (int i = 0; i < height; i++)

{

memcpy_s(dptrUV, m_ImagePitch, sptr, m_ImagePitch);

sptr += m_ImagePitch;

dptrUV += m_ImagePitch;

}

m_pD3D11DeviceContext->Unmap(m_CPUAccessibleChrominanceSurf, subresource);

}

catch(HRESULT){}

return hr;

}
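To make the intended result of the template above concrete, here is a minimal CPU-only sketch of the packing step. It is only a reference for the target layout, not the GPU solution I am looking for. The function name `packNv12` is hypothetical, and the destination is assumed to be a tightly packed buffer. Unlike the template, each row copies only the visible `width` bytes and advances source and destination by their own strides, since a mapped `RowPitch` can be larger than the row width.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Packs a separate Y plane and an interleaved UV plane into one tightly
// packed NV12 buffer. yPitch and uvPitch are the source row strides in bytes
// (comparable to D3D11_MAPPED_SUBRESOURCE::RowPitch) and may exceed the
// visible row width.
std::vector<std::uint8_t> packNv12(const std::uint8_t* yPlane, std::size_t yPitch,
                                   const std::uint8_t* uvPlane, std::size_t uvPitch,
                                   std::size_t width, std::size_t height)
{
    assert(width % 2 == 0 && height % 2 == 0);
    assert(yPitch >= width && uvPitch >= width);

    std::vector<std::uint8_t> nv12(width * height * 3 / 2);
    std::uint8_t* dst = nv12.data();

    // Y plane: height rows of width bytes.
    for (std::size_t row = 0; row < height; ++row) {
        std::memcpy(dst, yPlane + row * yPitch, width);
        dst += width;
    }
    // UV plane: height / 2 rows of (width / 2) U,V pairs, i.e. width bytes per row.
    for (std::size_t row = 0; row < height / 2; ++row) {
        std::memcpy(dst, uvPlane + row * uvPitch, width);
        dst += width;
    }
    return nv12;
}
```

For a 4x2 frame this yields 12 bytes: 8 bytes of Y followed by one 4-byte row of interleaved U and V.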

Drawing NV12:

//

// Draw frame for NV12 texture

//

HRESULT DrawNV12Frame(ID3D11Texture2D* inputTexture)

{

HRESULT hr;

// Create the intermediate surfaces and render target views on first use

if (!m_bIntialized)

{

HRESULT Ret = InitializeNV12Surfaces(inputTexture);

if (!SUCCEEDED(Ret))

{

return Ret;

}

m_bIntialized = true;

}

m_pD3D11DeviceContext->CopyResource(m_ShaderResourceSurf, inputTexture);

D3D11_TEXTURE2D_DESC FrameDesc;

m_ShaderResourceSurf->GetDesc(&FrameDesc);

D3D11_SHADER_RESOURCE_VIEW_DESC ShaderDesc;

ShaderDesc.Format = FrameDesc.Format;

ShaderDesc.ViewDimension = D3D11_SRV_DIMENSION_TEXTURE2D;

ShaderDesc.Texture2D.MostDetailedMip = FrameDesc.MipLevels - 1;

ShaderDesc.Texture2D.MipLevels = FrameDesc.MipLevels;

// Create new shader resource view

ID3D11ShaderResourceView* ShaderResource = nullptr;

hr = m_pD3D11Device->CreateShaderResourceView(m_ShaderResourceSurf, &ShaderDesc, &ShaderResource);

IF_FAILED_THROW(hr);

m_pD3D11DeviceContext->PSSetShaderResources(0, 1, &ShaderResource);

// Set resources

m_pD3D11DeviceContext->OMSetRenderTargets(1, &m_pLumaRT, nullptr);

m_pD3D11DeviceContext->PSSetShader(m_pPixelShaderLuma, nullptr, 0);

m_pD3D11DeviceContext->RSSetViewports(1, &m_VPLuminance);

// Draw textured quad onto render target

m_pD3D11DeviceContext->Draw(NUMVERTICES, 0);

m_pD3D11DeviceContext->OMSetRenderTargets(1, &m_pChromaRT, nullptr);

m_pD3D11DeviceContext->PSSetShader(m_pPixelShaderChroma, nullptr, 0);

m_pD3D11DeviceContext->RSSetViewports(1, &m_VPChrominance);

// Draw textured quad onto render target

m_pD3D11DeviceContext->Draw(NUMVERTICES, 0);

// Release shader resource

ShaderResource->Release();

ShaderResource = nullptr;

return S_OK;

}
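As a reference for what the two draw calls above are meant to produce, the following CPU sketch computes the same two planes from a BGRA image. The coefficients are the common BT.601 limited-range integer approximation, which is an assumption on my part; the actual `g_PS_Y` and `g_PS_UV` shaders may use different constants. Averaging each 2x2 block for chroma is also an assumption that stands in for sampling into the half-size render target. All names here are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

struct Nv12Planes {
    std::vector<std::uint8_t> y;   // width * height luma samples
    std::vector<std::uint8_t> uv;  // (width / 2) * (height / 2) interleaved U,V pairs
};

// BT.601 limited-range integer approximation (assumed, see the text above).
inline std::uint8_t toY(int r, int g, int b) {
    return static_cast<std::uint8_t>(((66 * r + 129 * g + 25 * b + 128) >> 8) + 16);
}
// The +128 chroma offset is folded in as (128 << 8) before the shift so the
// shifted value is never negative.
inline std::uint8_t toU(int r, int g, int b) {
    return static_cast<std::uint8_t>((-38 * r - 74 * g + 112 * b + 128 + (128 << 8)) >> 8);
}
inline std::uint8_t toV(int r, int g, int b) {
    return static_cast<std::uint8_t>((112 * r - 94 * g - 18 * b + 128 + (128 << 8)) >> 8);
}

// bgra holds tightly packed B, G, R, A bytes; width and height must be even.
Nv12Planes bgraToNv12Planes(const std::vector<std::uint8_t>& bgra,
                            std::size_t width, std::size_t height)
{
    assert(width % 2 == 0 && height % 2 == 0);
    assert(bgra.size() == width * height * 4);

    Nv12Planes out;
    out.y.resize(width * height);
    out.uv.resize((width / 2) * (height / 2) * 2);

    // Luma pass: one Y sample per pixel.
    for (std::size_t i = 0; i < width * height; ++i) {
        const std::uint8_t* p = &bgra[i * 4];
        out.y[i] = toY(p[2], p[1], p[0]);
    }
    // Chroma pass: one U,V pair per 2x2 block, from the block's average color.
    for (std::size_t cy = 0; cy < height / 2; ++cy) {
        for (std::size_t cx = 0; cx < width / 2; ++cx) {
            int r = 0, g = 0, b = 0;
            for (std::size_t dy = 0; dy < 2; ++dy) {
                for (std::size_t dx = 0; dx < 2; ++dx) {
                    const std::uint8_t* p =
                        &bgra[((cy * 2 + dy) * width + (cx * 2 + dx)) * 4];
                    b += p[0]; g += p[1]; r += p[2];
                }
            }
            r = (r + 2) / 4; g = (g + 2) / 4; b = (b + 2) / 4;
            const std::size_t o = (cy * (width / 2) + cx) * 2;
            out.uv[o] = toU(r, g, b);
            out.uv[o + 1] = toV(r, g, b);
        }
    }
    return out;
}
```

Comparing a frame read back from the GPU against this reference is one way to check that the luma and chroma shaders are bound to the intended render targets.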

Initializing shaders:

void SetViewPort(D3D11_VIEWPORT* VP, UINT Width, UINT Height)

{

VP->Width = static_cast<FLOAT>(Width);

VP->Height = static_cast<FLOAT>(Height);

VP->MinDepth = 0.0f;

VP->MaxDepth = 1.0f;

VP->TopLeftX = 0;

VP->TopLeftY = 0;

}

HRESULT MakeRTV(ID3D11RenderTargetView** pRTV, ID3D11Texture2D* pSurf)

{

if (*pRTV)

{

(*pRTV)->Release();

*pRTV = nullptr;

}

// Create a render target view

HRESULT hr = m_pD3D11Device->CreateRenderTargetView(pSurf, nullptr, pRTV);

IF_FAILED_THROW(hr);

return S_OK;

}

HRESULT InitializeNV12Surfaces(ID3D11Texture2D* inputTexture)

{

ReleaseSurfaces();

D3D11_TEXTURE2D_DESC lOutputDuplDesc;

inputTexture->GetDesc(&lOutputDuplDesc);

// Create shared texture for all duplication threads to draw into

D3D11_TEXTURE2D_DESC DeskTexD;

RtlZeroMemory(&DeskTexD, sizeof(D3D11_TEXTURE2D_DESC));

DeskTexD.Width = lOutputDuplDesc.Width;

DeskTexD.Height = lOutputDuplDesc.Height;

DeskTexD.MipLevels = 1;

DeskTexD.ArraySize = 1;

DeskTexD.Format = lOutputDuplDesc.Format;

DeskTexD.SampleDesc.Count = 1;

DeskTexD.Usage = D3D11_USAGE_DEFAULT;

DeskTexD.BindFlags = D3D11_BIND_SHADER_RESOURCE;

HRESULT hr = m_pD3D11Device->CreateTexture2D(&DeskTexD, nullptr, &m_ShaderResourceSurf);

IF_FAILED_THROW(hr);

DeskTexD.Format = DXGI_FORMAT_R8_UNORM;

DeskTexD.BindFlags = D3D11_BIND_RENDER_TARGET;

hr = m_pD3D11Device->CreateTexture2D(&DeskTexD, nullptr, &m_LuminanceSurf);

IF_FAILED_THROW(hr);

DeskTexD.CPUAccessFlags = D3D11_CPU_ACCESS_READ;

DeskTexD.Usage = D3D11_USAGE_STAGING;

DeskTexD.BindFlags = 0;

hr = m_pD3D11Device->CreateTexture2D(&DeskTexD, NULL, &m_CPUAccessibleLuminanceSurf);

IF_FAILED_THROW(hr);

SetViewPort(&m_VPLuminance, DeskTexD.Width, DeskTexD.Height);

HRESULT Ret = MakeRTV(&m_pLumaRT, m_LuminanceSurf);

if (!SUCCEEDED(Ret))

return Ret;

DeskTexD.Width = lOutputDuplDesc.Width / 2;

DeskTexD.Height = lOutputDuplDesc.Height / 2;

DeskTexD.Format = DXGI_FORMAT_R8G8_UNORM;

DeskTexD.Usage = D3D11_USAGE_DEFAULT;

DeskTexD.CPUAccessFlags = 0;

DeskTexD.BindFlags = D3D11_BIND_RENDER_TARGET;

hr = m_pD3D11Device->CreateTexture2D(&DeskTexD, nullptr, &m_ChrominanceSurf);

IF_FAILED_THROW(hr);

DeskTexD.CPUAccessFlags = D3D11_CPU_ACCESS_READ;

DeskTexD.Usage = D3D11_USAGE_STAGING;

DeskTexD.BindFlags = 0;

hr = m_pD3D11Device->CreateTexture2D(&DeskTexD, NULL, &m_CPUAccessibleChrominanceSurf);

IF_FAILED_THROW(hr);

SetViewPort(&m_VPChrominance, DeskTexD.Width, DeskTexD.Height);

return MakeRTV(&m_pChromaRT, m_ChrominanceSurf);

}

HRESULT InitVertexShader(ID3D11VertexShader** ppID3D11VertexShader)

{

HRESULT hr = S_OK;

UINT Size = ARRAYSIZE(g_VS);

try

{

IF_FAILED_THROW(m_pD3D11Device->CreateVertexShader(g_VS, Size, NULL, ppID3D11VertexShader));

// Bind the shader that was just created through the out parameter
m_pD3D11DeviceContext->VSSetShader(*ppID3D11VertexShader, nullptr, 0);

// Vertices for drawing whole texture

VERTEX Vertices[NUMVERTICES] =

{

{ XMFLOAT3(-1.0f, -1.0f, 0), XMFLOAT2(0.0f, 1.0f) },

{ XMFLOAT3(-1.0f, 1.0f, 0), XMFLOAT2(0.0f, 0.0f) },

{ XMFLOAT3(1.0f, -1.0f, 0), XMFLOAT2(1.0f, 1.0f) },

{ XMFLOAT3(1.0f, -1.0f, 0), XMFLOAT2(1.0f, 1.0f) },

{ XMFLOAT3(-1.0f, 1.0f, 0), XMFLOAT2(0.0f, 0.0f) },

{ XMFLOAT3(1.0f, 1.0f, 0), XMFLOAT2(1.0f, 0.0f) },

};

UINT Stride = sizeof(VERTEX);

UINT Offset = 0;

D3D11_BUFFER_DESC BufferDesc;

RtlZeroMemory(&BufferDesc, sizeof(BufferDesc));

BufferDesc.Usage = D3D11_USAGE_DEFAULT;

BufferDesc.ByteWidth = sizeof(VERTEX) * NUMVERTICES;

BufferDesc.BindFlags = D3D11_BIND_VERTEX_BUFFER;

BufferDesc.CPUAccessFlags = 0;

D3D11_SUBRESOURCE_DATA InitData;

RtlZeroMemory(&InitData, sizeof(InitData));

InitData.pSysMem = Vertices;

// Create vertex buffer

IF_FAILED_THROW(m_pD3D11Device->CreateBuffer(&BufferDesc, &InitData, &m_VertexBuffer));

m_pD3D11DeviceContext->IASetVertexBuffers(0, 1, &m_VertexBuffer, &Stride, &Offset);

m_pD3D11DeviceContext->IASetPrimitiveTopology(D3D11_PRIMITIVE_TOPOLOGY_TRIANGLELIST);

D3D11_INPUT_ELEMENT_DESC Layout[] =

{

{ "POSITION", 0, DXGI_FORMAT_R32G32B32_FLOAT, 0, 0, D3D11_INPUT_PER_VERTEX_DATA, 0 },

{ "TEXCOORD", 0, DXGI_FORMAT_R32G32_FLOAT, 0, 12, D3D11_INPUT_PER_VERTEX_DATA, 0 }

};

UINT NumElements = ARRAYSIZE(Layout);

hr = m_pD3D11Device->CreateInputLayout(Layout, NumElements, g_VS, Size, &m_pVertexLayout);

// Do not bind a null layout if creation failed
IF_FAILED_THROW(hr);

m_pD3D11DeviceContext->IASetInputLayout(m_pVertexLayout);

}

// Propagate the failure code instead of returning the initial S_OK
catch (HRESULT e) { hr = e; }

return hr;

}
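The vertex table in `InitVertexShader` follows a simple rule: clip-space x in [-1, 1] maps to u in [0, 1], and clip-space y maps to v with the axis flipped, because texture v grows downwards. A small sketch of that mapping, with hypothetical names and a plain struct standing in for `VERTEX`:

```cpp
#include <array>

struct QuadVertex { float x, y, u, v; };

// Texture coordinate that lines up with a clip-space position.
constexpr float clipToU(float x) { return (x + 1.0f) * 0.5f; }
constexpr float clipToV(float y) { return (1.0f - y) * 0.5f; }

// Two triangles covering the whole viewport, in the same order as the
// Vertices table above.
std::array<QuadVertex, 6> makeFullScreenQuad()
{
    const float pos[6][2] = {
        {-1.0f, -1.0f}, {-1.0f, 1.0f}, {1.0f, -1.0f},
        { 1.0f, -1.0f}, {-1.0f, 1.0f}, {1.0f,  1.0f},
    };
    std::array<QuadVertex, 6> quad{};
    for (int i = 0; i < 6; ++i) {
        quad[i] = QuadVertex{pos[i][0], pos[i][1],
                             clipToU(pos[i][0]), clipToV(pos[i][1])};
    }
    return quad;
}
```

Because the same quad is drawn into both render targets, the viewport alone (full size for luma, half size for chroma) decides how many output samples each pass writes.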

HRESULT InitPixelShaders()

{

HRESULT hr = S_OK;

// Refer https://codeshare.io/5PJjxP for g_PS_Y & g_PS_UV blobs

try

{

UINT Size = ARRAYSIZE(g_PS_Y);

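// g_PS_Y produces luma, so it belongs to the luma shader member
hr = m_pD3D11Device->CreatePixelShader(g_PS_Y, Size, nullptr, &m_pPixelShaderLuma);

IF_FAILED_THROW(hr);

Size = ARRAYSIZE(g_PS_UV);

// g_PS_UV produces interleaved chroma, so it belongs to the chroma shader member
hr = m_pD3D11Device->CreatePixelShader(g_PS_UV, Size, nullptr, &m_pPixelShaderChroma);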

IF_FAILED_THROW(hr);

}

catch (HRESULT) {}

return hr;

}