Part 1: Before the update

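I am trying to estimate the relative pose with two different methods, solvePnP and recoverPose. The rotation matrices R agree up to some small error, but the translation vectors are completely different. What am I doing wrong? In short, I need to find the relative pose from the first frame to the second frame with both methods.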

    // Pose of the calibration rig in each frame (world -> camera)
    cv::solvePnP(constants::calibration::rig.rig3DPoints, corners1,
                 cameraMatrix, distortion, rvecPNP1, tvecPNP1);
    cv::solvePnP(constants::calibration::rig.rig3DPoints, corners2,
                 cameraMatrix, distortion, rvecPNP2, tvecPNP2);

    // Convert the rotation vectors to 3x3 rotation matrices
    Mat rodriguesRPNP1, rodriguesRPNP2;
    cv::Rodrigues(rvecPNP1, rodriguesRPNP1);
    cv::Rodrigues(rvecPNP2, rodriguesRPNP2);

    // Relative rotation and translation between the two frames
    rvecPNP = rodriguesRPNP1.inv() * rodriguesRPNP2;
    tvecPNP = rodriguesRPNP1.inv() * (tvecPNP2 - tvecPNP1);

    // Relative pose from the essential matrix
    Mat E = findEssentialMat(corners1, corners2, cameraMatrix);
    recoverPose(E, corners1, corners2, cameraMatrix, rvecRecover, tvecRecover);

Output:

    solvePnP: R:
    [0.99998963, 0.0020884471, 0.0040569459;
     -0.0020977913, 0.99999511, 0.0023003994;
     -0.0040521105, -0.0023088832, 0.99998915]
    solvePnP: t:
    [0.0014444492; 0.00018377086; -0.00045027508]
    recoverPose: R:
    [0.9999900052294586, 0.0001464890570028249, 0.004468554816042664;
     -0.0001480011106435358, 0.9999999319097322, 0.0003380476328946509;
     -0.004468504991498534, -0.0003387056052618761, 0.9999899588204144]
    recoverPose: t:
    [0.1492094050828522; -0.007288328116585327; -0.9887787587261805]
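
One thing that may explain part of the mismatch: an essential matrix determines the translation only up to scale, and the recoverPose `t` printed above has length ≈ 1 (0.149² + 0.007² + 0.989² ≈ 1), while the solvePnP translation is expressed in the units of `rig3DPoints`. An element-wise comparison therefore cannot match, and comparing directions is probably the most that is meaningful. The sketch below computes the angle between two translation directions; `translationDirectionAngle` and `norm3` are hypothetical names, and plain `std::array` stands in for `cv::Mat` so that the example has no OpenCV dependency.

```cpp
#include <array>
#include <cmath>

using Vec3 = std::array<double, 3>;

// Euclidean length of a 3-vector.
double norm3(const Vec3& v) {
    return std::sqrt(v[0] * v[0] + v[1] * v[1] + v[2] * v[2]);
}

// Angle in radians between the directions of two translation vectors.
// Only the direction is compared, because an essential-matrix
// decomposition (recoverPose) fixes t only up to an unknown scale.
// Returns NaN if either vector has zero length (direction undefined).
double translationDirectionAngle(const Vec3& a, const Vec3& b) {
    const double na = norm3(a);
    const double nb = norm3(b);
    if (na == 0.0 || nb == 0.0) {
        return std::nan("");
    }
    double c = (a[0] * b[0] + a[1] * b[1] + a[2] * b[2]) / (na * nb);
    // Clamp to [-1, 1] so rounding error cannot push acos out of range.
    c = std::fmax(-1.0, std::fmin(1.0, c));
    return std::acos(c);
}
```

A zero-length input returns NaN on purpose: with no translation there is no direction to compare.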

Part 2: After the update

Update: I have changed how R and t are composed after solvePnP:

    // Pose of the calibration rig in each frame (world -> camera)
    cv::solvePnP(constants::calibration::rig.rig3DPoints, corners1,
                 cameraMatrix, distortion, rvecPNP1, tvecPNP1);
    cv::solvePnP(constants::calibration::rig.rig3DPoints, corners2,
                 cameraMatrix, distortion, rvecPNP2, tvecPNP2);

    // Convert the rotation vectors to 3x3 rotation matrices
    Mat rodriguesRPNP1, rodriguesRPNP2;
    cv::Rodrigues(rvecPNP1, rodriguesRPNP1);
    cv::Rodrigues(rvecPNP2, rodriguesRPNP2);

    // Relative rotation, and the updated composition of the translation
    rvecPNP = rodriguesRPNP1.inv() * rodriguesRPNP2;
    tvecPNP = rodriguesRPNP2 * tvecPNP2 - rodriguesRPNP1 * tvecPNP1;

I tested this composition with real camera motion, and it seems to be correct.
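
For comparison, here is a sketch of how two solvePnP poses combine under OpenCV's documented conventions, assuming `rig3DPoints` are the world points and both frames come from the same camera. solvePnP returns the world-to-camera transform x_c = R·X + t, and the OpenCV documentation describes the `R`, `t` from recoverPose as the change of basis from the first camera's coordinate system to the second's, x_c2 = R·x_c1 + t. Substituting X = R1ᵀ(x_c1 - t1) into x_c2 = R2·X + t2 gives R_rel = R2·R1ᵀ and t_rel = t2 - R_rel·t1. Note that R2·R1ᵀ is not in general the same product as `R1.inv() * R2`; the two agree only when the rotations commute. The names `Pose` and `relativePose` are hypothetical, and `std::array` replaces `cv::Mat` to keep the sketch self-contained.

```cpp
#include <array>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<Vec3, 3>;  // row-major: M[row][col]

// World -> camera transform: x_cam = R * X + t (solvePnP convention).
struct Pose {
    Mat3 R;
    Vec3 t;
};

Mat3 transpose(const Mat3& A) {
    Mat3 T{};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            T[i][j] = A[j][i];
    return T;
}

Mat3 mul(const Mat3& A, const Mat3& B) {
    Mat3 C{};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            for (int k = 0; k < 3; ++k)
                C[i][j] += A[i][k] * B[k][j];
    return C;
}

Vec3 mul(const Mat3& A, const Vec3& v) {
    Vec3 r{};
    for (int i = 0; i < 3; ++i)
        for (int k = 0; k < 3; ++k)
            r[i] += A[i][k] * v[k];
    return r;
}

// Camera-1 -> camera-2 transform x_c2 = R_rel * x_c1 + t_rel,
// built from the two world -> camera poses.
Pose relativePose(const Pose& p1, const Pose& p2) {
    Pose rel{};
    rel.R = mul(p2.R, transpose(p1.R));  // R_rel = R2 * R1^T
    const Vec3 Rt1 = mul(rel.R, p1.t);
    for (int i = 0; i < 3; ++i)
        rel.t[i] = p2.t[i] - Rt1[i];     // t_rel = t2 - R_rel * t1
    return rel;
}
```

Even with this convention, t_rel is still in rig units, so only its direction can be compared with the recoverPose translation.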

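In addition, recoverPose now receives points from a non-planar object, and these points are in general position. The tested motion is also not a pure rotation, to avoid the degenerate case, yet the translation vectors are still very different.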
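
Why pure rotation is degenerate, and why the translation scale cannot be recovered even in the general case, both follow from the epipolar constraint. Let x_1, x_2 be the same 3D point in the two camera frames, related by x_2 = R x_1 + t (the recoverPose convention), and let \hat{x}_i = K^{-1}(u_i, v_i, 1)^T be the normalized image coordinates, which are proportional to x_i. Since [t]_\times t = 0:

$$
[t]_\times x_2 = [t]_\times R\, x_1
\;\Rightarrow\;
x_2^\top [t]_\times R\, x_1 = x_2^\top [t]_\times x_2 = 0
\;\Rightarrow\;
\hat{x}_2^\top E\, \hat{x}_1 = 0,
\qquad
E = [t]_\times R,
\qquad
[t]_\times =
\begin{pmatrix}
0 & -t_3 & t_2 \\
t_3 & 0 & -t_1 \\
-t_2 & t_1 & 0
\end{pmatrix}
$$

If t = 0 (pure rotation), then E = 0 and the constraint holds for every correspondence, so there is nothing to estimate. For any λ ≠ 0, replacing t by λt scales E by λ and leaves the constraint unchanged, so only the direction of t is determined; this is consistent with the unit-length translations that recoverPose printed above.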

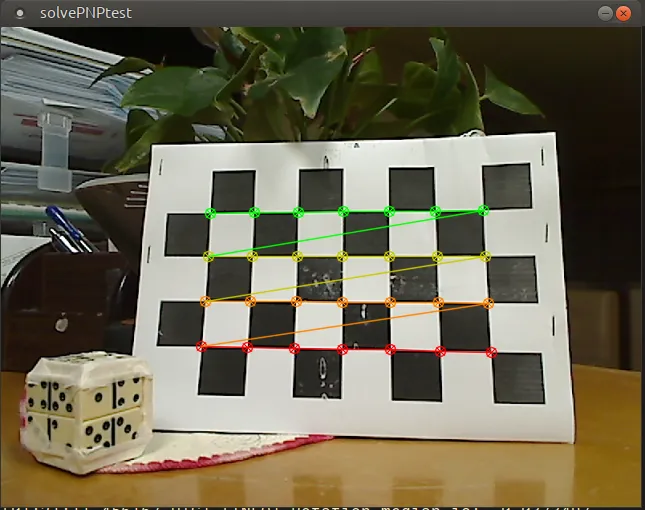

First frame: the green points are tracked and matched, and they appear in the second frame (drawn in blue there):

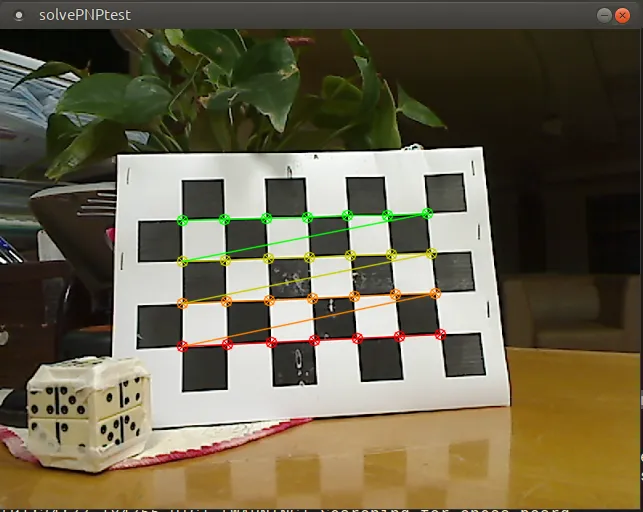

Second frame:

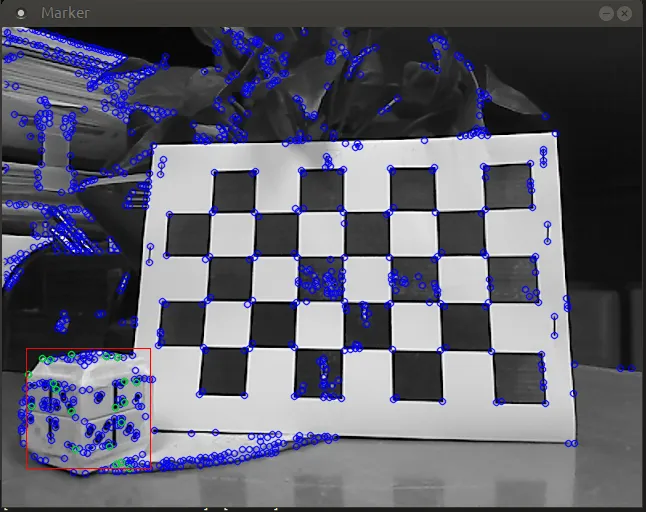

Second frame: tracked points in general position, used for recoverPose:

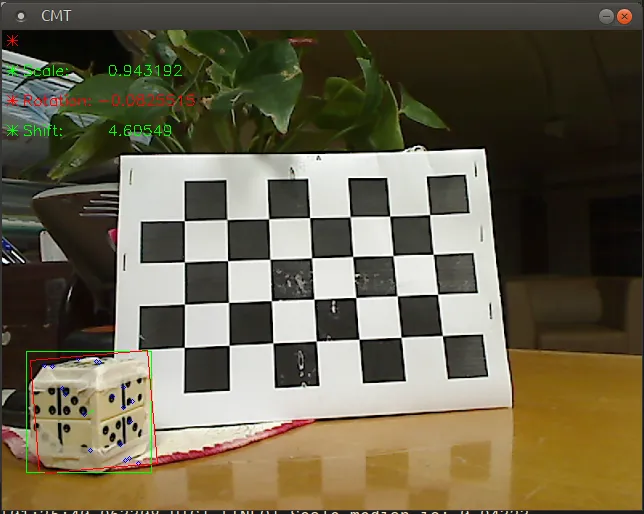

    CMT cmt;
    // ...
    // ... cmt module finds and tracks points here
    // ...
    std::vector<Point2f> coords1 = cmt.getPoints();
    std::vector<int> classes1 = cmt.getClasses();

    cmt.processFrame(imGray2);

    std::vector<Point2f> coords2 = cmt.getPoints();
    std::vector<int> classes2 = cmt.getClasses();

    std::vector<Point2f> coords3, coords4;
    // Make sure that points and their classes are in the same order
    // and the vectors of the same size
    utils::fuse(coords1, classes1, coords2, classes2, coords3, coords4,
                constants::marker::randomPointsInMark);

    Mat E = findEssentialMat(coords3, coords4, cameraMatrix, cv::RANSAC, 0.9999);
    int numOfInliers = recoverPose(E, coords3, coords4, cameraMatrix,
                                   rvecRecover, tvecRecover);
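
One way to check which of the two estimates is consistent with the tracked correspondences is to build E = [t]ₓR from a candidate relative pose and evaluate the algebraic epipolar residual x̂₂ᵀ E x̂₁ on the matched points. The sketch below does this with hypothetical names (`Pixel`, `Intrinsics`, `essentialFromPose`, `epipolarResiduals`) and plain arrays instead of `cv::Mat`. It assumes a pinhole camera without distortion; in the calls above, `distortion` is passed to neither findEssentialMat nor recoverPose either. Because E is linear in t, scaling t scales every residual by the same factor, so normalize t first if residuals from two poses are to be compared.

```cpp
#include <array>
#include <cmath>
#include <cstddef>
#include <vector>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<Vec3, 3>;  // row-major

struct Pixel { double u, v; };                 // hypothetical pixel type
struct Intrinsics { double fx, fy, cx, cy; };  // pinhole, no distortion

// Skew-symmetric matrix [t]_x, so that [t]_x * v equals t cross v.
Mat3 skew(const Vec3& t) {
    return {{{0.0, -t[2], t[1]}, {t[2], 0.0, -t[0]}, {-t[1], t[0], 0.0}}};
}

Mat3 mul(const Mat3& A, const Mat3& B) {
    Mat3 C{};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            for (int k = 0; k < 3; ++k)
                C[i][j] += A[i][k] * B[k][j];
    return C;
}

// E = [t]_x * R for the camera-1 -> camera-2 pose x2 = R * x1 + t.
Mat3 essentialFromPose(const Mat3& R, const Vec3& t) {
    return mul(skew(t), R);
}

// Normalized image coordinates K^{-1} * (u, v, 1).
Vec3 normalizePixel(const Pixel& p, const Intrinsics& K) {
    return {(p.u - K.cx) / K.fx, (p.v - K.cy) / K.fy, 1.0};
}

// |x2^T * E * x1| for each correspondence (pts1[n], pts2[n]).
std::vector<double> epipolarResiduals(const Mat3& E,
                                      const std::vector<Pixel>& pts1,
                                      const std::vector<Pixel>& pts2,
                                      const Intrinsics& K) {
    std::vector<double> res;
    for (std::size_t n = 0; n < pts1.size() && n < pts2.size(); ++n) {
        const Vec3 x1 = normalizePixel(pts1[n], K);
        const Vec3 x2 = normalizePixel(pts2[n], K);
        double r = 0.0;
        for (int i = 0; i < 3; ++i)
            for (int j = 0; j < 3; ++j)
                r += x2[i] * E[i][j] * x1[j];
        res.push_back(std::fabs(r));
    }
    return res;
}
```

For a pose that explains the matches, the residuals should be close to zero relative to those of a wrong pose; the algebraic residual is not a pixel distance, so it is only useful for comparing candidates.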

Output:

    solvePnP: R:
    [ 0.97944641, 0.072178222, 0.18834825;
     -0.07216832, 0.99736851, -0.0069195116;
     -0.18835205, -0.0068155089, 0.98207784]
    solvePnP: t:
    [-0.041602995; 0.014756925; 0.025671512]
    recoverPose: R:
    [0.8115000456601129, 0.03013366385237642, -0.5835748779689431;
     0.05045522914264587, 0.9913266281414459, 0.1213498503908197;
     0.5821700316452212, -0.1279198133228133, 0.80294120308629]
    recoverPose: t:
    [0.6927871089455181; -0.1254653960405977; 0.7101439685551703]
    recoverPose: numOfInliers: 18

I also tried the case where the camera is static (no rotation, no translation): R is close, but the translation is not. So what am I missing here?
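
In the static-camera test the true translation is zero, so the unit-length t that recoverPose reports there presumably reflects noise rather than motion, and only the rotations can be compared meaningfully. A compact way to quantify "R is close" is the angle of the residual rotation R_aᵀR_b, θ = arccos((tr(R_aᵀR_b) - 1)/2). The sketch below computes it; `rotationAngleBetween` is a hypothetical name, and `std::array` replaces `cv::Mat` to keep the example self-contained.

```cpp
#include <array>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<Vec3, 3>;  // row-major

// Angle in radians of the rotation Ra^T * Rb, i.e. how far apart two
// rotation matrices are: theta = acos((trace(Ra^T * Rb) - 1) / 2).
double rotationAngleBetween(const Mat3& Ra, const Mat3& Rb) {
    // trace(Ra^T * Rb) equals the element-wise sum of Ra[i][j] * Rb[i][j].
    double tr = 0.0;
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            tr += Ra[i][j] * Rb[i][j];
    // Clamp so that rounding error cannot push acos out of its domain.
    const double c = std::fmax(-1.0, std::fmin(1.0, (tr - 1.0) / 2.0));
    return std::acos(c);
}
```

The result is symmetric in its arguments and independent of which frame the rotations are expressed in, which makes it a convenient single number for the "R is close" comparison.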