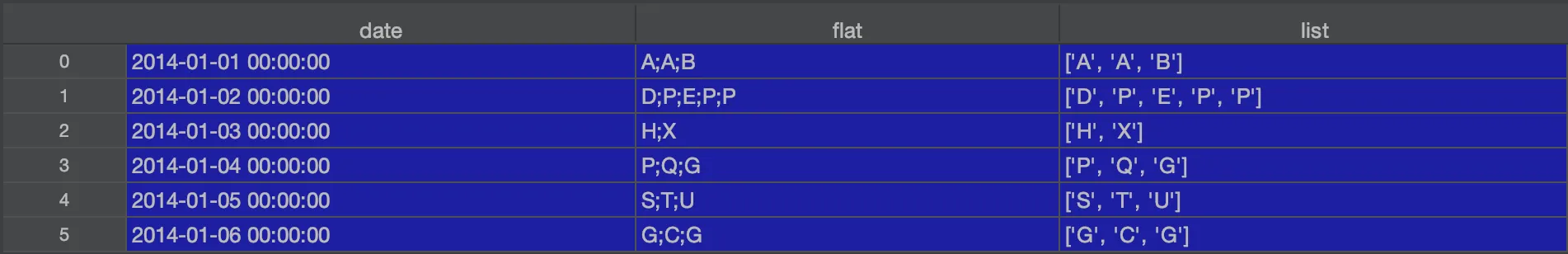

I have the following data:

    import pandas as pd
    from pyspark.sql import SparkSession
    from pyspark.sql import functions as F

    data = {'date': ['2014-01-01', '2014-01-02', '2014-01-03', '2014-01-04', '2014-01-05', '2014-01-06'],
            'flat': ['A;A;B', 'D;P;E;P;P', 'H;X', 'P;Q;G', 'S;T;U', 'G;C;G']}
    data = pd.DataFrame(data)
    # Convert the date strings to datetimes once, after building the DataFrame
    data['date'] = pd.to_datetime(data['date'])

    spark = SparkSession.builder \
        .master('local[*]') \
        .config("spark.driver.memory", "500g") \
        .appName('my-pandasToSparkDF-app') \
        .getOrCreate()
    spark.conf.set("spark.sql.execution.arrow.enabled", "true")
    spark.sparkContext.setLogLevel("OFF")

    df = spark.createDataFrame(data)
    # Split the ';'-separated string into an array column (';' needs no escaping)
    new_frame = df.withColumn("list", F.split("flat", ";"))

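I would like to add a new column holding the number of occurrences of each distinct element (sorted in ascending order), and another column holding the maximum of those counts.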

    +-------------------+-----------+---------------------+-----------+----+
    |               date|flat       |list                 |occurrences|max |
    +-------------------+-----------+---------------------+-----------+----+
    |2014-01-01 00:00:00|A;A;B      |['A','A','B']        |[1,2]      |2   |
    |2014-01-02 00:00:00|D;P;E;P;P  |['D','P','E','P','P']|[1,1,3]    |3   |
    |2014-01-03 00:00:00|H;X        |['H','X']            |[1,1]      |1   |
    |2014-01-04 00:00:00|P;Q;G      |['P','Q','G']        |[1,1,1]    |1   |
    |2014-01-05 00:00:00|S;T;U      |['S','T','U']        |[1,1,1]    |1   |
    |2014-01-06 00:00:00|G;C;G      |['G','C','G']        |[1,2]      |2   |
    +-------------------+-----------+---------------------+-----------+----+
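To pin down what the `occurrences` and `max` columns are expected to contain, the per-row logic can be sketched in plain Python. This is only an illustration of the intended result, not a Spark solution; the helper name `count_occurrences` and its `sep` parameter are hypothetical.

```python
from collections import Counter


def count_occurrences(flat, sep=";"):
    """Return (ascending counts of each distinct element, largest count)."""
    # Counter maps each distinct element to how many times it appears.
    counts = sorted(Counter(flat.split(sep)).values())
    return counts, max(counts)


rows = ['A;A;B', 'D;P;E;P;P', 'H;X', 'P;Q;G', 'S;T;U', 'G;C;G']
results = [count_occurrences(row) for row in rows]

for row, (occurrences, maximum) in zip(rows, results):
    print(row, occurrences, maximum)
```

One possible way to apply the same logic in Spark would be to wrap such a function in `pyspark.sql.functions.udf` with an explicit return type, although built-in array functions may well perform better on large data.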

Thank you very much!