2 Answers

6

Unfortunately, this feature is not available in the older Spark MLlib 1.5.1.

However, you can find it in the more recent Pipeline API of Spark MLlib 2.x, under the name RandomForestClassifier:

import org.apache.spark.ml.Pipeline
import org.apache.spark.ml.classification.RandomForestClassifier
import org.apache.spark.ml.feature.{IndexToString, StringIndexer, VectorIndexer}
import org.apache.spark.mllib.util.MLUtils

// Load and parse the data file, converting it to a DataFrame.
val data = MLUtils.loadLibSVMFile(sc, "data/mllib/sample_libsvm_data.txt").toDF

// Index labels, adding metadata to the label column.
// Fit on whole dataset to include all labels in index.
val labelIndexer = new StringIndexer()
  .setInputCol("label")
  .setOutputCol("indexedLabel")
  .fit(data)

// Automatically identify categorical features, and index them.
// Set maxCategories so features with > 4 distinct values are treated as continuous.
val featureIndexer = new VectorIndexer()
  .setInputCol("features")
  .setOutputCol("indexedFeatures")
  .setMaxCategories(4)
  .fit(data)

// Split the data into training and test sets (30% held out for testing)
val Array(trainingData, testData) = data.randomSplit(Array(0.7, 0.3))

// Train a RandomForest model.
val rf = new RandomForestClassifier()
  .setLabelCol(labelIndexer.getOutputCol)
  .setFeaturesCol(featureIndexer.getOutputCol)
  .setNumTrees(10)

// Convert indexed labels back to original labels.
val labelConverter = new IndexToString()
  .setInputCol("prediction")
  .setOutputCol("predictedLabel")
  .setLabels(labelIndexer.labels)

// Chain indexers and forest in a Pipeline
val pipeline = new Pipeline()
  .setStages(Array(labelIndexer, featureIndexer, rf, labelConverter))

// Fit model. This also runs the indexers.
val model = pipeline.fit(trainingData)

// Make predictions.
val predictions = model.transform(testData)
// predictions: org.apache.spark.sql.DataFrame = [label: double, features: vector, indexedLabel: double, indexedFeatures: vector, rawPrediction: vector, probability: vector, prediction: double, predictedLabel: string]

predictions.show(10)

// +-----+--------------------+------------+--------------------+-------------+-----------+----------+--------------+
// |label|            features|indexedLabel|     indexedFeatures|rawPrediction|probability|prediction|predictedLabel|
// +-----+--------------------+------------+--------------------+-------------+-----------+----------+--------------+
// |  0.0|(692,[124,125,126...|         1.0|(692,[124,125,126...|   [0.0,10.0]|  [0.0,1.0]|       1.0|           0.0|
// |  0.0|(692,[124,125,126...|         1.0|(692,[124,125,126...|    [1.0,9.0]|  [0.1,0.9]|       1.0|           0.0|
// |  0.0|(692,[129,130,131...|         1.0|(692,[129,130,131...|    [1.0,9.0]|  [0.1,0.9]|       1.0|           0.0|
// |  0.0|(692,[154,155,156...|         1.0|(692,[154,155,156...|    [1.0,9.0]|  [0.1,0.9]|       1.0|           0.0|
// |  0.0|(692,[154,155,156...|         1.0|(692,[154,155,156...|    [1.0,9.0]|  [0.1,0.9]|       1.0|           0.0|
// |  0.0|(692,[181,182,183...|         1.0|(692,[181,182,183...|    [1.0,9.0]|  [0.1,0.9]|       1.0|           0.0|
// |  1.0|(692,[99,100,101,...|         0.0|(692,[99,100,101,...|    [4.0,6.0]|  [0.4,0.6]|       1.0|           0.0|
// |  1.0|(692,[123,124,125...|         0.0|(692,[123,124,125...|   [10.0,0.0]|  [1.0,0.0]|       0.0|           1.0|
// |  1.0|(692,[124,125,126...|         0.0|(692,[124,125,126...|   [10.0,0.0]|  [1.0,0.0]|       0.0|           1.0|
// |  1.0|(692,[125,126,127...|         0.0|(692,[125,126,127...|   [10.0,0.0]|  [1.0,0.0]|       0.0|           1.0|
// +-----+--------------------+------------+--------------------+-------------+-----------+----------+--------------+
// only showing top 10 rows

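Note: this example is taken from the official Spark MLlib documentation for the ML random forest classifier.

Here is an explanation of some of the output columns:

predictionCol is the predicted label. rawPredictionCol is a vector of length # classes that holds the counts of training instance labels at the tree node which makes the prediction (classification only). probabilityCol is a vector of length # classes equal to rawPrediction normalized to a multinomial distribution (classification only).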
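To make the relationship between rawPrediction and probability concrete, here is a minimal sketch in plain Scala that needs no Spark. It assumes rawPrediction holds per-class counts, as described above; `normalize` is a hypothetical helper written for illustration and is not part of the Spark API.

```scala
// Normalize a vector of per-class counts into class probabilities.
// `normalize` is a hypothetical helper, not a Spark function.
def normalize(rawPrediction: Array[Double]): Array[Double] = {
  val total = rawPrediction.sum
  // Guard against an all-zero vector to avoid dividing by zero.
  if (total == 0.0) rawPrediction.map(_ => 0.0)
  else rawPrediction.map(_ / total)
}

// A rawPrediction value taken from the output table above (10 trees).
val probability = normalize(Array(1.0, 9.0))
println(probability.mkString("[", ",", "]")) // prints [0.1,0.9]
```

Applied to the rows of the table, this reproduces the probability column, for example [4.0,6.0] becomes [0.4,0.6].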

- eliasah

7

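I see... why would they create both ml-randomForest and mllib-randomForest? What is the difference between these two libraries? Why not merge them into one? - Edamame

The spark.ml package aims to provide a uniform set of high-level APIs, built on top of DataFrames, that help users create and tune practical machine learning pipelines, whereas the original MLlib library works on RDDs. Building algorithms on DataFrames is conceptually very different from traditional map-reduce operations on RDDs. Unifying the two libraries is not as simple as it sounds. - eliasah

My training data is very large and stored in an RDD. Is there a way I can use an RDD to train ml-randomForest? Or is there a way I can use mllib-randomForest to retrieve the probabilities? Thanks! - Edamame

You need to convert it to a DataFrame; there is no other way. The type conversion is not yet optimized, but it won't be too expensive. Moreover, operations on DataFrames are optimized by Spark's Project Tungsten and therefore run faster. This means that the time you may lose on the conversion can be won back at the computation level when you apply the algorithm. - eliasah

A DataFrame is a structured abstraction over an RDD; it is not itself an RDD. Conceptually it is the same as a data frame in R/Pandas, but because it is backed by an RDD internally, it is distributed. In other words, if your data fits in your RDD, it will also fit in your DataFrame. - eliasah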


4

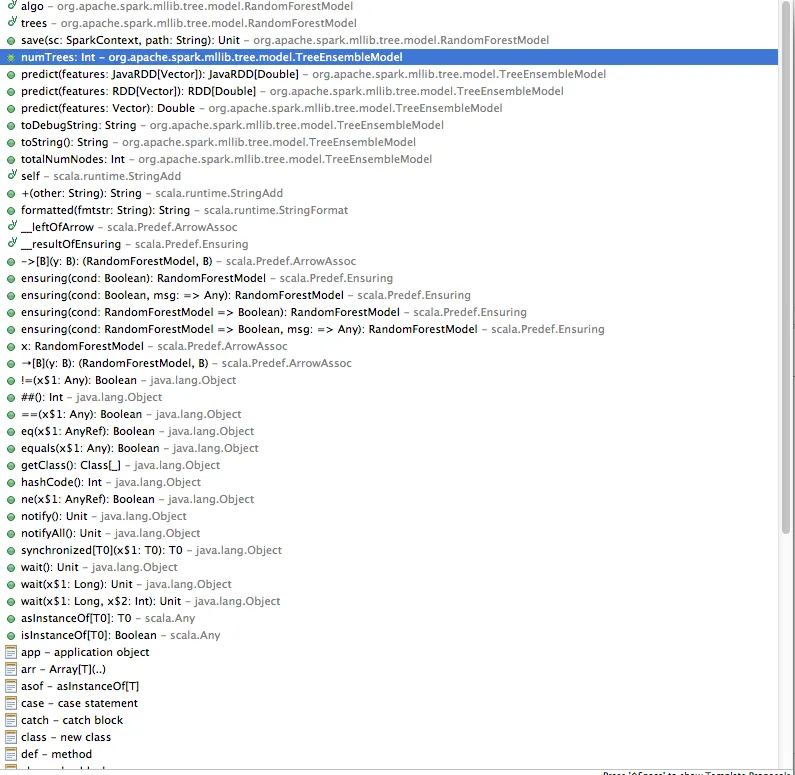

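You cannot obtain the classification probabilities directly, but it is relatively easy to compute them yourself. A RandomForest is an ensemble of trees, and its output probability for a class is the number of trees that vote for that class divided by the total number of trees.

Since the RandomForestModel in MLlib gives you access to the trained trees, it is easy to do this yourself. The following code gives the probability for a binary classification problem. Generalizing it to multi-class classification is straightforward.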

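import org.apache.spark.mllib.regression.LabeledPoint
import org.apache.spark.mllib.tree.model.RandomForestModel
import org.apache.spark.rdd.RDD

// Fraction of trees that predict class 1.0 (binary classification only).
def predict(points: RDD[LabeledPoint], model: RandomForestModel): RDD[Double] = {
  val numTrees = model.trees.length
  // Broadcast the trees so each executor receives them only once.
  val trees = points.sparkContext.broadcast(model.trees)
  points.map { point =>
    trees.value
      .map(_.predict(point.features))
      .sum / numTrees
  }
}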

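For the multi-class case, you only need to replace the map with .map(_.predict(point.features) -> 1.0), group by key instead of summing everything, and finally take the maximum of the resulting values.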
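The multi-class recipe can be sketched in plain Scala without a Spark cluster. This is only an illustration under stated assumptions: `Tree`, `classProbabilities`, and `toyTrees` are hypothetical names, and each tree is modeled as a simple function from features to a class label. With a real RandomForestModel you would map over model.trees and call _.predict(point.features) instead.

```scala
// Hypothetical stand-in for a trained decision tree: features in, class label out.
type Tree = Array[Double] => Double

// Fraction of trees voting for each class label.
def classProbabilities(trees: Seq[Tree], features: Array[Double]): Map[Double, Double] =
  trees
    .map(tree => tree(features) -> 1.0) // one vote per tree, keyed by predicted label
    .groupBy(_._1)                      // group the votes by label
    .map { case (label, votes) => label -> votes.map(_._2).sum / trees.length }

// Four toy "trees" that ignore their input and always vote for a fixed class.
val toyTrees: Seq[Tree] = Seq(_ => 0.0, _ => 2.0, _ => 2.0, _ => 1.0)
val probs = classProbabilities(toyTrees, Array(0.5, 1.5))

// The predicted class is the label with the largest share of votes.
val predicted = probs.maxBy(_._2)._1
println(s"$probs -> $predicted")
```

Here class 2.0 receives two of the four votes, so it is the predicted class with an estimated probability of 0.5. The sketch assumes at least one tree; with an empty ensemble the map is empty and maxBy would throw.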

- TNM

7

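Thanks TNM! But in my use case I am applying the predict function to a single data point rather than to an RDD, i.e. I have modelObject.predict(myPoint), where myPoint is of type org.apache.spark.mllib.linalg.Vector. Can I still compute the probability in this case? Thanks! - Edamame

Yes. Just change the input type of the function to point: Vector, remove the points.map part, and start directly with trees.map{...} instead. - TNM

Thanks TNM! When I try val trees = point.sparkContext.broadcast(modelObject.trees), where point is of type org.apache.spark.mllib.linalg.Vector, I get the error: value sparkContext is not a member of org.apache.spark.mllib.linalg.Vector. Am I missing something? Is there a way around this? Thanks! - Edamame

It seems to work if I do this: val prob = modelObject.trees.map(_.predict(point)).sum / modelObject.trees.length. Is this correct? Thanks! - Edamame

That is correct. The broadcast in the original answer is only an optimization. - TNM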


Content provided by Stack Overflow.
