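Things have changed since the accepted answer. There is now an alternative to a segmented AVCaptureMovieFileOutput that doesn't drop frames on iOS when a new segment is created, and that alternative is AVAssetWriter!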

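As of iOS 14, AVAssetWriter can create fragmented MPEG-4, which are essentially in-memory MPEG-4 files. Although intended for HLS streaming applications, it is also a very convenient way of caching video and audio content.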

This new capability was presented by Takayuki Mizuno in the WWDC 2020 session "Author fragmented MPEG-4 content with AVAssetWriter".

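With a fragmented-MP4 AVAssetWriter at hand, it is not hard to solve this problem: write the mp4 segments to disk and play them back at different time offsets using several AVQueuePlayers and AVPlayerLayers.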
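A media segment on its own is not a playable file; it needs the initialization segment in front of it. The following is a minimal sketch of that "write a segment to disk" step, assuming a hypothetical helper named `writeStandaloneSegment` (not part of AVFoundation) and the same four-digit file naming used in the full listing further down. Unlike the listing, it propagates write errors instead of using `try!`.

```swift
import Foundation

/// Hypothetical helper: prepends the initialization segment to one media
/// segment and writes the result as a standalone .mp4 file.
func writeStandaloneSegment(initialization: Data,
                            media: Data,
                            index: Int,
                            directory: URL) throws -> URL {
    // Zero-padded name such as "0007.mp4", so files sort in capture order.
    let fileName = String(format: "%04ld.mp4", index)
    let fileURL = directory.appendingPathComponent(fileName)

    // Initialization data first, then the media segment.
    let mp4Data = initialization + media
    try mp4Data.write(to: fileURL)
    return fileURL
}
```

The returned URL can be handed straight to `AVAsset(url:)`, as the listing does for each separable segment.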

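So this would be a fourth solution: capture the camera stream and write it to disk as fragmented mp4 using AVAssetWriter's .mpeg4AppleHLS output profile, then play the video back with different delays using AVQueuePlayers and AVPlayerLayers.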
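The "different delays" part boils down to simple time arithmetic: each player starts at the timestamp of the first captured frame plus its delay. Here is a rough sketch of that calculation, assuming capture timestamps are on the host clock (which the listing further down relies on). The helper name `playbackStartTimes` and the sample timestamp are made up for illustration.

```swift
import CoreMedia

/// Hypothetical helper: returns one host-clock start time per delayed player.
func playbackStartTimes(captureStart: CMTime, delaysInSeconds: [Int]) -> [CMTime] {
    return delaysInSeconds.map { delay in
        // Start time = first captured frame + this player's delay.
        CMTimeAdd(captureStart, CMTime(value: CMTimeValue(delay), timescale: 1))
    }
}

// Made-up capture start time, purely for illustration.
let captureStart = CMTime(value: 1_000, timescale: 1)
let starts = playbackStartTimes(captureStart: captureStart,
                                delaysInSeconds: [5, 20, 30])
print(starts.map { CMTimeGetSeconds($0) })
```

Each resulting time is what would be passed as the `atHostTime` argument of `setRate(_:time:atHostTime:)` for the corresponding player.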

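If you need to support iOS 13 and below, you will have to replace the segmented AVAssetWriter, which can get technical quickly, especially if you also want to write audio. Thanks, Takayuki Mizuno!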

    import UIKit
    import AVFoundation
    import UniformTypeIdentifiers

    class ViewController: UIViewController {

        // Playback delay, in seconds, for each player/layer pair.
        let playbackDelays: [Int] = [5, 20, 30]
        // Target duration of each fragmented-MP4 segment (2 seconds).
        let segmentDuration = CMTime(value: 2, timescale: 1)

        var assetWriter: AVAssetWriter!
        var videoInput: AVAssetWriterInput!
        var startTime: CMTime!
        var writerStarted = false
        let session = AVCaptureSession()
        var segment = 0
        var outputDir: URL!
        var initializationData = Data()
        var layers: [AVPlayerLayer] = []
        var players: [AVQueuePlayer] = []

        override func viewDidLoad() {
            super.viewDidLoad()

            // One queue player and one layer per playback delay.
            for _ in 0..<playbackDelays.count {
                let player = AVQueuePlayer()
                // Must be false to use setRate(_:time:atHostTime:) later.
                player.automaticallyWaitsToMinimizeStalling = false
                let layer = AVPlayerLayer(player: player)
                layer.videoGravity = .resizeAspectFill
                layers.append(layer)
                players.append(player)
                view.layer.addSublayer(layer)
            }

            outputDir = FileManager.default.urls(for: .documentDirectory, in: .userDomainMask).first!

            // Configure the writer to hand fragmented MPEG-4 segments to its delegate.
            assetWriter = AVAssetWriter(contentType: UTType.mpeg4Movie)
            assetWriter.outputFileTypeProfile = .mpeg4AppleHLS
            assetWriter.preferredOutputSegmentInterval = segmentDuration
            assetWriter.initialSegmentStartTime = .zero
            assetWriter.delegate = self

            let videoOutputSettings: [String: Any] = [
                AVVideoCodecKey: AVVideoCodecType.h264,
                AVVideoWidthKey: 1024,
                AVVideoHeightKey: 720
            ]
            videoInput = AVAssetWriterInput(mediaType: .video, outputSettings: videoOutputSettings)
            videoInput.expectsMediaDataInRealTime = true
            assetWriter.add(videoInput)

            // Camera capture. The local is named cameraInput so that it does not
            // shadow the videoInput property (the writer input) used above.
            let videoDevice = AVCaptureDevice.default(for: .video)!
            let cameraInput = try! AVCaptureDeviceInput(device: videoDevice)
            session.addInput(cameraInput)

            let videoOutput = AVCaptureVideoDataOutput()
            videoOutput.setSampleBufferDelegate(self, queue: DispatchQueue.main)
            session.addOutput(videoOutput)
            session.startRunning()
        }

        override func viewDidLayoutSubviews() {
            super.viewDidLayoutSubviews()

            // Lay the player layers out side by side.
            let size = view.bounds.size
            let layerWidth = size.width / CGFloat(layers.count)
            for i in 0..<layers.count {
                let layer = layers[i]
                layer.frame = CGRect(x: CGFloat(i) * layerWidth, y: 0, width: layerWidth, height: size.height)
            }
        }

        override var supportedInterfaceOrientations: UIInterfaceOrientationMask {
            return .landscape
        }
    }

    extension ViewController: AVCaptureVideoDataOutputSampleBufferDelegate {

        func captureOutput(_ output: AVCaptureOutput, didOutput sampleBuffer: CMSampleBuffer, from connection: AVCaptureConnection) {
            // Start the writer session at the timestamp of the first frame.
            if startTime == nil {
                let success = assetWriter.startWriting()
                assert(success)
                startTime = sampleBuffer.presentationTimeStamp
                assetWriter.startSession(atSourceTime: startTime)
            }

            if videoInput.isReadyForMoreMediaData {
                videoInput.append(sampleBuffer)
            }
        }
    }

    extension ViewController: AVAssetWriterDelegate {

        func assetWriter(_ writer: AVAssetWriter, didOutputSegmentData segmentData: Data, segmentType: AVAssetSegmentType) {
            print("segmentType: \(segmentType.rawValue) - size: \(segmentData.count)")

            switch segmentType {
            case .initialization:
                // Keep the initialization segment; it is prepended to every media segment.
                initializationData = segmentData
            case .separable:
                // Write initialization data + media segment as a standalone mp4 file.
                let fileURL = outputDir.appendingPathComponent(String(format: "%.4i.mp4", segment))
                segment += 1
                let mp4Data = initializationData + segmentData
                try! mp4Data.write(to: fileURL)

                // Queue the new segment on every player.
                let asset = AVAsset(url: fileURL)
                for i in 0..<players.count {
                    let player = players[i]
                    let playerItem = AVPlayerItem(asset: asset)
                    player.insert(playerItem, after: nil)

                    // A player that is not yet running is scheduled to start
                    // playbackDelays[i] seconds after the first captured frame.
                    if player.rate == 0 && player.status == .readyToPlay {
                        let hostStartTime: CMTime = startTime + CMTime(value: CMTimeValue(playbackDelays[i]), timescale: 1)
                        player.preroll(atRate: 1) { prerolled in
                            guard prerolled else { return }
                            player.setRate(1, time: .invalid, atHostTime: hostStartTime)
                        }
                    }
                }
            @unknown default:
                break
            }
        }
    }

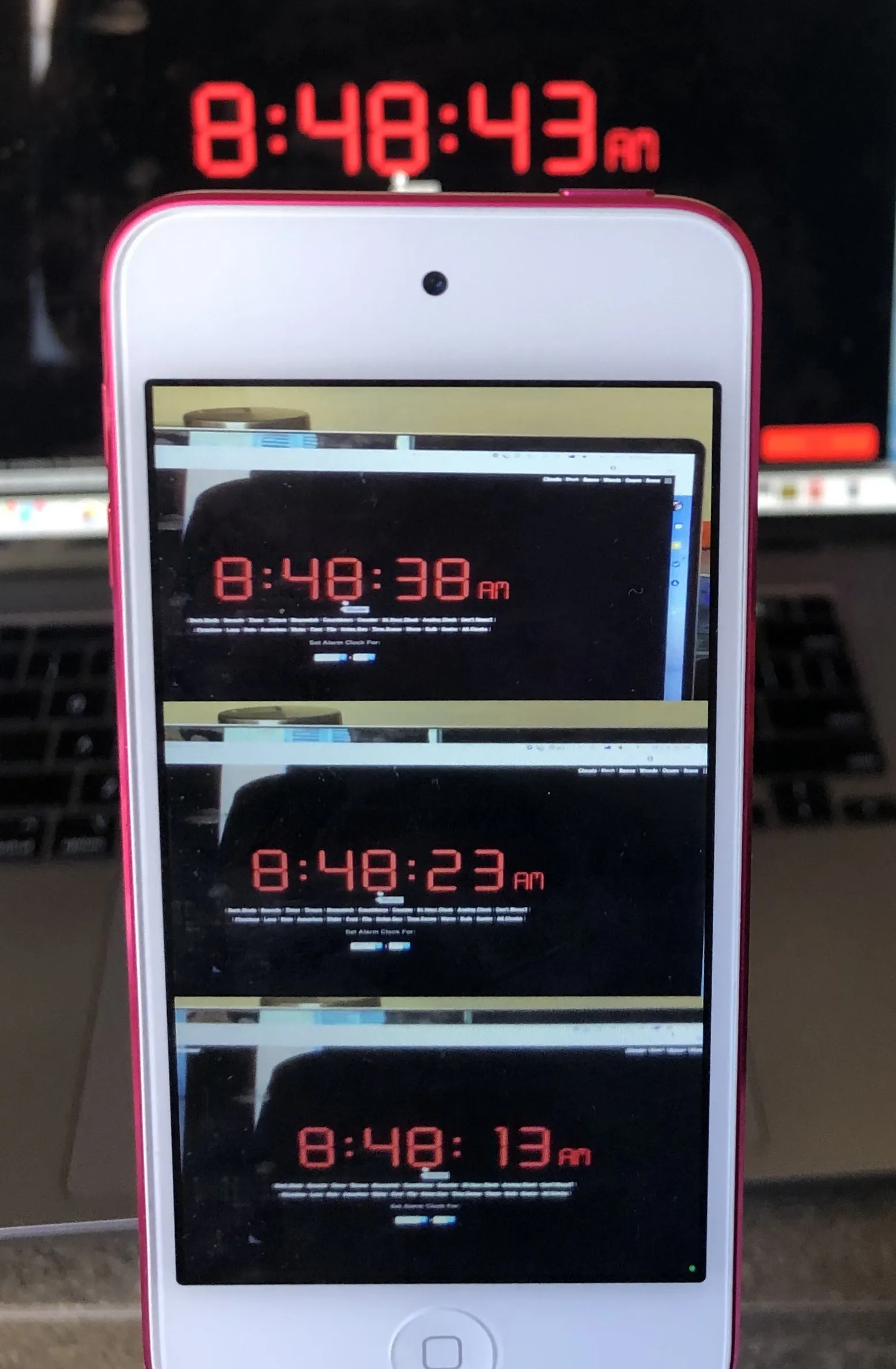

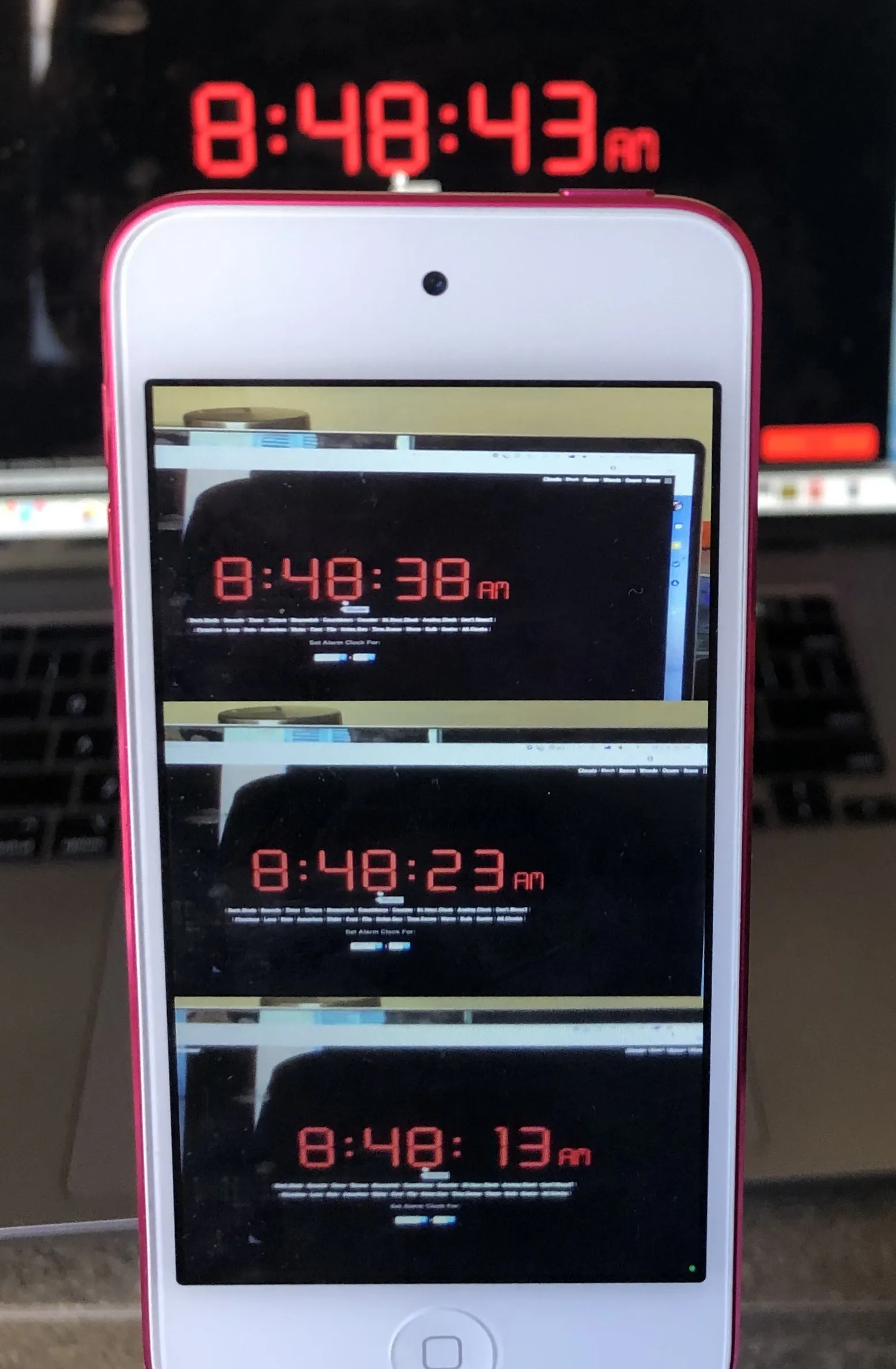

结果看起来像这样:

Performance is decent: my 2019 iPod uses 10-14% CPU and 38 MB of memory.