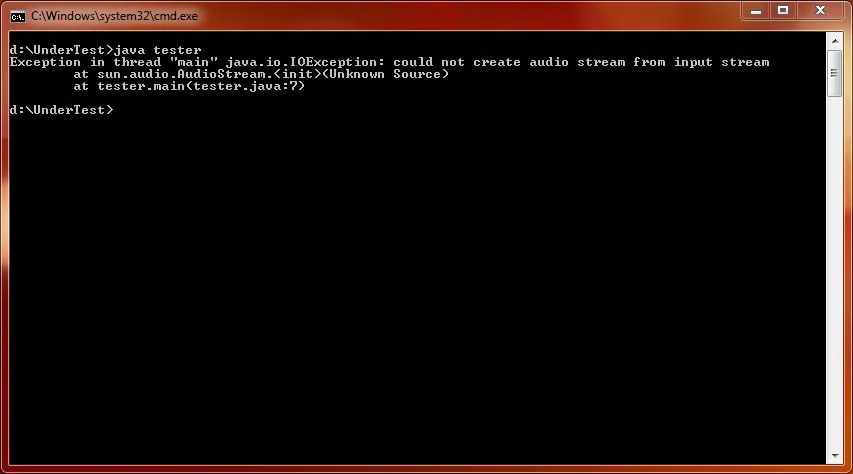

As mentioned above, Java Sound does not support MP3 by default. To see which types a particular JRE supports, check `AudioSystem.getAudioFileTypes()`.
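A minimal sketch of that check, which prints whatever the running JRE reports. The class and method names (`ListAudioTypes`, `supportedExtensions`) are made up for illustration; only `AudioSystem.getAudioFileTypes()` comes from the answer. Note that the Javadoc describes this call as listing the types the system can write, so treat the output as a quick indicator of which providers are installed rather than a definitive list of readable formats.

```java
import javax.sound.sampled.AudioFileFormat;
import javax.sound.sampled.AudioSystem;
import java.util.ArrayList;
import java.util.List;

public class ListAudioTypes {

    /** Returns the file extensions of the audio file types this JRE reports. */
    static List<String> supportedExtensions() {
        List<String> extensions = new ArrayList<>();
        for (AudioFileFormat.Type type : AudioSystem.getAudioFileTypes()) {
            extensions.add(type.getExtension());
        }
        return extensions;
    }

    public static void main(String[] args) {
        // Prints one line per reported type; the result depends on the JRE
        // and on any service providers found on the class path.
        for (AudioFileFormat.Type type : AudioSystem.getAudioFileTypes()) {
            System.out.println(type + " (." + type.getExtension() + ")");
        }
    }
}
```

On a stock JRE the list will normally not include MP3.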

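One way to add support for reading MP3 is to add the JMF-based `mp3plugin.jar` [1] to the application's runtime class path.

[1] That link is known to be less than entirely reliable. The Jar is also available on my software share drive.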
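Once a decoder is on the class path, reading an MP3 should go through the ordinary Java Sound calls. The following is a sketch under that assumption: `Mp3Probe`, `pcmFormatFor` and `openAsPcm` are hypothetical names, and whether the conversion to 16-bit PCM succeeds depends on the installed provider (it throws `IllegalArgumentException` if no converter is available).

```java
import javax.sound.sampled.AudioFormat;
import javax.sound.sampled.AudioInputStream;
import javax.sound.sampled.AudioSystem;
import javax.sound.sampled.UnsupportedAudioFileException;
import java.io.File;
import java.io.IOException;

public class Mp3Probe {

    /** Builds a 16-bit signed little-endian PCM format with the source's rate and channels. */
    static AudioFormat pcmFormatFor(AudioFormat source) {
        int channels = source.getChannels();
        return new AudioFormat(
                AudioFormat.Encoding.PCM_SIGNED,
                source.getSampleRate(),
                16,                      // bits per sample
                channels,
                channels * 2,            // frame size in bytes
                source.getSampleRate(),  // for PCM, frame rate equals sample rate
                false);                  // little-endian
    }

    /** Opens a file and asks Java Sound to decode it to PCM. */
    static AudioInputStream openAsPcm(File file)
            throws UnsupportedAudioFileException, IOException {
        AudioInputStream encoded = AudioSystem.getAudioInputStream(file);
        return AudioSystem.getAudioInputStream(pcmFormatFor(encoded.getFormat()), encoded);
    }

    public static void main(String[] args) throws Exception {
        File file = new File(args[0]); // path to an audio file, for example an MP3
        try (AudioInputStream pcm = openAsPcm(file)) {
            System.out.println("Decoded format: " + pcm.getFormat());
        } catch (UnsupportedAudioFileException e) {
            System.out.println("No provider on the class path can read " + file);
        }
    }
}
```

Without the plugin, the `UnsupportedAudioFileException` branch is what you would expect to see for an MP3 file.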

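As for your actual question: while a `javax.sound.sampled.Clip` seems well suited to this kind of task, it can unfortunately only hold one second of stereo, 16-bit, 44.1 kHz audio. That is why I developed `BigClip`. (If you fix its looping problem, please report back.)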
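To put numbers on that, here is a small sketch of the arithmetic: how many bytes a given duration of uncompressed audio occupies. `PcmSize` and `bytesFor` are illustrative names, not part of `BigClip`.

```java
import javax.sound.sampled.AudioFormat;

public class PcmSize {

    /** Bytes needed to hold the given number of seconds of audio in this format. */
    static long bytesFor(AudioFormat format, double seconds) {
        long frames = Math.round(format.getFrameRate() * seconds);
        return frames * format.getFrameSize();
    }

    public static void main(String[] args) {
        // Stereo, 16-bit, 44.1 kHz, signed, little-endian: 4 bytes per frame.
        AudioFormat cd = new AudioFormat(44100f, 16, 2, true, false);
        System.out.println("1 second:  " + bytesFor(cd, 1) + " bytes");
        System.out.println("3 minutes: " + bytesFor(cd, 180) + " bytes");
    }
}
```

One second comes to 176,400 bytes and a three-minute track to 31,752,000 bytes, which is the amount of data `BigClip` keeps in its `audioData` array for a track of that length.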

package org.pscode.xui.sound.bigclip;

import java.awt.Component;

import javax.swing.*;

import javax.sound.sampled.*;

import java.io.*;

import java.util.logging.*;

import java.util.Arrays;

public class BigClip implements Clip, LineListener {

private SourceDataLine dataLine;

private byte[] audioData;

private ByteArrayInputStream inputStream;

private int loopCount;

private int countDown;

private int loopPointStart;

private int loopPointEnd;

private int framePosition;

private Thread thread;

private boolean active;

private long timelastPositionSet;

private int bufferUpdateFactor = 2;

Component parent = null;

private Logger logger = Logger.getAnonymousLogger();

public BigClip() {}

public BigClip(Clip clip) throws LineUnavailableException {

dataLine = AudioSystem.getSourceDataLine( clip.getFormat() );

}

public byte[] getAudioData() {

return audioData;

}

public void setParentComponent(Component parent) {

this.parent = parent;

}

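private long convertFramesToMilliseconds(int frames) {

// Multiply before dividing so the result is not truncated to whole seconds.

return (long)(frames*1000.0/dataLine.getFormat().getSampleRate());

}

private int convertMillisecondsToFrames(long milliseconds) {

// frames = seconds * sample rate

return (int)(milliseconds*(double)dataLine.getFormat().getSampleRate()/1000);

}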

@Override

public void update(LineEvent le) {

logger.log(Level.FINEST, "update: " + le );

}

@Override

public void loop(int count) {

logger.log(Level.FINEST, "loop(" + count + ") - framePosition: " + framePosition);

loopCount = count;

countDown = count;

active = true;

inputStream.reset();

start();

}

@Override

public void setLoopPoints(int start, int end) {

if (

start<0 ||

start>audioData.length-1 ||

end<0 ||

end>audioData.length

) {

throw new IllegalArgumentException(

"Loop points '" +

start +

"' and '" +

end +

"' cannot be set for buffer of size " +

audioData.length);

}

if (start>end) {

throw new IllegalArgumentException(

"End position " +

end +

" precedes start position " + start);

}

loopPointStart = start;

framePosition = loopPointStart;

loopPointEnd = end;

}

@Override

public void setMicrosecondPosition(long microseconds) {

// The Clip API works in microseconds; the helper methods work in milliseconds.

framePosition = convertMillisecondsToFrames(microseconds/1000);

}

@Override

public long getMicrosecondPosition() {

return convertFramesToMilliseconds(getFramePosition())*1000;

}

@Override

public long getMicrosecondLength() {

return convertFramesToMilliseconds(getFrameLength())*1000;

}

@Override

public void setFramePosition(int frames) {

framePosition = frames;

int offset = framePosition*format.getFrameSize();

try {

inputStream.reset();

inputStream.skip(offset); // seek without allocating a throwaway array

} catch(Exception e) {

e.printStackTrace();

}

}

@Override

public int getFramePosition() {

long timeSinceLastPositionSet = System.currentTimeMillis() - timelastPositionSet;

int size = dataLine.getBufferSize()*(format.getChannels()/2)/bufferUpdateFactor;

int framesSinceLast = (int)((timeSinceLastPositionSet/1000f)*

dataLine.getFormat().getFrameRate());

int framesRemainingTillTime = size - framesSinceLast;

return framePosition

- framesRemainingTillTime;

}

@Override

public int getFrameLength() {

return audioData.length/format.getFrameSize();

}

AudioFormat format;

@Override

public void open(AudioInputStream stream) throws

IOException,

LineUnavailableException {

AudioInputStream is1;

format = stream.getFormat();

if (format.getEncoding()!=AudioFormat.Encoding.PCM_SIGNED) {

is1 = AudioSystem.getAudioInputStream(

AudioFormat.Encoding.PCM_SIGNED, stream );

} else {

is1 = stream;

}

format = is1.getFormat();

InputStream is2;

if (parent!=null) {

ProgressMonitorInputStream pmis = new ProgressMonitorInputStream(

parent,

"Loading track..",

is1);

pmis.getProgressMonitor().setMillisToPopup(0);

is2 = pmis;

} else {

is2 = is1;

}

byte[] buf = new byte[ 1<<16 ]; // 64 KiB read buffer

int totalRead = 0;

int numRead;

ByteArrayOutputStream baos = new ByteArrayOutputStream();

// Count each chunk as it is written, so totalRead ends up equal to the data size.

while ((numRead = is2.read( buf, 0, buf.length ))>-1) {

baos.write( buf, 0, numRead );

totalRead += numRead;

}

is2.close();

audioData = baos.toByteArray();

AudioFormat afTemp;

if (format.getChannels()<2) {

afTemp = new AudioFormat(

format.getEncoding(),

format.getSampleRate(),

format.getSampleSizeInBits(),

2,

format.getSampleSizeInBits()*2/8,

format.getFrameRate(),

format.isBigEndian()

);

} else {

afTemp = format;

}

// Loop points are measured in frames (as in Clip), not bytes.

setLoopPoints(0, getFrameLength());

dataLine = AudioSystem.getSourceDataLine(afTemp);

dataLine.open();

inputStream = new ByteArrayInputStream( audioData );

}

@Override

public void open(AudioFormat format,

byte[] data,

int offset,

int bufferSize)

throws LineUnavailableException {

// Per the Clip contract, data holds raw audio in the given format (no file header),

// so wrap it directly instead of asking AudioSystem to parse a header.

ByteArrayInputStream rawStream = new ByteArrayInputStream(data, offset, bufferSize);

try {

AudioInputStream ais = new AudioInputStream(

rawStream, format, bufferSize/format.getFrameSize());

open(ais);

} catch( IOException ioe ) {

throw new IllegalArgumentException(ioe);

}

}

@Override

public float getLevel() {

return dataLine.getLevel();

}

@Override

public long getLongFramePosition() {

return dataLine.getLongFramePosition()*2/format.getChannels();

}

@Override

public int available() {

return dataLine.available();

}

@Override

public int getBufferSize() {

return dataLine.getBufferSize();

}

@Override

public AudioFormat getFormat() {

return format;

}

@Override

public boolean isActive() {

return dataLine.isActive();

}

@Override

public boolean isRunning() {

return dataLine.isRunning();

}

@Override

public boolean isOpen() {

return dataLine.isOpen();

}

@Override

public void stop() {

logger.log(Level.FINEST, "BigClip.stop()");

active = false;

dataLine.stop();

if (thread!=null) {

try {

active = false;

thread.join();

} catch(InterruptedException wakeAndContinue) {

}

}

}

public byte[] convertMonoToStereo(byte[] data, int bytesRead) {

byte[] tempData = new byte[bytesRead*2];

if (format.getSampleSizeInBits()==8) {

for(int ii=0; ii<bytesRead; ii++) {

byte b = data[ii];

tempData[ii*2] = b;

tempData[ii*2+1] = b;

}

} else {

for(int ii=0; ii<bytesRead-1; ii+=2) {

byte b1 = data[ii];

byte b2 = data[ii+1];

tempData[ii*2] = b1;

tempData[ii*2+1] = b2;

tempData[ii*2+2] = b1;

tempData[ii*2+3] = b2;

}

}

return tempData;

}

boolean fastForward;

boolean fastRewind;

public void setFastForward(boolean fastForward) {

logger.log(Level.FINEST, "FastForward " + fastForward);

this.fastForward = fastForward;

fastRewind = false;

flush();

}

public boolean getFastForward() {

return fastForward;

}

public void setFastRewind(boolean fastRewind) {

logger.log(Level.FINEST, "FastRewind " + fastRewind);

this.fastRewind = fastRewind;

fastForward = false;

flush();

}

public boolean getFastRewind() {

return fastRewind;

}

@Override

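public void start() {

// A bare start() plays the data once; loop() sets its own count before calling start().

active = true;

if (loopCount!=Clip.LOOP_CONTINUOUSLY && countDown<1) {

countDown = 1;

}

Runnable r = new Runnable() {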

public void run() {

try {

dataLine.open();

dataLine.start();

int bytesRead = 0;

int frameSize = dataLine.getFormat().getFrameSize();

int bufSize = dataLine.getBufferSize();

boolean startOrMove = true;

byte[] data = new byte[bufSize];

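// audioData is stored in the source format, so seek using the source frame size.

int offset = framePosition*format.getFrameSize();

int totalBytes = offset;

// Seek from the start of the data; the stream may be part-way through after stop().

inputStream.reset();

inputStream.skip(offset);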

logger.log(Level.FINEST, "loopCount " + loopCount );

while ((bytesRead = inputStream.read(data,0,data.length))

!= -1 &&

(loopCount==Clip.LOOP_CONTINUOUSLY ||

countDown>0) &&

active ) {

logger.log(Level.FINEST,

"BigClip.start() loop " + framePosition );

totalBytes += bytesRead;

int framesRead;

byte[] tempData;

if (format.getChannels()<2) {

tempData = convertMonoToStereo(data, bytesRead);

framesRead = bytesRead/

format.getFrameSize();

bytesRead*=2;

} else {

framesRead = bytesRead/

dataLine.getFormat().getFrameSize();

tempData = Arrays.copyOfRange(data, 0, bytesRead);

}

framePosition += framesRead;

if (framePosition>=loopPointEnd) {

framePosition = loopPointStart;

inputStream.reset();

countDown--;

logger.log(Level.FINEST,

"Loop Count: " + countDown );

}

timelastPositionSet = System.currentTimeMillis();

byte[] newData;

if (fastForward) {

newData = getEveryNthFrame(tempData, 2);

} else if (fastRewind) {

byte[] temp = getEveryNthFrame(tempData, 2);

newData = reverseFrames(temp);

inputStream.reset();

totalBytes -= 2*bytesRead;

framePosition -= 2*framesRead;

if (totalBytes<0) {

setFastRewind(false);

totalBytes = 0;

}

inputStream.skip(totalBytes);

logger.log(Level.INFO, "totalBytes " + totalBytes);

} else {

newData = tempData;

}

dataLine.write(newData, 0, newData.length);

if (startOrMove) {

data = new byte[bufSize/

bufferUpdateFactor];

startOrMove = false;

}

}

logger.log(Level.FINEST,

"BigClip.start() loop ENDED" + framePosition );

active = false;

dataLine.drain();

dataLine.stop();

dataLine.close();

} catch (LineUnavailableException lue) {

logger.log( Level.SEVERE,

"No sound line available!", lue );

if (parent!=null) {

JOptionPane.showMessageDialog(

parent,

"Clear the sound lines to proceed",

"No audio lines available!",

JOptionPane.ERROR_MESSAGE);

}

}

}

};

thread= new Thread(r);

thread.setDaemon(true);

thread.start();

}

public byte[] reverseFrames(byte[] data) {

byte[] reversed = new byte[data.length];

byte[] frame = new byte[4];

for (int ii=0; ii<data.length/4; ii++) {

int first = (data.length)-((ii+1)*4)+0;

int last = (data.length)-((ii+1)*4)+3;

frame[0] = data[first];

frame[1] = data[(data.length)-((ii+1)*4)+1];

frame[2] = data[(data.length)-((ii+1)*4)+2];

frame[3] = data[last];

reversed[ii*4+0] = frame[0];

reversed[ii*4+1] = frame[1];

reversed[ii*4+2] = frame[2];

reversed[ii*4+3] = frame[3];

if (ii<5 || ii>(data.length/4)-5) {

logger.log(Level.FINER, "From \t" + first + " \tlast " + last );

logger.log(Level.FINER, "To \t" + ((ii*4)+0) + " \tlast " + ((ii*4)+3) );

}

}

return reversed;

}

public byte[] getEveryNthFrame(byte[] data, int skip) {

int length = data.length/skip;

length = (length/4)*4;

logger.log(Level.FINEST, "length " + data.length + " \t" + length);

byte[] b = new byte[length];

for (int ii=0; ii<b.length/4; ii++) {

b[ii*4+0] = data[ii*skip*4+0];

b[ii*4+1] = data[ii*skip*4+1];

b[ii*4+2] = data[ii*skip*4+2];

b[ii*4+3] = data[ii*skip*4+3];

}

return b;

}

@Override

public void flush() {

dataLine.flush();

}

@Override

public void drain() {

dataLine.drain();

}

@Override

public void removeLineListener(LineListener listener) {

dataLine.removeLineListener(listener);

}

@Override

public void addLineListener(LineListener listener) {

dataLine.addLineListener(listener);

}

@Override

public Control getControl(Control.Type control) {

return dataLine.getControl(control);

}

@Override

public Control[] getControls() {

if (dataLine==null) {

return new Control[0];

} else {

return dataLine.getControls();

}

}

@Override

public boolean isControlSupported(Control.Type control) {

return dataLine.isControlSupported(control);

}

@Override

public void close() {

dataLine.close();

}

@Override

public void open() throws LineUnavailableException {

throw new IllegalArgumentException("illegal call to open() in interface Clip");

}

@Override

public Line.Info getLineInfo() {

return dataLine.getLineInfo();

}

public double getLargestSampleSize() {

int largest = 0;

int current;

boolean signed = (format.getEncoding()==AudioFormat.Encoding.PCM_SIGNED);

int bitDepth = format.getSampleSizeInBits();

boolean bigEndian = format.isBigEndian();

int samples = audioData.length*8/bitDepth;

if (signed) {

if (bitDepth/8==2) {

if (bigEndian) {

for (int cc = 0; cc < samples; cc++) {

current = (audioData[cc*2]*256 + (audioData[cc*2+1] & 0xFF));

if (Math.abs(current)>largest) {

largest = Math.abs(current);

}

}

} else {

for (int cc = 0; cc < samples; cc++) {

current = (audioData[cc*2+1]*256 + (audioData[cc*2] & 0xFF));

if (Math.abs(current)>largest) {

largest = Math.abs(current);

}

}

}

} else {

for (int cc = 0; cc < samples; cc++) {

current = audioData[cc]; // signed 8-bit: the byte already carries the sign

if (Math.abs(current)>largest) {

largest = Math.abs(current);

}

}

}

} else {

if (bitDepth/8==2) {

if (bigEndian) {

for (int cc = 0; cc < samples; cc++) {

current = (((audioData[cc*2] & 0xFF) << 8) | (audioData[cc*2+1] & 0xFF)) - 0x8000; // unsigned 16-bit, centred on zero

if (Math.abs(current)>largest) {

largest = Math.abs(current);

}

}

} else {

for (int cc = 0; cc < samples; cc++) {

current = (((audioData[cc*2+1] & 0xFF) << 8) | (audioData[cc*2] & 0xFF)) - 0x8000; // unsigned 16-bit, centred on zero

if (Math.abs(current)>largest) {

largest = Math.abs(current);

}

}

}

} else {

for (int cc = 0; cc < samples; cc++) {

if ( audioData[cc]>0 ) {

current = (audioData[cc] - 0x80);

if (Math.abs(current)>largest) {

largest = Math.abs(current);

}

} else {

current = (audioData[cc] + 0x80);

if (Math.abs(current)>largest) {

largest = Math.abs(current);

}

}

}

}

}

logger.log(Level.FINEST, "Max signal level: " + (double)largest/(Math.pow(2, bitDepth-1)));

return (double)largest/(Math.pow(2, bitDepth-1));

}

}
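Since `BigClip` implements `Clip`, code written against the interface should not need to know which one it gets. The sketch below illustrates that idea with the standard `AudioSystem.getClip()`; `ClipDemo`, `tone` and `play` are made-up names, the test tone is generated in memory so no file is needed, and the swap to `new BigClip()` is marked as a comment because it has not been tried here.

```java
import javax.sound.sampled.AudioFormat;
import javax.sound.sampled.AudioInputStream;
import javax.sound.sampled.AudioSystem;
import javax.sound.sampled.Clip;
import javax.sound.sampled.LineUnavailableException;
import java.io.ByteArrayInputStream;
import java.io.IOException;

public class ClipDemo {

    /** Generates a mono, 16-bit, 44.1 kHz sine tone as an in-memory PCM stream. */
    static AudioInputStream tone(double hertz, double seconds) {
        float rate = 44100f;
        int frames = (int) Math.round(rate * seconds);
        byte[] pcm = new byte[frames * 2];
        for (int i = 0; i < frames; i++) {
            short sample = (short) (Math.sin(2 * Math.PI * hertz * i / rate)
                    * 0.5 * Short.MAX_VALUE);
            pcm[2 * i] = (byte) sample;            // low byte (little-endian)
            pcm[2 * i + 1] = (byte) (sample >> 8); // high byte
        }
        AudioFormat format = new AudioFormat(rate, 16, 1, true, false);
        return new AudioInputStream(new ByteArrayInputStream(pcm), format, frames);
    }

    /** Plays a stream on any Clip implementation. */
    static void play(Clip clip, AudioInputStream audio)
            throws LineUnavailableException, IOException, InterruptedException {
        clip.open(audio);
        clip.start();
        Thread.sleep(1500); // crude wait; a LineListener would be cleaner
        clip.close();
    }

    public static void main(String[] args) throws Exception {
        Clip clip = AudioSystem.getClip(); // assumption: could be swapped for new BigClip()
        play(clip, tone(440, 1.0));
    }
}
```

Keeping the variable typed as `Clip` is the design choice that makes the replacement a one-line change.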

`BigClip` is largely based on the J2SE `Clip`. Search for code that uses `Clip` and you should be able to swap in `BigClip` easily. - Andrew Thompson

… `MediaPlayer` to play MP3. - Andrew Thompson