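I am new to Scala and Spark, and I am having trouble getting things to run smoothly in IntelliJ. At the moment I cannot run the code below. I am sure it is something simple, but I cannot get it to work.

I am trying to run: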

import org.apache.spark.{SparkConf, SparkContext}

object TestScala {
  def main(args: Array[String]): Unit = {
    val conf = new SparkConf()
    conf.setAppName("Datasets Test")
    conf.setMaster("local[2]")
    val sc = new SparkContext(conf)
    println(sc)
  }
}

The error I get is:

Exception in thread "main" java.lang.NoSuchMethodError: scala.Predef$.refArrayOps([Ljava/lang/Object;)Lscala/collection/mutable/ArrayOps;
    at org.apache.spark.util.Utils$.getCallSite(Utils.scala:1413)
    at org.apache.spark.SparkContext.<init>(SparkContext.scala:77)
    at TestScala$.main(TestScala.scala:13)
    at TestScala.main(TestScala.scala)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:498)
    at com.intellij.rt.execution.application.AppMain.main(AppMain.java:147)

My build.sbt file:

name := "sparkBook"

version := "1.0"

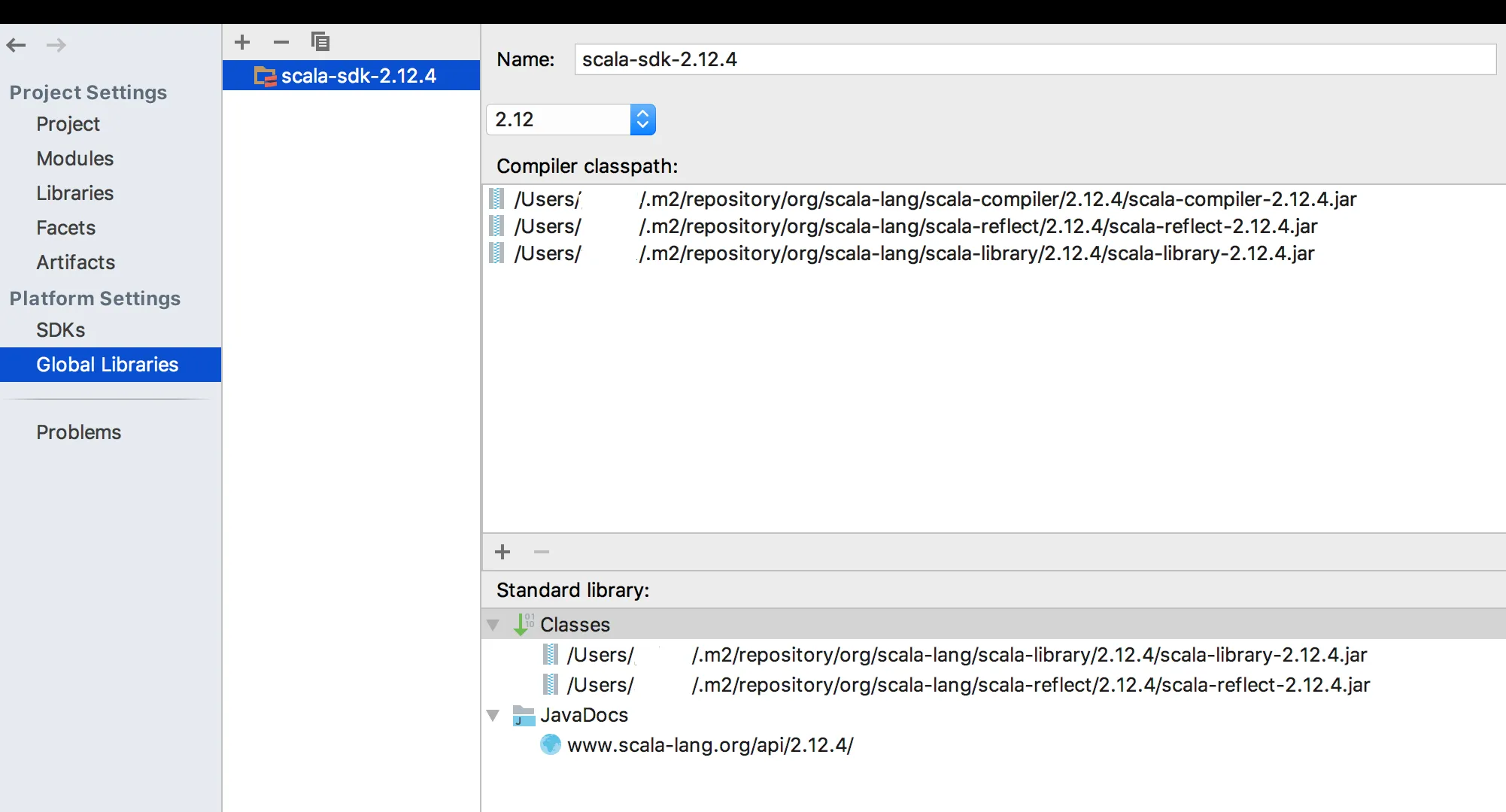

scalaVersion := "2.12.1"

The build.sbt file? - Nagarjuna Pamu

The build.sbt file does not include the Spark dependency. Why? - Nagarjuna Pamu
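
Following up on the comment above, here is a minimal sketch of what a build.sbt that declares the Spark dependency might look like. A NoSuchMethodError on scala.Predef$.refArrayOps usually points to a Scala binary version mismatch: the Spark jar on the classpath was compiled against a different Scala version than the 2.12.1 the project runs with. The Spark version (2.1.0) and Scala version (2.11.8) below are assumptions chosen for illustration, not values taken from the question; they would need to match whichever Spark build is actually in use.

```scala
// build.sbt (sketch; the version numbers are assumptions, not taken from the question)
name := "sparkBook"

version := "1.0"

// Assumed: a Scala 2.11.x release, matching a Spark build published for Scala 2.11
scalaVersion := "2.11.8"

// Assumed Spark version. The %% operator appends the Scala binary version
// (here _2.11) to the artifact name, so sbt resolves spark-core_2.11.
libraryDependencies += "org.apache.spark" %% "spark-core" % "2.1.0"
```

After changing build.sbt, the sbt project probably has to be re-imported in IntelliJ so that the IDE picks up the new Scala SDK and the Spark jars.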