Does anyone know of a tool that converts an XPath into a Jsoup selector? I got the following XPath from Chrome:

//*[@id="docs"]/div[1]/h4/a

I'd like to convert it into a Jsoup query. The path points to an element whose href I'm trying to read.

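This is easy to convert by hand.

Something like this (untested). Note that XPath positions are 1-based, so div[1] is the first div:

document.select("#docs > div:nth-of-type(1) > h4 > a").attr("href");

Documentation: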

http://jsoup.org/cookbook/extracting-data/selector-syntax
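The manual conversion above is mechanical enough to sketch in plain Java. This is only a minimal illustration, not an existing tool: the class name `XPathToCss` and its `convert` method are made up for this example, and it only handles the narrow shape Chrome produces here (a leading `//`, child steps separated by `/`, an `[@id="..."]` predicate, and numeric `[n]` positions). It maps `tag[n]` to `tag:nth-of-type(n)` because both count 1-based among siblings with the same tag name.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper for illustration; not part of Jsoup.
public class XPathToCss {
    // One step: a tag name (or *), optionally followed by [@id="..."] or [n].
    private static final Pattern STEP =
            Pattern.compile("([\\w*-]+)(?:\\[@id=[\"']([^\"']+)[\"']\\]|\\[(\\d+)\\])?");

    /** Converts a simple child-axis XPath into a CSS selector string. */
    public static String convert(String xpath) {
        // Drop the leading / or //; the resulting selector matches anywhere in the document.
        String trimmed = xpath.replaceFirst("^/+", "");
        List<String> parts = new ArrayList<>();
        for (String step : trimmed.split("/")) {
            Matcher m = STEP.matcher(step);
            if (!m.matches()) {
                throw new IllegalArgumentException("Unsupported XPath step: " + step);
            }
            String tag = m.group(1);
            if (m.group(2) != null) {
                // [@id="x"] becomes #x; the wildcard tag is dropped
                parts.add(("*".equals(tag) ? "" : tag) + "#" + m.group(2));
            } else if (m.group(3) != null) {
                // tag[n] becomes tag:nth-of-type(n), both 1-based
                parts.add(tag + ":nth-of-type(" + m.group(3) + ")");
            } else {
                parts.add(tag);
            }
        }
        return String.join(" > ", parts);
    }

    public static void main(String[] args) {
        // prints: #docs > div:nth-of-type(1) > h4 > a
        System.out.println(convert("//*[@id=\"docs\"]/div[1]/h4/a"));
    }
}
```

The returned string can be passed to `document.select(...)`. Anything outside the supported shape (attribute tests other than `@id`, `//` in the middle of the path, functions) throws rather than guessing.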

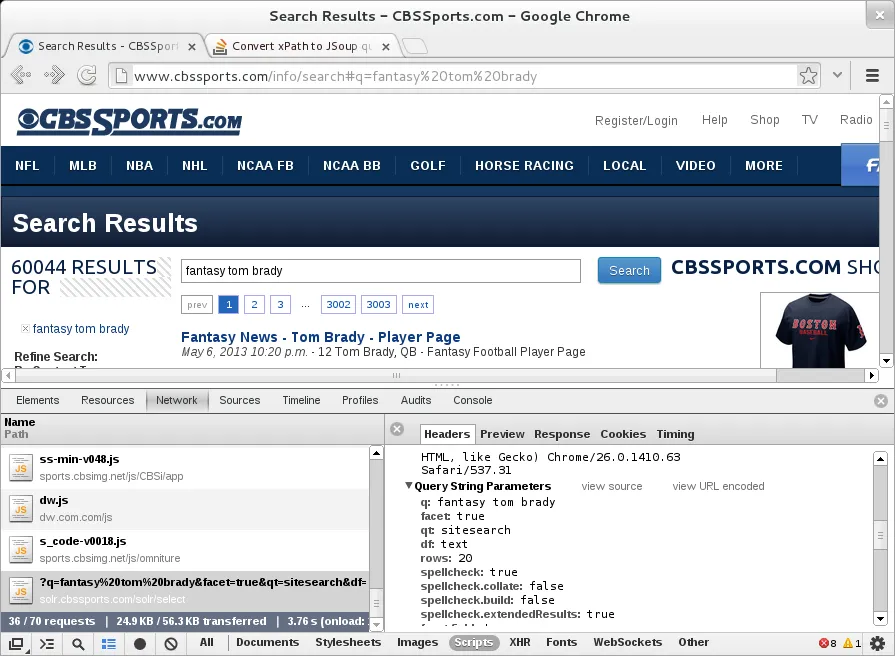

Trying to get the href of the first result here: cbssports.com/info/search#q=fantasy%20tom%20brady

Code

Elements select = Jsoup.connect("http://solr.cbssports.com/solr/select/?q=fantasy%20tom%20brady")
        .get()
        .select("response > result > doc > str[name=url]");
for (Element element : select) {
    System.out.println(element.html());
}

Result

http://fantasynews.cbssports.com/fantasyfootball/players/playerpage/187741/tom-brady

http://www.cbssports.com/nfl/players/playerpage/187741/tom-brady

http://fantasynews.cbssports.com/fantasycollegefootball/players/playerpage/1825265/brady-lisoski

http://fantasynews.cbssports.com/fantasycollegefootball/players/playerpage/1766777/blake-brady

http://fantasynews.cbssports.com/fantasycollegefootball/players/playerpage/1851211/brady-foltz

http://fantasynews.cbssports.com/fantasycollegefootball/players/playerpage/1860955/brady-earnhardt

http://fantasynews.cbssports.com/fantasycollegefootball/players/playerpage/1673397/brady-amack
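Since the Solr endpoint returns XML, the selector `response > result > doc > str[name=url]` has a direct XPath counterpart, `/response/result/doc/str[@name='url']`. The sketch below evaluates that XPath with the JDK's built-in `javax.xml.xpath` API, with no Jsoup involved. The XML in `SAMPLE` is a made-up stand-in shaped like such a response, and the class and method names are hypothetical.

```java
import java.io.StringReader;
import java.util.ArrayList;
import java.util.List;
import javax.xml.xpath.XPath;
import javax.xml.xpath.XPathConstants;
import javax.xml.xpath.XPathExpressionException;
import javax.xml.xpath.XPathFactory;
import org.w3c.dom.NodeList;
import org.xml.sax.InputSource;

public class SolrUrlXPath {
    // Hypothetical sample data; not an actual response from the service.
    public static final String SAMPLE =
        "<response><result>"
      + "<doc><str name=\"title\">first</str><str name=\"url\">http://example.com/a</str></doc>"
      + "<doc><str name=\"title\">second</str><str name=\"url\">http://example.com/b</str></doc>"
      + "</result></response>";

    /** Returns the text of every <str name="url"> element under response/result/doc. */
    public static List<String> urls(String xml) {
        try {
            XPath xpath = XPathFactory.newInstance().newXPath();
            NodeList nodes = (NodeList) xpath.evaluate(
                "/response/result/doc/str[@name='url']",
                new InputSource(new StringReader(xml)),
                XPathConstants.NODESET);
            List<String> out = new ArrayList<>();
            for (int i = 0; i < nodes.getLength(); i++) {
                out.add(nodes.item(i).getTextContent());
            }
            return out;
        } catch (XPathExpressionException e) {
            throw new IllegalStateException("XPath evaluation failed", e);
        }
    }

    public static void main(String[] args) {
        // prints: [http://example.com/a, http://example.com/b]
        System.out.println(urls(SAMPLE));
    }
}
```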

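Developer console screenshot - getting the URL

#id tag. Could you help check it? - Murtaza Haji

You don't necessarily need to convert the XPath into a Jsoup-specific selector.

Instead, you can use XSoup, which is built on Jsoup and supports XPath.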

https://github.com/code4craft/xsoup

Here is the example from the XSoup documentation.

@Test
public void testSelect() {
    String html = "<html><div><a href='https://github.com'>github.com</a></div>" +
            "<table><tr><td>a</td><td>b</td></tr></table></html>";
    Document document = Jsoup.parse(html);

    String result = Xsoup.compile("//a/@href").evaluate(document).get();
    Assert.assertEquals("https://github.com", result);

    List<String> list = Xsoup.compile("//tr/td/text()").evaluate(document).list();
    Assert.assertEquals("a", list.get(0));
    Assert.assertEquals("b", list.get(1));
}

Here is a standalone snippet that uses Xsoup and Jsoup:

import java.util.List;

import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;

import us.codecraft.xsoup.Xsoup;

public class TestXsoup {
    public static void main(String[] args) {
        String html = "<html><div><a href='https://github.com'>github.com</a></div>" +
                "<table><tr><td>a</td><td>b</td></tr></table></html>";
        Document document = Jsoup.parse(html);

        List<String> filasFiltradas = Xsoup.compile("//tr/td/text()").evaluate(document).list();
        System.out.println(filasFiltradas);
    }
}

Output:

[a, b]

Libraries included:

xsoup-0.3.1.jar, jsoup-1.10.3.jar

I have tested the following XPath and Jsoup pairs, and they work.

Example 1:

[XPath]

//*[@id="docs"]/div[1]/h4/a

[JSoup]

document.select("#docs > div > h4 > a").attr("href");

Example 2:

[XPath]

//*[@id="action-bar-container"]/div/div[2]/a[2]

[JSoup]

document.select("#action-bar-container > div > div:eq(1) > a:eq(1)").attr("href");
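One caveat when mapping positions by hand: XPath's `div[n]` counts 1-based among sibling `div` elements only, while Jsoup's `:eq(n)` is 0-based and, as far as I understand its selector documentation, counts all element siblings. So `div[2]` and `div:eq(1)` agree when every preceding sibling is also a `div`, and `div:nth-of-type(2)` is the safer equivalent otherwise. The sketch below shows the XPath side of this using the JDK's own XPath engine; the tiny XML document and the class name are made up for the illustration.

```java
import java.io.StringReader;
import javax.xml.xpath.XPathExpressionException;
import javax.xml.xpath.XPathFactory;
import org.xml.sax.InputSource;

public class XPathPositionDemo {
    // Hypothetical document: a <p> followed by two <div> siblings.
    public static final String SAMPLE = "<c><p id='p0'/><div id='d1'/><div id='d2'/></c>";

    /** Evaluates the expression against SAMPLE and returns the result as a string. */
    public static String idAt(String expression) {
        try {
            return XPathFactory.newInstance().newXPath()
                    .evaluate(expression, new InputSource(new StringReader(SAMPLE)));
        } catch (XPathExpressionException e) {
            throw new IllegalArgumentException("Bad XPath: " + expression, e);
        }
    }

    public static void main(String[] args) {
        System.out.println(idAt("/c/div[1]/@id")); // d1: first div, although it is the second child
        System.out.println(idAt("/c/div[2]/@id")); // d2: second div
        System.out.println(idAt("/c/*[1]/@id"));   // p0: first child of any name
    }
}
```

Here `div[1]` selects `d1` even though a `<p>` comes first, which is the case where a sibling-index selector such as `:eq(0)` would look at a different element.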

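File downloadedPage = new File("/path/to/your/page.html");
// Inner double quotes must be escaped inside a Java string literal
String xPathSelector = "//*[@id=\"docs\"]/div[1]/h4/a";
Document document = Jsoup.parse(downloadedPage, "UTF-8");
// selectXpath is available in Jsoup 1.14.3 and later
Elements elements = document.selectXpath(xPathSelector);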

You can then iterate over the returned elements!

It depends on what you want.

Document doc = Jsoup.connect(googleURL).get(); // fetch and parse the page at googleURL
doc.select("cite");      // all the <cite> elements in the page
doc.select("li > cite"); // only <cite> elements that are direct children of an <li>
doc.select("li.g cite"); // only <cite> elements anywhere under an <li class="g">

public static void main(String[] args) throws IOException {
    String html = getHTML();
    Document doc = Jsoup.parse(html);
    Elements elems = doc.select("li.g > cite");
    for (Element elem : elems) {
        System.out.println(elem.toString());
    }
}