在查找图像之间的差异/相似性之前,不要使用像素化来处理图像,只需使用cv2.GaussianBlur()方法对它们进行一些模糊处理,然后使用cv2.matchTemplate()方法来查找它们之间的相似性:

import cv2

import numpy as np

def process(img):

img_gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

return cv2.GaussianBlur(img_gray, (43, 43), 21)

def confidence(img1, img2):

res = cv2.matchTemplate(process(img1), process(img2), cv2.TM_CCOEFF_NORMED)

return res.max()

img1s = list(map(cv2.imread, ["img1_1.jpg", "img1_2.jpg", "img1_3.jpg"]))

img2s = list(map(cv2.imread, ["img2_1.jpg", "img2_2.jpg", "img2_3.jpg"]))

for img1, img2 in zip(img1s, img2s):

conf = confidence(img1, img2)

print(f"Confidence: {round(conf * 100, 2)}%")

输出:

Confidence: 83.6%

Confidence: 84.62%

Confidence: 87.24%

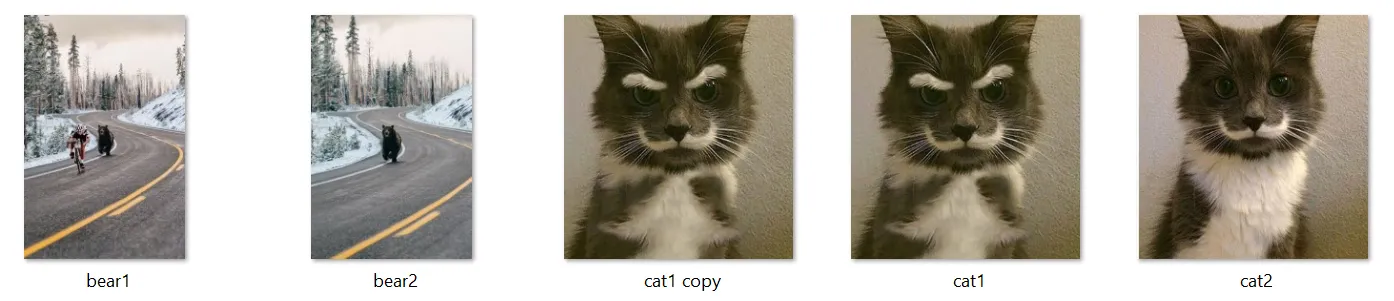

以下是上述程序中使用的图像:

img1_1.jpg和img2_1.jpg:

img1_2.jpg和img2_2.jpg:

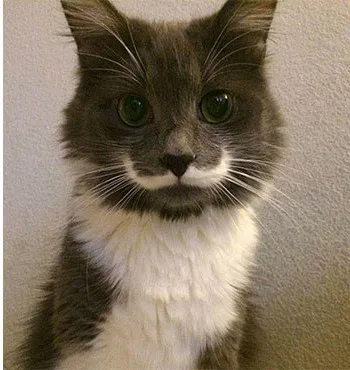

img1_3.jpg和img2_3.jpg:

为证明模糊操作不会产生过多的假阳性,我运行了这个程序:

import cv2

import numpy as np

def process(img):

h, w, _ = img.shape

img = cv2.resize(img, (350, h * w // 350))

img_gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

return cv2.GaussianBlur(img_gray, (43, 43), 21)

def confidence(img1, img2):

res = cv2.matchTemplate(process(img1), process(img2), cv2.TM_CCOEFF_NORMED)

return res.max()

img1s = list(map(cv2.imread, ["img1_1.jpg", "img1_2.jpg", "img1_3.jpg"]))

img2s = list(map(cv2.imread, ["img2_1.jpg", "img2_2.jpg", "img2_3.jpg"]))

for i, img1 in enumerate(img1s, 1):

for j, img2 in enumerate(img2s, 1):

conf = confidence(img1, img2)

print(f"img1_{i} img2_{j} Confidence: {round(conf * 100, 2)}%")

输出:

img1_1 img2_1 Confidence: 84.2%

img1_1 img2_2 Confidence: -10.86%

img1_1 img2_3 Confidence: 16.11%

img1_2 img2_1 Confidence: -2.5%

img1_2 img2_2 Confidence: 84.61%

img1_2 img2_3 Confidence: 43.91%

img1_3 img2_1 Confidence: 14.49%

img1_3 img2_2 Confidence: 59.15%

img1_3 img2_3 Confidence: 87.25%

注意只有当将图像与其对应的图像匹配时,程序才会输出高置信度水平(84%以上)。

为了比较,这里是未经过模糊处理的结果:

import cv2

import numpy as np

def process(img):

h, w, _ = img.shape

img = cv2.resize(img, (350, h * w // 350))

return cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

def confidence(img1, img2):

res = cv2.matchTemplate(process(img1), process(img2), cv2.TM_CCOEFF_NORMED)

return res.max()

img1s = list(map(cv2.imread, ["img1_1.jpg", "img1_2.jpg", "img1_3.jpg"]))

img2s = list(map(cv2.imread, ["img2_1.jpg", "img2_2.jpg", "img2_3.jpg"]))

for i, img1 in enumerate(img1s, 1):

for j, img2 in enumerate(img2s, 1):

conf = confidence(img1, img2)

print(f"img1_{i} img2_{j} Confidence: {round(conf * 100, 2)}%")

输出:

img1_1 img2_1 Confidence: 66.73%

img1_1 img2_2 Confidence: -6.97%

img1_1 img2_3 Confidence: 11.01%

img1_2 img2_1 Confidence: 0.31%

img1_2 img2_2 Confidence: 65.33%

img1_2 img2_3 Confidence: 31.8%

img1_3 img2_1 Confidence: 9.57%

img1_3 img2_2 Confidence: 39.74%

img1_3 img2_3 Confidence: 61.16%