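I'm trying to download multiple files from a website and save them into a folder. I'm after highway data: the site (http://www.wsdot.wa.gov/mapsdata/tools/InterchangeViewer/SR5.htm) has a series of PDF links. I want to write code that pulls down the many PDFs found on their site, perhaps a loop that goes through the site, extracts each file and saves it to a local folder on my desktop. Does anyone know how I can do this?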

3 answers

3

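This is a problem you'll need to solve with code. I can point you to some tools that will get you there, but I can't give you a complete coded solution.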

requests: for talking to HTTP servers (websites)

http://docs.python-requests.org/en/latest/

BeautifulSoup: an HTML parser (for parsing a website's source code)

http://www.crummy.com/software/BeautifulSoup/bs4/doc/
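For the requests half of the job, here is a minimal sketch of saving one remote file into a local folder, assuming you already have a direct file URL. `download_file` and `local_filename` are hypothetical helper names, not part of requests. Passing `stream=True` and reading with `iter_content` writes the PDF to disk in chunks instead of holding the whole file in memory.

```python
import os
import posixpath
from urllib.parse import unquote, urlparse

import requests


def local_filename(url, folder):
    """Hypothetical helper: build a local path from the last segment of the URL path."""
    name = unquote(posixpath.basename(urlparse(url).path)) or "download"
    return os.path.join(folder, name)


def download_file(url, folder, chunk_size=8192):
    """Hypothetical helper: stream `url` into `folder`, creating the folder if needed."""
    os.makedirs(folder, exist_ok=True)
    path = local_filename(url, folder)
    with requests.get(url, stream=True, timeout=30) as response:
        response.raise_for_status()  # stop on 4xx/5xx instead of saving an error page
        with open(path, "wb") as f:
            for chunk in response.iter_content(chunk_size=chunk_size):
                f.write(chunk)
    return path
```

Usage would be something like `download_file(pdf_url, os.path.expanduser("~/Desktop/pdfs"))`, where the folder name is only an example.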

Example:

>>> import requests
>>> from bs4 import BeautifulSoup as BS
>>>
>>> response = requests.get('http://news.ycombinator.com')
>>> response.status_code  # 200 == OK
200
>>>
>>> soup = BS(response.text)  # create an HTML parsing object
>>>
>>> soup.title  # here's the page's <title> tag
<title>Hacker News</title>
>>>
>>> soup.title.text  # the contents of the tag
u'Hacker News'
>>>
>>> # Here are some article posts
...
>>> post_containers = soup.find_all('tr', attrs={'class': 'athing'})
>>>
>>> print('There are %d article posts.' % len(post_containers))
There are 30 article posts.
>>>
>>>
>>> # The article title is the 3rd and last element in a post container
...
>>> for container in post_containers:
...     title = container.contents[-1]  # the last tag
...     title.a.text  # grab the `a` tag inside the title cell and show its text
...
u'Show HN: \u201cWho is hiring?\u201d Map'
u'\u2018Flash Boys\u2019 Programmer in Goldman Case Prevails Second Time'
u'Forthcoming OpenSSL releases'
u'Show HN: YouTube Filesystem \u2013 YTFS'
u'Google launches Uber rival RideWith'
u'Finish your stuff'
u'The Plan to Feed the World by Hacking Photosynthesis'
u'New electric engine improves safety of light aircraft'
u'Hacking Team hacked, attackers claim 400GB in dumped data'
u'Show HN: Proof of concept \u2013 Realtime single page apps'
u'Berkeley CS 61AS \u2013 Structure and Interpretation of Computer Programs, Self-Paced'
u'An evaluation of Erlang global process registries: meet Syn'
u'Show HN: Nearby Buzz \u2013\xa0Take control of your online reviews'
u"The Grateful Dead's Wall of Sound"
u'The Effects of Intermittent Fasting on Human and Animal Health'
u'JsCoq'
u'Taking stock of startup innovation in the Netherlands'
u'Hangout: Becoming a freelance developer'
u'Panning for Pangrams: The Search for the New Quick Brown Fox'
u'Show HN: MUI \u2013 Lightweight CSS Framework for Material Design'
u"Intel's 10nm 'Cannonlake' delayed, replaced by 14nm 'Kaby Lake'"
u'VP of Logistics \u2013 EasyPost (YC S13) Hiring'
u'Colorado\u2019s Effort Against Teenage Pregnancies Is a Startling Success'
u'Lexical Scanning in Go (2011)'
u'Avoiding traps in software development with systems thinking'
u"Apache Cordova: after 10 months, I won't using it anymore"
u'An exercise in profiling a Go program'
u"The Science of Pixar's \u2018Inside Out\u2019"
u'Ask HN: What tech blogs, podcasts do you follow outside of HN?'
u'NASA\u2019s New Horizons Plans July 7 Return to Normal Science Operations'
>>>
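Applied to the page from the question, the same two libraries might look roughly like this. It is a sketch that assumes the PDFs are reachable through plain `href` links on that page (I haven't checked its markup; `<area>` tags are included in case it uses an image map). `pdf_links`, `save_all_pdfs` and the Desktop folder name are made up for illustration.

```python
import os
from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup

PAGE_URL = "http://www.wsdot.wa.gov/mapsdata/tools/InterchangeViewer/SR5.htm"


def pdf_links(html, base_url):
    """Return absolute, de-duplicated URLs of links on the page that end in .pdf."""
    soup = BeautifulSoup(html, "html.parser")
    links = []
    # <a> covers normal links; <area> covers image-map links, if the page has any
    for tag in soup.find_all(["a", "area"], href=True):
        url = urljoin(base_url, tag["href"])  # resolve relative links against the page URL
        if url.lower().endswith(".pdf") and url not in links:
            links.append(url)
    return links


def save_all_pdfs(page_url, folder):
    """Fetch the page, then download every PDF it links to into `folder`."""
    os.makedirs(folder, exist_ok=True)
    page = requests.get(page_url, timeout=30)
    page.raise_for_status()
    for url in pdf_links(page.text, page_url):
        path = os.path.join(folder, url.rsplit("/", 1)[-1])  # keep the server's file name
        response = requests.get(url, timeout=60)
        response.raise_for_status()
        with open(path, "wb") as f:
            f.write(response.content)
        print("saved", path)
```

Calling `save_all_pdfs(PAGE_URL, os.path.expanduser("~/Desktop/SR5_pdfs"))` would then fill that (hypothetical) folder. Keeping link extraction in its own function makes it easy to print the list first and check it before downloading anything.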

- Brandon Nadeau

0

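A Python solution is to download the PDF files with urllib. See the question "Download pdf using urllib?".

To get the list of PDFs to download, use the xml module (xml.etree.ElementTree).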

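import os
import urllib.request
import xml.etree.ElementTree as ET
from urllib.parse import urljoin

url = 'http://www.wsdot.wa.gov/mapsdata/tools/InterchangeViewer/SR5.htm'
website = urllib.request.urlopen(url).read()
# ET only accepts well-formed XML without an XHTML namespace; typical HTML raises ET.ParseError here
root = ET.fromstring(website)
# iter() walks the whole tree; findall('table') would only search direct children
for a in root.iter('a'):
    href = a.get('href', '')
    if href.lower().endswith('.pdf'):
        # save each PDF into the current directory under its own file name
        urllib.request.urlretrieve(urljoin(url, href), os.path.basename(href))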
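`ET.fromstring` only accepts well-formed XML, so on ordinary HTML (unclosed `<td>` or `<br>` tags, unquoted attributes) it raises `ParseError`. A swapped-in alternative that stays within the standard library is `html.parser.HTMLParser`, which tolerates such markup. A sketch, with `PdfLinkParser`, `find_pdfs` and `download_all` as hypothetical names:

```python
import os
import urllib.request
from html.parser import HTMLParser
from urllib.parse import urljoin


class PdfLinkParser(HTMLParser):
    """Collect absolute .pdf URLs from <a href=...> tags (tag/attr names arrive lowercased)."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.pdf_urls = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")  # None if missing or valueless
        if href:
            url = urljoin(self.base_url, href)
            if url.lower().endswith(".pdf") and url not in self.pdf_urls:
                self.pdf_urls.append(url)


def find_pdfs(html, base_url):
    parser = PdfLinkParser(base_url)
    parser.feed(html)
    parser.close()
    return parser.pdf_urls


def download_all(page_url, folder):
    """Fetch the page, then save every linked PDF into `folder` with urlretrieve."""
    os.makedirs(folder, exist_ok=True)
    with urllib.request.urlopen(page_url) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        html = resp.read().decode(charset, errors="replace")
    for url in find_pdfs(html, page_url):
        urllib.request.urlretrieve(url, os.path.join(folder, url.rsplit("/", 1)[-1]))
```

For example, `download_all('http://www.wsdot.wa.gov/mapsdata/tools/InterchangeViewer/SR5.htm', os.path.expanduser('~/Desktop/SR5_pdfs'))`, where the target folder is just an illustration.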

- bcdan

-5

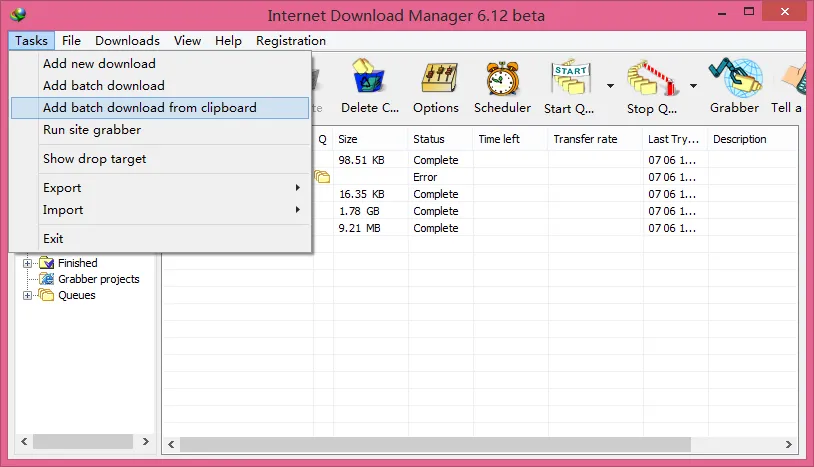

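Since your goal is to bulk-download PDF files, the simplest approach is not to write a script but to use commercial software. Internet Download Manager can do what you need in two steps:

- Copy all of the text, including the links.

- Choose Tasks > Add batch download from clipboard.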

- meelo

2

4 The question asks for a piece of code, not software like IDM. - TrigonaMinima

Meelo, is there a free internet download manager that isn't a trial version? - newGIS

Page content provided by Stack Overflow; see the original English post.
