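I know almost nothing about signal processing, and I am currently trying to implement a function in Swift that triggers an event when the sound pressure level increases (for example, when a person screams).

I tap into the input node of an AVAudioEngine with a callback like this: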

    let recordingFormat = inputNode.outputFormat(forBus: 0)

    inputNode.installTap(onBus: 0, bufferSize: 1024, format: recordingFormat) {
        (buffer: AVAudioPCMBuffer?, when: AVAudioTime) in

        let arraySize = Int(buffer.frameLength)
        let samples = Array(UnsafeBufferPointer(start: buffer.floatChannelData![0], count: arraySize))

        //do something with samples
        let volume = 20 * log10(floatArray.reduce(0){ $0 + $1} / Float(arraySize))
        if(!volume.isNaN){
            print("this is the current volume: \(volume)")
        }
    }

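After converting the buffer to a float array, I tried to get a rough estimate of the sound pressure level by computing the mean of the samples.

But this gives values that fluctuate a lot, even when the iPad is just sitting in a quiet room: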

    this is the current volume: -123.971
    this is the current volume: -119.698
    this is the current volume: -147.053
    this is the current volume: -119.749
    this is the current volume: -118.815
    this is the current volume: -123.26
    this is the current volume: -118.953
    this is the current volume: -117.273
    this is the current volume: -116.869
    this is the current volume: -110.633
    this is the current volume: -130.988
    this is the current volume: -119.475
    this is the current volume: -116.422
    this is the current volume: -158.268
    this is the current volume: -118.933

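The value does increase significantly if I clap near the microphone.

So I can first compute the mean of these volumes during a preparing phase, and then check whether the value rises significantly above it during the event-triggering phase: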

    if(!volume.isNaN){
        if(isInThePreparingPhase){
            print("this is the current volume: \(volume)")
            volumeSum += volume
            volumeCount += 1
        }else if(isInTheEventTriggeringPhase){
            if(volume > meanVolume){
                //triggers an event
            }
        }
    }

The mean volume is computed during the transition from the preparing phase to the event-triggering phase: meanVolume = volumeSum / Float(volumeCount)
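The two-phase scheme described above can be sketched as a small value type. This is only an illustration under stated assumptions: `BaselineTrigger`, `addCalibrationLevel`, `finishCalibration`, `shouldTrigger` and the `margin` parameter are hypothetical names that do not appear in the original code, and `margin` (extra headroom in dB) is an added assumption. With `margin` set to 0 it reduces to the plain `volume > meanVolume` comparison.

```swift
/// Hypothetical helper (not part of the original code): averages level
/// readings during a preparing phase, then flags readings that exceed
/// that average by `margin`.
struct BaselineTrigger {
    private var volumeSum: Float = 0
    private var volumeCount = 0
    private(set) var meanVolume: Float?
    /// Assumed extra headroom in dB; 0 gives the plain comparison.
    let margin: Float

    init(margin: Float = 0) {
        self.margin = margin
    }

    /// Preparing phase: accumulate one reading, skipping NaN and infinity.
    mutating func addCalibrationLevel(_ volume: Float) {
        guard volume.isFinite else { return }
        volumeSum += volume
        volumeCount += 1
    }

    /// Transition: fix the baseline as volumeSum / Float(volumeCount).
    mutating func finishCalibration() {
        guard volumeCount > 0 else { return }
        meanVolume = volumeSum / Float(volumeCount)
    }

    /// Event-triggering phase: true when the reading exceeds baseline + margin.
    func shouldTrigger(_ volume: Float) -> Bool {
        guard let mean = meanVolume, volume.isFinite else { return false }
        return volume > mean + margin
    }
}
```

Filtering with `isFinite` rather than only `isNaN` also keeps a reading of negative infinity (the logarithm of zero) from corrupting the running sum.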

....

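However, there seems to be no significant increase if I play loud music beside the microphone. And on rare occasions the volume is greater than meanVolume even though the surrounding volume has not increased in any way audible to the human ear. So what is the proper way of extracting the sound pressure level from an AVAudioPCMBuffer?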

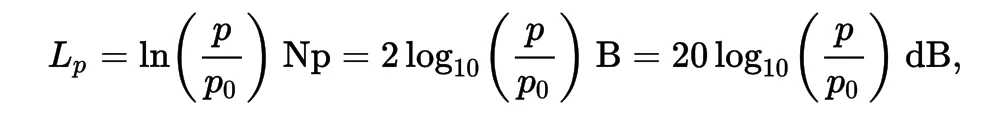

维基百科给出了以下公式:

其中p是均方根声压,p0是参考声压。
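For illustration, the usual form of that formula, L_p = 20 * log10(p / p0) dB, can be applied to a block of samples as in the sketch below. The function names `rootMeanSquare` and `levelInDecibels` are hypothetical, and the reference value of 1.0 is an assumption: it treats the samples as normalized to full scale, so the result is a level relative to digital full scale, not an absolute sound pressure level in pascals.

```swift
import Foundation

/// Root mean square of a block of samples; 0 for an empty block.
func rootMeanSquare(_ samples: [Float]) -> Float {
    guard !samples.isEmpty else { return 0 }
    let sumOfSquares = samples.reduce(Float(0)) { $0 + $1 * $1 }
    return (sumOfSquares / Float(samples.count)).squareRoot()
}

/// Level in decibels relative to `reference` (assumed 1.0, i.e. full scale).
/// Returns negative infinity for digital silence instead of NaN.
func levelInDecibels(_ samples: [Float], reference: Float = 1.0) -> Float {
    let rms = rootMeanSquare(samples)
    guard rms > 0 else { return -Float.infinity }
    return 20 * log10(rms / reference)
}
```

Squaring before averaging matters because audio samples are signed and oscillate around zero: a plain mean largely cancels out, and a negative mean makes `log10` return NaN, whereas the RMS grows with the signal's amplitude.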

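But I do not know what the float values in AVAudioPCMBuffer.floatChannelData represent. The Apple page only says:

"The buffer's audio samples as floating point values."

How should I work with them?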

What is floatArray? Here: let volume = 20 * log10(floatArray.reduce(0){ $0 + $1} / Float(arraySize)) ... - MikeMaus