I'm getting this error:

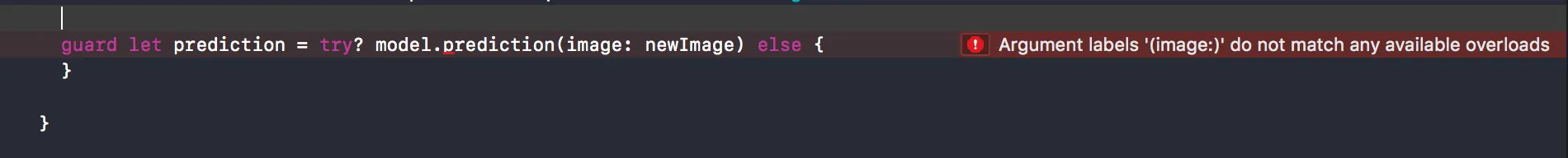

Argument labels '(image:)' do not match any available overloads

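I followed the tutorial here and Apple's documentation, but I started getting this error when I tried to accept an image from React Native. The bridge between Swift and React Native works; the error only appears once I start using CoreML.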

I think it has to do with newer Swift syntax, but I'm not sure how to fix it, and I haven't seen anyone else use CoreML from React Native.

Here is my full function:

    import Foundation
    import CoreML

    @objc(Printer)
    class Printer: NSObject {

        @objc func imageRec(_ image:CGImage) -> CVPixelBuffer? {
            let model = Inceptionv3();
            UIGraphicsBeginImageContextWithOptions(CGSize(width: 299, height: 299), true, 1.0)
            //image.draw(in: CGRect(x: 0, y: 0, width: 299, height: 299))
            let newImage = UIGraphicsGetImageFromCurrentImageContext()!
            UIGraphicsEndImageContext()

            let attrs = [kCVPixelBufferCGImageCompatibilityKey: kCFBooleanTrue, kCVPixelBufferCGBitmapContextCompatibilityKey: kCFBooleanTrue] as CFDictionary
            var pixelBuffer : CVPixelBuffer?
            let status = CVPixelBufferCreate(kCFAllocatorDefault, Int(newImage.size.width), Int(newImage.size.height), kCVPixelFormatType_32ARGB, attrs, &pixelBuffer)
            guard (status == kCVReturnSuccess) else {
                return nil
            }

            CVPixelBufferLockBaseAddress(pixelBuffer!, CVPixelBufferLockFlags(rawValue: 0))
            let pixelData = CVPixelBufferGetBaseAddress(pixelBuffer!)

            let rgbColorSpace = CGColorSpaceCreateDeviceRGB()
            let context = CGContext(data: pixelData, width: Int(newImage.size.width), height: Int(newImage.size.height), bitsPerComponent: 8, bytesPerRow: CVPixelBufferGetBytesPerRow(pixelBuffer!), space: rgbColorSpace, bitmapInfo: CGImageAlphaInfo.noneSkipFirst.rawValue)

            context?.translateBy(x: 0, y: newImage.size.height)
            context?.scaleBy(x: 1.0, y: -1.0)

            UIGraphicsPushContext(context!)
            newImage.draw(in: CGRect(x: 0, y: 0, width: newImage.size.width, height: newImage.size.height))
            UIGraphicsPopContext()
            CVPixelBufferUnlockBaseAddress(pixelBuffer!, CVPixelBufferLockFlags(rawValue: 0))

            guard let prediction = try? model.prediction(image: newImage) else {
            }
        }
    }
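
One possible direction, offered as a sketch rather than a confirmed fix: the Xcode-generated `Inceptionv3` class appears to expect a `CVPixelBuffer` for `prediction(image:)`, whereas the function above passes a `UIImage` (`newImage`), which would explain the overload mismatch. The helper name `makePixelBuffer(from:width:height:)` below is hypothetical and not part of the original code; it renders the incoming `CGImage` straight into a 299x299 buffer, assuming the same 32ARGB format used above.

```swift
import Foundation
import CoreGraphics
import CoreVideo

// Hypothetical helper (not from the original post): renders a CGImage into a
// newly created 32ARGB pixel buffer of the requested size.
func makePixelBuffer(from cgImage: CGImage, width: Int, height: Int) -> CVPixelBuffer? {
    let attrs = [
        kCVPixelBufferCGImageCompatibilityKey: kCFBooleanTrue,
        kCVPixelBufferCGBitmapContextCompatibilityKey: kCFBooleanTrue
    ] as CFDictionary

    var pixelBuffer: CVPixelBuffer?
    let status = CVPixelBufferCreate(kCFAllocatorDefault, width, height,
                                     kCVPixelFormatType_32ARGB, attrs, &pixelBuffer)
    guard status == kCVReturnSuccess, let buffer = pixelBuffer else { return nil }

    // Lock the buffer while Core Graphics writes into its memory.
    CVPixelBufferLockBaseAddress(buffer, [])
    defer { CVPixelBufferUnlockBaseAddress(buffer, []) }

    guard let context = CGContext(
        data: CVPixelBufferGetBaseAddress(buffer),
        width: width,
        height: height,
        bitsPerComponent: 8,
        bytesPerRow: CVPixelBufferGetBytesPerRow(buffer),
        space: CGColorSpaceCreateDeviceRGB(),
        bitmapInfo: CGImageAlphaInfo.noneSkipFirst.rawValue
    ) else { return nil }

    // Drawing the CGImage into the full rect scales it to the buffer size.
    context.draw(cgImage, in: CGRect(x: 0, y: 0, width: width, height: height))
    return buffer
}

// Usage sketch, assuming the generated Inceptionv3 class takes a CVPixelBuffer
// and exposes a classLabel output (both are assumptions about the model class):
//
// if let buffer = makePixelBuffer(from: image, width: 299, height: 299),
//    let output = try? Inceptionv3().prediction(image: buffer) {
//     print(output.classLabel)
// }
```

Passing the buffer rather than the `UIImage` keeps the argument label `image:` unchanged; only the argument type differs.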