Since I started using this function to blur images, I frequently receive CoreImage crash reports:

// Code exactly as in app

extension UIImage {

    func blurImage(_ radius: CGFloat) -> UIImage? {
        guard let ciImage = CIImage(image: self) else {
            return nil
        }

        let clampedImage = ciImage.clampedToExtent()

        let blurFilter = CIFilter(name: "CIGaussianBlur", parameters: [
            kCIInputImageKey: clampedImage,
            kCIInputRadiusKey: radius])

        var filterImage = blurFilter?.outputImage

        filterImage = filterImage?.cropped(to: ciImage.extent)

        guard let finalImage = filterImage else {
            return nil
        }

        return UIImage(ciImage: finalImage)
    }

}

// Code stripped down, contains more in app

class MyImage {

    var blurredImage: UIImage?

    func setBlurredImage() {
        DispatchQueue.global(qos: DispatchQoS.QoSClass.userInitiated).async {
            let blurredImage = self.getImage().blurImage(100)

            DispatchQueue.main.async {
                guard let blurredImage = blurredImage else { return }

                self.blurredImage = blurredImage
            }
        }
    }

}

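According to Crashlytics:

- The crash occurs in only a small fraction of sessions

- The crash occurs on various iOS versions, from 11.x to 12.x

- 0% of devices were in the background when the crash occurred

I have not been able to reproduce the crash. The process is as follows:

1. A MyImageView object (a subclass of UIImageView) receives a Notification

2. Sometimes (depending on other logic), a blurred version of a UIImage is created on the thread DispatchQueue.global(qos: DispatchQoS.QoSClass.userInitiated).async

3. On the main thread, the object sets the UIImage using self.image = ...

According to the crash log (UIImageView setImage), the app seems to crash after step 3. On the other hand, the CIImage frames in the crash log indicate that the problem lies in step 2, where CIFilter is used to create the blurred version of the image. Note: MyImageView is sometimes used in a UICollectionViewCell.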

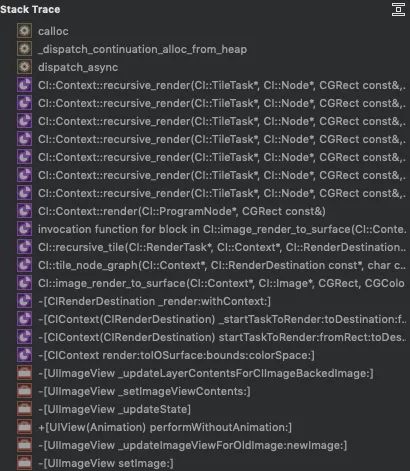

Crash log:

EXC_BAD_ACCESS KERN_INVALID_ADDRESS 0x0000000000000000

Crashed: com.apple.main-thread

0 CoreImage 0x1c18128c0 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 2388

1 CoreImage 0x1c18128c0 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 2388

2 CoreImage 0x1c18122e8 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 892

3 CoreImage 0x1c18122e8 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 892

4 CoreImage 0x1c18122e8 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 892

5 CoreImage 0x1c18122e8 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 892

6 CoreImage 0x1c18122e8 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 892

7 CoreImage 0x1c18122e8 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 892

8 CoreImage 0x1c18122e8 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 892

9 CoreImage 0x1c18122e8 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 892

10 CoreImage 0x1c18122e8 CI::Context::recursive_render(CI::TileTask*, CI::Node*, CGRect const&, CI::Node*, bool) + 892

11 CoreImage 0x1c1812f04 CI::Context::render(CI::ProgramNode*, CGRect const&) + 116

12 CoreImage 0x1c182ca3c invocation function for block in CI::image_render_to_surface(CI::Context*, CI::Image*, CGRect, CGColorSpace*, __IOSurface*, CGPoint, CI::PixelFormat, CI::RenderDestination const*) + 40

13 CoreImage 0x1c18300bc CI::recursive_tile(CI::RenderTask*, CI::Context*, CI::RenderDestination const*, char const*, CI::Node*, CGRect const&, CI::PixelFormat, CI::swizzle_info const&, CI::TileTask* (CI::ProgramNode*, CGRect) block_pointer) + 608

14 CoreImage 0x1c182b740 CI::tile_node_graph(CI::Context*, CI::RenderDestination const*, char const*, CI::Node*, CGRect const&, CI::PixelFormat, CI::swizzle_info const&, CI::TileTask* (CI::ProgramNode*, CGRect) block_pointer) + 396

15 CoreImage 0x1c182c308 CI::image_render_to_surface(CI::Context*, CI::Image*, CGRect, CGColorSpace*, __IOSurface*, CGPoint, CI::PixelFormat, CI::RenderDestination const*) + 1340

16 CoreImage 0x1c18781c0 -[CIContext(CIRenderDestination) _startTaskToRender:toDestination:forPrepareRender:error:] + 2488

17 CoreImage 0x1c18777ec -[CIContext(CIRenderDestination) startTaskToRender:fromRect:toDestination:atPoint:error:] + 140

18 CoreImage 0x1c17c9e4c -[CIContext render:toIOSurface:bounds:colorSpace:] + 268

19 UIKitCore 0x1e8f41244 -[UIImageView _updateLayerContentsForCIImageBackedImage:] + 880

20 UIKitCore 0x1e8f38968 -[UIImageView _setImageViewContents:] + 872

21 UIKitCore 0x1e8f39fd8 -[UIImageView _updateState] + 664

22 UIKitCore 0x1e8f79650 +[UIView(Animation) performWithoutAnimation:] + 104

23 UIKitCore 0x1e8f3ff28 -[UIImageView _updateImageViewForOldImage:newImage:] + 504

24 UIKitCore 0x1e8f3b0ac -[UIImageView setImage:] + 340

25 App 0x100482434 MyImageView.updateImageView() (<compiler-generated>)

26 App 0x10048343c closure #1 in MyImageView.handleNotification(_:) + 281 (MyImageView.swift:281)

27 App 0x1004f1870 thunk for @escaping @callee_guaranteed () -> () (<compiler-generated>)

28 libdispatch.dylib 0x1bbbf4a38 _dispatch_call_block_and_release + 24

29 libdispatch.dylib 0x1bbbf57d4 _dispatch_client_callout + 16

30 libdispatch.dylib 0x1bbbd59e4 _dispatch_main_queue_callback_4CF$VARIANT$armv81 + 1008

31 CoreFoundation 0x1bc146c1c __CFRUNLOOP_IS_SERVICING_THE_MAIN_DISPATCH_QUEUE__ + 12

32 CoreFoundation 0x1bc141b54 __CFRunLoopRun + 1924

33 CoreFoundation 0x1bc1410b0 CFRunLoopRunSpecific + 436

34 GraphicsServices 0x1be34179c GSEventRunModal + 104

35 UIKitCore 0x1e8aef978 UIApplicationMain + 212

36 App 0x1002a3544 main + 18 (AppDelegate.swift:18)

37 libdyld.dylib 0x1bbc068e0 start + 4

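What could be causing the crash?

Update

This may be related to a CIImage memory leak. While profiling, I saw many CIImage memory leaks with the same stack trace as in the crash log.

It may also be related to "Core Image and memory leak, swift 3.0". I found that the images were stored in an in-memory array, and that onReceiveMemoryWarning was not handled properly and did not clear that array. So in some cases the app would crash because of memory pressure. Maybe this fixes the issue; I will post an update here.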
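The memory-warning handling described above could look roughly like the following. This is only a minimal sketch: `BlurredImageStore` and its members are hypothetical names that do not appear in the app, and it assumes Swift 4.2 or later for `UIApplication.didReceiveMemoryWarningNotification`.

```swift
import UIKit

/// Hypothetical in-memory store; the class and member names are illustrative only.
final class BlurredImageStore {
    private(set) var images: [UIImage] = []
    private var observer: NSObjectProtocol?

    init() {
        // Drop every cached image as soon as the system reports memory pressure.
        observer = NotificationCenter.default.addObserver(
            forName: UIApplication.didReceiveMemoryWarningNotification,
            object: nil,
            queue: .main
        ) { [weak self] _ in
            self?.images.removeAll()
        }
    }

    deinit {
        // Block-based observers must be removed explicitly.
        if let observer = observer {
            NotificationCenter.default.removeObserver(observer)
        }
    }

    func add(_ image: UIImage) {
        images.append(image)
    }
}
```

An `NSCache` would be another option, since it can evict its contents on its own under memory pressure, but whether that fits depends on how the array is used elsewhere in the app.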

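Update 2

It seems I am able to reproduce the crash. Testing on an iPhone Xs Max with a 5MB JPEG image:

- When the image is displayed full screen without blur, the app's memory usage is 160MB.

- When the image is displayed blurred at 1/4 of the screen size, memory usage is 380MB.

- When the image is displayed blurred at full screen, memory usage jumps to >1.6GB and the app crashes most of the time with:

Message from debugger: Terminated due to memory issue

I am surprised that a 5MB image can cause >1.6GB of memory usage for a "simple" blur. Do I need to release anything manually, such as the CIContext or CIImage, or is this normal, so that I need to manually scale the image down to ~kB before blurring?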
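Part of the surprise may come from the fact that the JPEG file size says little about the decoded size. The back-of-the-envelope sketch below estimates one uncompressed buffer. The pixel dimensions (4032 x 3024) are an assumption, since the post only gives the file size, and the 8-bytes-per-pixel case assumes intermediates held at 16-bit float per channel, which may or may not match what Core Image does on a given device.

```swift
import Foundation

/// Bytes needed for one uncompressed bitmap of the given pixel size.
func bitmapBytes(width: Int, height: Int, bytesPerPixel: Int) -> Int {
    return width * height * bytesPerPixel
}

// Assumed dimensions of a 12-megapixel photo; the real image may differ.
let pixelWidth = 4032
let pixelHeight = 3024

// 4 bytes per pixel: RGBA with 8 bits per channel.
let rgba8Bytes = bitmapBytes(width: pixelWidth, height: pixelHeight, bytesPerPixel: 4)
// 8 bytes per pixel: RGBA with 16-bit float per channel (assumed intermediate format).
let rgbaHalfBytes = bitmapBytes(width: pixelWidth, height: pixelHeight, bytesPerPixel: 8)

let bytesPerMiB = 1024.0 * 1024.0
print(String(format: "RGBA8 buffer:  %.1f MiB", Double(rgba8Bytes) / bytesPerMiB))
print(String(format: "RGBAh buffer:  %.1f MiB", Double(rgbaHalfBytes) / bytesPerMiB))
```

Under these assumptions a single buffer is already an order of magnitude larger than the file, and a multi-pass filter may need several such buffers at once. This does not by itself account for 1.6GB, but it suggests why downsampling before blurring has such a large effect.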

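Update 3

Adding multiple image views that display the blurred image increases memory usage by a few hundred MB each time, until the views are removed, even though only one image is visible at a time. Perhaps CIFilter should not be used for displaying images, because it occupies more memory than the rendered image itself.

So I changed the blur function to render the image in a context, and the effect is significant: memory rises only briefly while the image is rendered and then falls back to the level before blurring.

Here is the updated method: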

func blurImage(_ radius: CGFloat) -> UIImage? {
    guard let ciImage = CIImage(image: self) else {
        return nil
    }

    let clampedImage = ciImage.clampedToExtent()

    let blurFilter = CIFilter(name: "CIGaussianBlur", parameters: [
        kCIInputImageKey: clampedImage,
        kCIInputRadiusKey: radius])

    var filteredImage = blurFilter?.outputImage

    filteredImage = filteredImage?.cropped(to: ciImage.extent)

    guard let blurredCiImage = filteredImage else {
        return nil
    }

    // Draw into a bitmap context so the returned UIImage holds rendered pixels
    // instead of a lazy CIImage recipe.
    let rect = CGRect(origin: CGPoint.zero, size: size)

    UIGraphicsBeginImageContext(rect.size)

    UIImage(ciImage: blurredCiImage).draw(in: rect)

    let blurredImage = UIGraphicsGetImageFromCurrentImageContext()

    UIGraphicsEndImageContext()

    return blurredImage
}
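An alternative way to render the blurred result into a bitmap is to ask a `CIContext` for a `CGImage` directly. The following is a minimal sketch, not the method used in the app: `BlurRenderer` and `blurredBitmap(radius:)` are hypothetical names, and reusing one shared context is an assumption made here because creating a `CIContext` is comparatively expensive.

```swift
import UIKit
import CoreImage

/// Hypothetical holder for a single reusable Core Image context.
enum BlurRenderer {
    static let sharedContext = CIContext()
}

extension UIImage {
    /// Returns a bitmap-backed blurred copy, or nil if any step fails.
    func blurredBitmap(radius: CGFloat) -> UIImage? {
        guard let input = CIImage(image: self) else { return nil }

        // Clamp so the blur does not fade to transparent at the edges.
        let filter = CIFilter(name: "CIGaussianBlur", parameters: [
            kCIInputImageKey: input.clampedToExtent(),
            kCIInputRadiusKey: radius
        ])

        // createCGImage performs the actual render; everything before it is only a recipe.
        guard let output = filter?.outputImage?.cropped(to: input.extent),
              let cgImage = BlurRenderer.sharedContext.createCGImage(output, from: input.extent)
        else { return nil }

        return UIImage(cgImage: cgImage, scale: scale, orientation: imageOrientation)
    }
}
```

Because the returned image is backed by a `CGImage`, assigning it to a `UIImageView` should not trigger a Core Image render on the main thread, which is where the first crash log points.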

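Also, thanks to @matt and @FrankSchlegel, who suggested in the comments that the high memory consumption could be mitigated by downsampling the image before blurring it. I have now done that as well. Surprisingly, even a 300x300px image causes memory usage to spike by about 500MB, which is a lot considering that the limit at which the app is terminated is 2GB. I will post an update once the app update is released.

Update 4

I added this code to downsample the image to a maximum of 300x300px before blurring: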

func resizeImageWithAspectFit(_ boundSize: CGSize) -> UIImage {
    let ratio = self.size.width / self.size.height
    let maxRatio = boundSize.width / boundSize.height

    var scaleFactor: CGFloat

    if ratio > maxRatio {
        scaleFactor = boundSize.width / self.size.width
    } else {
        scaleFactor = boundSize.height / self.size.height
    }

    let newWidth = self.size.width * scaleFactor
    let newHeight = self.size.height * scaleFactor

    let rect = CGRect(x: 0.0, y: 0.0, width: newWidth, height: newHeight)

    UIGraphicsBeginImageContext(rect.size)

    self.draw(in: rect)

    let newImage = UIGraphicsGetImageFromCurrentImageContext()

    UIGraphicsEndImageContext()

    return newImage!
}
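For comparison, the same aspect-fit downsampling can be sketched with `UIGraphicsImageRenderer` (available since iOS 10), which avoids the force unwrap. The names `aspectFitSize(for:within:)` and `downsampled(toFit:)` are hypothetical, and unlike the method above this sketch never scales an image up, which is an assumption about the intended behavior.

```swift
import UIKit

/// Largest size with the same aspect ratio as `size` that fits inside `bound`,
/// never larger than `size` itself.
func aspectFitSize(for size: CGSize, within bound: CGSize) -> CGSize {
    guard size.width > 0, size.height > 0 else { return .zero }
    let scale = min(bound.width / size.width, bound.height / size.height, 1)
    return CGSize(width: size.width * scale, height: size.height * scale)
}

extension UIImage {
    /// Returns a copy scaled down to fit `bound`, or `self` if the target size is empty.
    func downsampled(toFit bound: CGSize) -> UIImage {
        let target = aspectFitSize(for: size, within: bound)
        guard target.width > 0, target.height > 0 else { return self }

        // Scale 1 matches UIGraphicsBeginImageContext, which also renders at 1x.
        let format = UIGraphicsImageRendererFormat()
        format.scale = 1

        return UIGraphicsImageRenderer(size: target, format: format).image { _ in
            draw(in: CGRect(origin: .zero, size: target))
        }
    }
}
```

A call such as `image.downsampled(toFit: CGSize(width: 300, height: 300))` would then take the place of the resize step before blurring.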

The crashes now look different, but I am not sure whether they occur during downsampling or while drawing the blurred image, since both use a UIGraphicsImageContext.

EXC_BAD_ACCESS KERN_INVALID_ADDRESS 0x0000000000000010

Crashed: com.apple.root.user-initiated-qos

0 libobjc.A.dylib 0x1ce457530 objc_msgSend + 16

1 CoreImage 0x1d48773dc -[CIContext initWithOptions:] + 96

2 CoreImage 0x1d4877358 +[CIContext contextWithOptions:] + 52

3 UIKitCore 0x1fb7ea794 -[UIImage drawInRect:blendMode:alpha:] + 984

4 MyApp 0x1005bb478 UIImage.blurImage(_:) (<compiler-generated>)

5 MyApp 0x100449f58 closure #1 in MyImage.getBlurredImage() + 153 (UIImage+Extension.swift:153)

6 MyApp 0x1005cda48 thunk for @escaping @callee_guaranteed () -> () (<compiler-generated>)

7 libdispatch.dylib 0x1ceca4a38 _dispatch_call_block_and_release + 24

8 libdispatch.dylib 0x1ceca57d4 _dispatch_client_callout + 16

9 libdispatch.dylib 0x1cec88afc _dispatch_root_queue_drain + 636

10 libdispatch.dylib 0x1cec89248 _dispatch_worker_thread2 + 116

11 libsystem_pthread.dylib 0x1cee851b4 _pthread_wqthread + 464

12 libsystem_pthread.dylib 0x1cee87cd4 start_wqthread + 4

Here is the threading used to resize and blur the image (blurImage() is the method described in Update 3):

class MyImage {

    var originalImage: UIImage?

    var blurredImage: UIImage?

    // Called on the main thread
    func getBlurredImage() -> UIImage {
        DispatchQueue.global(qos: DispatchQoS.QoSClass.userInitiated).async {
            // Create resized image
            let smallImage = self.originalImage.resizeImageWithAspectFitToSizeLimit(CGSize(width: 1000, height: 1000))

            // Create blurred image
            let blurredImage = smallImage.blurImage()

            DispatchQueue.main.async {
                self.blurredImage = blurredImage

                // Notify observers to display `blurredImage` in UIImageView on the main thread
                NotificationCenter.default.post(name: BlurredImageIsReady, object: nil, userInfo: nil)
            }
        }
    }

}

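The code for the CIImage and for updating the view? - Frank Rupprecht

The outputImage of a CIFilter does not apply the filter. Think of a CIImage as a "recipe" for creating an image; it is only evaluated when the image content is actually needed (in this case, when the image view needs real pixels to display). So a CIImage is inherently lazily evaluated. - Frank Rupprecht

UIImage(ciImage: finalImage) does not do anything; it uses practically zero memory, because all you have done is create the instructions for making the image, and you have not actually made the image yet. So the problem lies in the creation and handling of the image. - matt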
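The laziness described in these comments can be observed with a small sketch like the one below. The 64x64 solid-color image is an arbitrary stand-in chosen for illustration, not something from the app.

```swift
import UIKit
import CoreImage

// A tiny stand-in recipe: an infinite red image cropped to 64 x 64.
let recipe = CIImage(color: CIColor(red: 1, green: 0, blue: 0))
    .cropped(to: CGRect(x: 0, y: 0, width: 64, height: 64))

// Wrapping the recipe renders nothing; the UIImage only references the CIImage.
let lazyImage = UIImage(ciImage: recipe)
print("has CIImage:", lazyImage.ciImage != nil)   // backed by the recipe
print("has CGImage:", lazyImage.cgImage != nil)   // no rendered pixels yet

// Rendering happens only when a context is asked for actual pixels.
let context = CIContext()
if let cgImage = context.createCGImage(recipe, from: recipe.extent) {
    let renderedImage = UIImage(cgImage: cgImage)
    print("rendered size:", renderedImage.size)
}
```

When a `UIImage` of the first kind is assigned to a `UIImageView`, the view has to perform that render itself, which is consistent with the `-[UIImageView _updateLayerContentsForCIImageBackedImage:]` frame in the first crash log.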