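I have the following matrix multiplication code, implemented with CUDA 3.2 and VS 2008. I am running it on Windows Server 2008 R2 Enterprise with an Nvidia GTX 480. The code below works fine for values of "Width" (the matrix width) up to about 2500.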

```cuda
int size = Width*Width*sizeof(float);
float* Md, *Nd, *Pd;
cudaError_t err = cudaSuccess;

//Allocate Device Memory for M, N and P
err = cudaMalloc((void**)&Md, size);
err = cudaMalloc((void**)&Nd, size);
err = cudaMalloc((void**)&Pd, size);

//Copy Matrix from Host Memory to Device Memory
err = cudaMemcpy(Md, M, size, cudaMemcpyHostToDevice);
err = cudaMemcpy(Nd, N, size, cudaMemcpyHostToDevice);

//Setup the execution configuration
dim3 dimBlock(TileWidth, TileWidth, 1);
dim3 dimGrid(ceil((float)(Width)/TileWidth), ceil((float)(Width)/TileWidth), 1);

MatrixMultiplicationMultiBlock_Kernel<<<dimGrid, dimBlock>>>(Md, Nd, Pd, Width);

err = cudaMemcpy(P, Pd, size, cudaMemcpyDeviceToHost);

//Free Device Memory
cudaFree(Md);
cudaFree(Nd);
cudaFree(Pd);
```

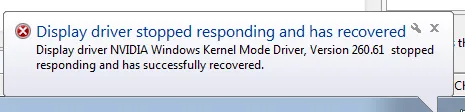

当我将“Width”设置为3000或更大时,黑屏后出现以下错误:

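I looked online and saw that some people hit this issue because the watchdog kills the kernel after it hangs for more than 5 seconds. I tried editing "TdrDelay" in the registry. That delayed the black screen, but the same error still appeared, so I concluded that this was not my problem.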
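Whether the watchdog applies to a given GPU at all can be queried from the runtime. The following is only a minimal sketch: the helper name `WatchdogEnabled` is hypothetical and the choice of device 0 in the usage note is an assumption, while `cudaGetDeviceProperties` and the `kernelExecTimeoutEnabled` field come from the CUDA runtime API.

```cuda
#include <cuda_runtime.h>

// Hypothetical helper (not part of the original post): reports whether a
// run-time limit (watchdog) applies to kernels running on `device`.
// Returns the CUDA status; on success, *enabled holds the answer.
cudaError_t WatchdogEnabled(int device, bool* enabled)
{
    cudaDeviceProp prop;
    cudaError_t err = cudaGetDeviceProperties(&prop, device);
    if (err == cudaSuccess)
    {
        // Non-zero when the driver enforces a kernel execution timeout.
        *enabled = (prop.kernelExecTimeoutEnabled != 0);
    }
    return err;
}
```

Calling `WatchdogEnabled(0, &flag)` once at startup and printing `flag` would show whether the timeout is in effect for the card that drives the display.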

I debugged my code and found that the following line is the culprit:

```cuda
err = cudaMemcpy(P, Pd, size, cudaMemcpyDeviceToHost);
```

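This is the call I use to copy the result set back from the device after the matrix multiplication kernel has been launched. Everything up to this point seems to work fine. I believe I am allocating memory correctly, and I cannot figure out why this is happening. I thought that maybe my card did not have enough memory, but in that case shouldn't cudaMalloc have returned an error? (I confirmed while debugging that it did not.)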
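One detail that may explain why the copy looks like the culprit: kernel launches are asynchronous, so an error raised while the kernel runs is usually reported by the next call that synchronizes with the device, which here is `cudaMemcpy`. As for memory, a 3000 x 3000 float matrix takes 3000 * 3000 * 4 = 36,000,000 bytes, so the three buffers need about 108 MB in total, and the successful `cudaMalloc` calls are plausible. The sketch below separates launch errors from execution errors. `DummyKernel` and `LaunchAndCheck` are hypothetical names used for illustration only. `cudaDeviceSynchronize` was introduced in CUDA 4.0; with the CUDA 3.2 toolkit mentioned in the post, `cudaThreadSynchronize` plays the same role.

```cuda
#include <cuda_runtime.h>

// Placeholder kernel standing in for the real matrix multiplication kernel.
__global__ void DummyKernel(float* data, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n)
    {
        data[i] = 2.0f * data[i];
    }
}

// Hypothetical helper: launches the kernel and returns the first error seen.
cudaError_t LaunchAndCheck(float* d_data, int n)
{
    const int threads = 256;
    const int blocks = (n + threads - 1) / threads;

    DummyKernel<<<blocks, threads>>>(d_data, n);

    // Reports problems with the launch itself (e.g. an invalid configuration).
    cudaError_t err = cudaGetLastError();
    if (err != cudaSuccess)
    {
        return err;
    }

    // Blocks until the kernel has finished and reports errors raised while
    // it was running (e.g. a timeout or an invalid memory access).
    return cudaDeviceSynchronize();
}
```

If the value returned here is already an error, the later `cudaMemcpy` is only the messenger and the problem lies in the kernel.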

Any ideas or help would be greatly appreciated! Thanks a lot, everyone!

Kernel code:

```cuda
//Matrix Multiplication Kernel - Multi-Block Implementation
__global__ void MatrixMultiplicationMultiBlock_Kernel (float* Md, float* Nd, float* Pd, int Width)
{
    int TileWidth = blockDim.x;

    //Get row and column from block and thread ids
    int Row = (TileWidth*blockIdx.y) + threadIdx.y;
    int Column = (TileWidth*blockIdx.x) + threadIdx.x;

    //Pvalue store the Pd element that is computed by the thread
    float Pvalue = 0;

    for (int i = 0; i < Width; ++i)
    {
        float Mdelement = Md[Row * Width + i];
        float Ndelement = Nd[i * Width + Column];
        Pvalue += Mdelement * Ndelement;
    }

    //Write the matrix to device memory each thread writes one element
    Pd[Row * Width + Column] = Pvalue;
}
```
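One thing worth ruling out in the kernel above: the grid is rounded up with `ceil`, so whenever `Width` is not a multiple of `TileWidth`, some threads get a `Row` or `Column` that is not smaller than `Width`. For example, assuming `TileWidth` is 16 (the post does not state its value), ceil(3000/16) = 188 blocks cover 3008 rows, so 8 rows and 8 columns of threads fall outside the matrix and would read and write past the end of the buffers. Whether this is what triggers the error here is an assumption. The sketch below adds a range guard, with `MatrixMultiplicationGuarded_Kernel` and `BlocksPerDim` as hypothetical names.

```cuda
#include <cuda_runtime.h>

// Sketch of the kernel above with a range guard added; the guard is an
// addition for illustration and is not part of the original post.
__global__ void MatrixMultiplicationGuarded_Kernel(const float* Md, const float* Nd,
                                                   float* Pd, int Width)
{
    int TileWidth = blockDim.x;  // assumes square thread blocks, as in the post

    int Row = (TileWidth * blockIdx.y) + threadIdx.y;
    int Column = (TileWidth * blockIdx.x) + threadIdx.x;

    // Threads in the rounded-up part of the grid have nothing to compute.
    if (Row >= Width || Column >= Width)
    {
        return;
    }

    float Pvalue = 0.0f;
    for (int i = 0; i < Width; ++i)
    {
        Pvalue += Md[Row * Width + i] * Nd[i * Width + Column];
    }

    Pd[Row * Width + Column] = Pvalue;
}

// Hypothetical host helper: blocks needed per grid dimension, rounded up
// with integer arithmetic instead of ceil on floats.
inline int BlocksPerDim(int Width, int TileWidth)
{
    return (Width + TileWidth - 1) / TileWidth;
}
```

The launch configuration stays the same as before; only the threads that fall outside the matrix now exit early instead of touching memory.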

I also have another function that uses shared memory, and it gives the same error:

Call:

```cuda
MatrixMultiplicationSharedMemory_Kernel<<<dimGrid, dimBlock, sizeof(float)*TileWidth*TileWidth*2>>>(Md, Nd, Pd, Width);
```

Kernel code:

```cuda
//Matrix Multiplication Kernel - Shared Memory Implementation
__global__ void MatrixMultiplicationSharedMemory_Kernel (float* Md, float* Nd, float* Pd, int Width)
{
    int TileWidth = blockDim.x;

    //Initialize shared memory
    extern __shared__ float sharedArrays[];
    float* Mds = (float*) &sharedArrays;
    float* Nds = (float*) &Mds[TileWidth*TileWidth];

    int tx = threadIdx.x;
    int ty = threadIdx.y;

    //Get row and column from block and thread ids
    int Row = (TileWidth*blockIdx.y) + ty;
    int Column = (TileWidth*blockIdx.x) + tx;

    float Pvalue = 0;

    //For each tile, load the element into shared memory
    for( int i = 0; i < ceil((float)Width/TileWidth); ++i)
    {
        Mds[ty*TileWidth+tx] = Md[Row*Width + (i*TileWidth + tx)];
        Nds[ty*TileWidth+tx] = Nd[(ty + (i * TileWidth))*Width + Column];

        __syncthreads();

        for( int j = 0; j < TileWidth; ++j)
        {
            Pvalue += Mds[ty*TileWidth+j] * Nds[j*TileWidth+tx];
        }

        __syncthreads();
    }

    //Write the matrix to device memory each thread writes one element
    Pd[Row * Width + Column] = Pvalue;
}
```
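In the tiled kernel, threads cannot simply return early when they fall outside the matrix, because every thread of a block has to reach `__syncthreads()`. A common way to handle a `Width` that is not a multiple of `TileWidth` is to load zeros for out-of-range elements and to guard only the final store. The sketch below illustrates that idea under the same assumptions as the post (square blocks, `2 * TileWidth * TileWidth` floats of dynamic shared memory). The kernel name is hypothetical, and whether out-of-range accesses are the cause of the reported error is an assumption.

```cuda
#include <cuda_runtime.h>

// Sketch of a tiled kernel that tolerates Width not being a multiple of
// TileWidth. Not the original author's code.
__global__ void MatrixMultiplicationSharedGuarded_Kernel(const float* Md, const float* Nd,
                                                         float* Pd, int Width)
{
    int TileWidth = blockDim.x;  // assumes square thread blocks

    // One dynamic shared array split into two tiles.
    extern __shared__ float sharedArrays[];
    float* Mds = sharedArrays;
    float* Nds = sharedArrays + TileWidth * TileWidth;

    int tx = threadIdx.x;
    int ty = threadIdx.y;
    int Row = (TileWidth * blockIdx.y) + ty;
    int Column = (TileWidth * blockIdx.x) + tx;

    int numTiles = (Width + TileWidth - 1) / TileWidth;
    float Pvalue = 0.0f;

    for (int i = 0; i < numTiles; ++i)
    {
        int mCol = i * TileWidth + tx;  // column of Md this thread loads
        int nRow = i * TileWidth + ty;  // row of Nd this thread loads

        // Out-of-range elements are replaced by zero, which does not
        // change the dot product.
        Mds[ty * TileWidth + tx] =
            (Row < Width && mCol < Width) ? Md[Row * Width + mCol] : 0.0f;
        Nds[ty * TileWidth + tx] =
            (nRow < Width && Column < Width) ? Nd[nRow * Width + Column] : 0.0f;

        __syncthreads();

        for (int j = 0; j < TileWidth; ++j)
        {
            Pvalue += Mds[ty * TileWidth + j] * Nds[j * TileWidth + tx];
        }

        __syncthreads();
    }

    // Only threads that map to a real matrix element write a result.
    if (Row < Width && Column < Width)
    {
        Pd[Row * Width + Column] = Pvalue;
    }
}
```

The launch is unchanged from the call shown earlier: the third launch parameter still has to provide room for both tiles.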

cuda-memcheck, sorry about that. It should detect out-of-bounds accesses immediately. - Tom