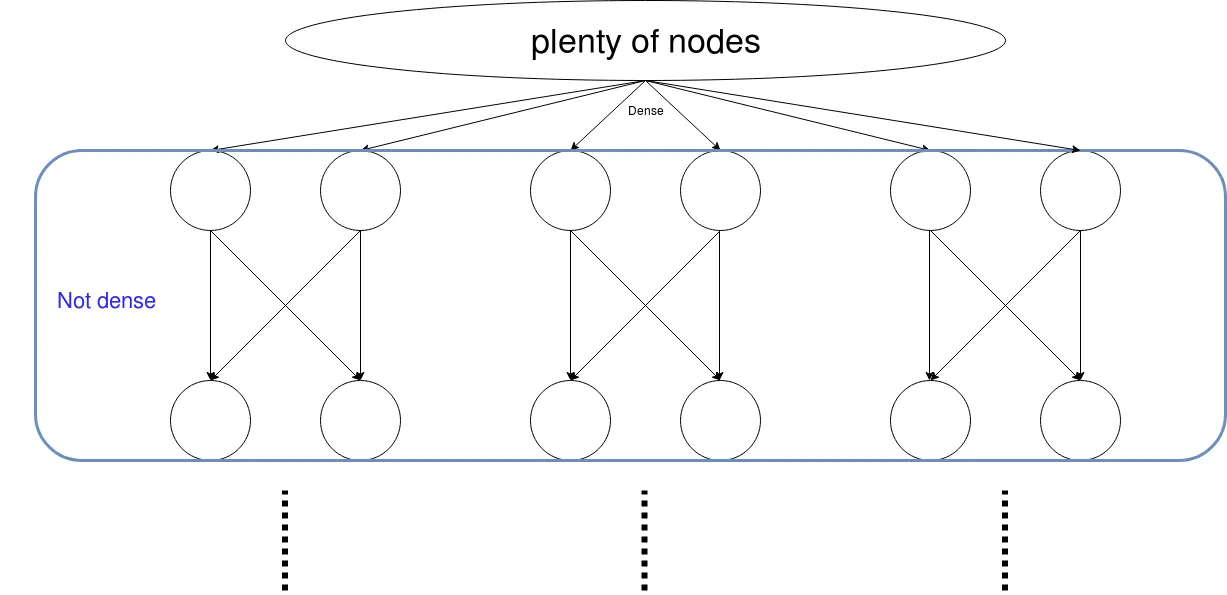

TensorFlow custom layer: creating a sparse matrix with trainable parameters

6

- Marko Karbevski

5

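So what exactly do you want? Do you want to know how to create a sparse matrix in TensorFlow, or have you run into a specific problem? - eugen

@eugen Yes, I want to create a sparse trainable matrix that at least follows the pattern described above. - Marko Karbevski

OK, I have posted an answer, please take a look. - eugen

I am sure there are better ways, but you could create a dense weight matrix and multiply it by your block-diagonal matrix before the activation function. That way, the gradients of all weights associated with a 0 entry in the block-diagonal matrix are zeroed out, and those weights never change. - foglerit

@foglerit That would make things rather slow. TF would still consider all of the variables during optimization, right? - Marko Karbevski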

2 Answers

3

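I have written a layer like this:

https://github.com/ArnovanHilten/GenNet/blob/master/GenNet_utils/LocallyDirectedConnected_tf2.py

It takes a sparse matrix as input and lets you decide how the layers are connected to each other. The layer uses sparse tensors and matrix multiplication.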
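To illustrate the general idea of a layer whose weights live only at fixed sparse positions, here is a minimal sketch. It is not the code from the linked repository: the class name `SparseLinear`, its arguments, and the initializer are hypothetical, and TensorFlow 2.x with eager execution is assumed. The trainable values are held in an ordinary dense 1-D variable, and a `tf.SparseTensor` is rebuilt from them on each call.

```python
import numpy as np
import tensorflow as tf


class SparseLinear(tf.keras.layers.Layer):
    """Hypothetical layer: y = x @ W, where W is nonzero only at fixed indices."""

    def __init__(self, indices, dense_shape, **kwargs):
        super().__init__(**kwargs)
        # Sort row-major, the canonical ordering for tf.SparseTensor.
        self.indices = np.array(sorted(map(tuple, indices)), dtype=np.int64)
        self.dense_shape = tuple(dense_shape)  # (in_features, out_features)

    def build(self, input_shape):
        # One trainable scalar per allowed connection.
        self.w = self.add_weight(
            name="w",
            shape=(len(self.indices),),
            initializer="random_normal",
            trainable=True,
        )

    def call(self, inputs):
        values = tf.convert_to_tensor(self.w)
        w_sparse = tf.SparseTensor(self.indices, values, self.dense_shape)
        # sparse_dense_matmul computes sparse @ dense, so evaluate
        # W^T @ x^T and transpose the result to obtain x @ W.
        out_t = tf.sparse.sparse_dense_matmul(
            w_sparse, inputs, adjoint_a=True, adjoint_b=True
        )
        return tf.transpose(out_t)


# Usage: 3 inputs, 2 outputs, only 3 of the 6 possible connections exist.
layer = SparseLinear(indices=[[0, 0], [1, 1], [2, 1]], dense_shape=(3, 2))
x = tf.ones((4, 3))
with tf.GradientTape() as tape:
    y = layer(x)
    loss = tf.reduce_sum(y)
grads = tape.gradient(loss, layer.trainable_variables)
```

In this sketch only the three stored values are variables, so the optimizer never sees the missing connections.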

- Arno

2

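Edit

So the comment asks: is this a trainable object?

Answer: No. You currently cannot use a sparse matrix and make it trainable. Instead, you can use a mask matrix (see the end).

However, if you need to use a sparse matrix, simply use tf.sparse.sparse_dense_matmul() or tf.sparse_tensor_to_dense() wherever your sparse matrix interacts with a dense one. I took a simple XOR example from here and replaced the dense matrix with a sparse one: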

#Declaring necessary modules

import tensorflow as tf

import numpy as np

"""

A simple numpy implementation of a XOR gate to understand the backpropagation

algorithm

"""

x = tf.placeholder(tf.float32,shape = [4,2],name = "x")

#declaring a place holder for input x

y = tf.placeholder(tf.float32,shape = [4,1],name = "y")

#declaring a place holder for desired output y

m = np.shape(x)[0]#number of training examples

n = np.shape(x)[1]#number of features

hidden_s = 2 #number of nodes in the hidden layer

l_r = 1#learning rate initialization

theta1 = tf.SparseTensor(indices=[[0, 0],[0, 1], [1, 1]], values=[0.1, 0.2, 0.1], dense_shape=[3, 2])

#theta1 = tf.cast(tf.Variable(tf.random_normal([3,hidden_s]),name = "theta1"),tf.float64)

theta2 = tf.cast(tf.Variable(tf.random_normal([hidden_s+1,1]),name = "theta2"),tf.float32)

#conducting forward propagation

a1 = tf.concat([np.c_[np.ones(x.shape[0])],x],1)

#the weights of the first layer are multiplied by the input of the first layer

#z1 = tf.sparse_tensor_dense_matmul(theta1, a1)

z1 = tf.matmul(a1,tf.sparse_tensor_to_dense(theta1))

#the input of the second layer is the output of the first layer, passed through the activation function, with a bias column prepended

a2 = tf.concat([np.c_[np.ones(x.shape[0])],tf.sigmoid(z1)],1)

#the input of the second layer is multiplied by the weights

z3 = tf.matmul(a2,theta2)

#the output is passed through the activation function to obtain the final probability

h3 = tf.sigmoid(z3)

cost_func = -tf.reduce_sum(y*tf.log(h3)+(1-y)*tf.log(1-h3),axis = 1)

#built in tensorflow optimizer that conducts gradient descent using the specified learning rate

optimiser = tf.train.GradientDescentOptimizer(learning_rate = l_r).minimize(cost_func)

#setting required X and Y values to perform XOR operation

X = [[0,0],[0,1],[1,0],[1,1]]

Y = [[0],[1],[1],[0]]

#initializing all variables, creating a session and running a tensorflow session

init = tf.global_variables_initializer()

sess = tf.Session()

sess.run(init)

#running gradient descent for each iteration

for i in range(200):

    sess.run(optimiser, feed_dict = {x:X,y:Y})#setting place holder values using feed_dict

    if i%100==0:

        print("Epoch:",i)

        print(sess.run(theta1))

The output is:

Epoch: 0

SparseTensorValue(indices=array([[0, 0],

[0, 1],

[1, 1]]), values=array([0.1, 0.2, 0.1], dtype=float32), dense_shape=array([3, 2]))

Epoch: 100

SparseTensorValue(indices=array([[0, 0],

[0, 1],

[1, 1]]), values=array([0.1, 0.2, 0.1], dtype=float32), dense_shape=array([3, 2]))

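So the only way is to use a mask matrix. You can apply it either by multiplication or with tf.where.

1) Multiplication: create a mask matrix of the desired shape and multiply it with the weight matrix: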

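mask = tf.Variable([[1.,0.,0.],[0.,1.,0.],[0.,0.,1.]],name ='mask', trainable=False)

weight = tf.cast(tf.Variable(tf.random_normal([3,3])),tf.float32)

#element-wise product (not tf.matmul): entries where mask is 0 are zeroed and receive zero gradient

desired_tensor = tf.multiply(weight, mask)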

2) tf.where

mask = tf.Variable([[1,0,0],[0,1,0],[0,0,1]],name ='mask', trainable=False)

weight = tf.cast(tf.Variable(tf.random_normal([3,3])),tf.float32)

#keep the weight where mask is 1 and use 0 everywhere else

desired_tensor = tf.where(mask > 0, weight, tf.zeros_like(weight))
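To check that masking really freezes the excluded entries, the following sketch computes gradients through an element-wise masked weight. It assumes TensorFlow 2.x with eager execution (the snippets above use the TF 1.x API), and the 4x4 block-diagonal pattern, the input `x`, and the loss are made up for illustration.

```python
import tensorflow as tf

# Fixed 0/1 pattern (an assumed block-diagonal example); it is never trained.
mask = tf.constant([[1., 1., 0., 0.],
                    [1., 1., 0., 0.],
                    [0., 0., 1., 1.],
                    [0., 0., 1., 1.]])
weight = tf.Variable(tf.random.normal([4, 4]))
x = tf.random.normal([8, 4])

with tf.GradientTape() as tape:
    masked_weight = weight * mask  # element-wise product
    loss = tf.reduce_sum(tf.square(tf.matmul(x, masked_weight)))

grad = tape.gradient(loss, weight)

# Largest absolute gradient among the masked-out positions.
max_masked_grad = float(tf.reduce_max(tf.abs(grad * (1.0 - mask))))
print(max_masked_grad)
```

Note that the weight matrix is still stored densely here, so, as raised in the comments, the optimizer still iterates over every entry even though the masked ones never change.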

Hope this helps.

You can do this with a sparse tensor, like so:

SparseTensor(indices=[[0, 0], [1, 2]], values=[1, 2], dense_shape=[3, 4])

This represents the dense tensor:

[[1, 0, 0, 0]

[0, 0, 2, 0]

[0, 0, 0, 0]]
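As a quick check of the dense form shown above, this short sketch converts the same sparse tensor with `tf.sparse.to_dense`. It assumes TensorFlow 2.x with eager execution; the variable names are only for illustration.

```python
import tensorflow as tf

# Same indices, values, and shape as in the example above.
st = tf.SparseTensor(indices=[[0, 0], [1, 2]], values=[1, 2], dense_shape=[3, 4])

# Materialize the sparse tensor as an ordinary dense tensor.
dense = tf.sparse.to_dense(st)
print(dense.numpy())
```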

You can find more documentation on sparse tensors here:

https://www.tensorflow.org/api_docs/python/tf/sparse/SparseTensor

Hope this helps!

- eugen

1

Is this a trainable object? - Marko Karbevski

Content provided by Stack Overflow.
