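I am using the GPU version of Keras to do transfer learning on a pre-trained network. I don't understand how to set the parameters max_queue_size, workers and use_multiprocessing. If I change these parameters (mainly to speed up training), I'm not sure whether every epoch will still see all of the data.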

max_queue_size:

Maximum size of the internal training queue, which is used to "precache" samples from the generator

Question: Does this refer to how many batches are prepared on the CPU? How is it related to workers? How should it be set optimally?
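
One way to picture how max_queue_size and workers interact is a bounded producer/consumer queue. The sketch below is a simplified model under that assumption, not Keras's actual enqueuer code: `workers` threads each build batches through a hypothetical `make_batch(i)` and push them into a queue that holds at most `max_queue_size` ready batches, while the calling thread (standing in for the GPU training loop) takes them out. In this model the queue size only limits how many finished batches wait in memory; it does not change which batches get produced.

```python
import queue
import threading


def prefetch_demo(num_batches, max_queue_size, workers, make_batch):
    """Simplified model of a prefetching data queue (an assumption, not Keras code).

    `workers` producer threads call the hypothetical `make_batch(i)` and put the
    result into a queue holding at most `max_queue_size` batches. The calling
    thread plays the role of the GPU training loop and consumes them.
    Returns the batch indices in the order they were consumed.
    """
    q = queue.Queue(maxsize=max_queue_size)
    indices = iter(range(num_batches))
    lock = threading.Lock()  # a shared iterator must not be advanced concurrently

    def producer():
        while True:
            with lock:
                i = next(indices, None)
            if i is None:
                return
            q.put((i, make_batch(i)))  # blocks while the queue is full

    threads = [threading.Thread(target=producer) for _ in range(workers)]
    for t in threads:
        t.start()

    consumed = []
    for _ in range(num_batches):
        i, _batch = q.get()  # one "training step" takes one ready batch
        consumed.append(i)

    for t in threads:
        t.join()
    return consumed


if __name__ == "__main__":
    order = prefetch_demo(20, max_queue_size=3, workers=4, make_batch=lambda i: [i] * 8)
    print(sorted(order) == list(range(20)))  # True: every batch consumed once
```

With several workers the batches may arrive out of order, but in this model each index is still consumed exactly once because indices are handed out under a lock. A larger queue mainly smooths over uneven batch-preparation times, at the cost of holding more batches in RAM.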

workers:

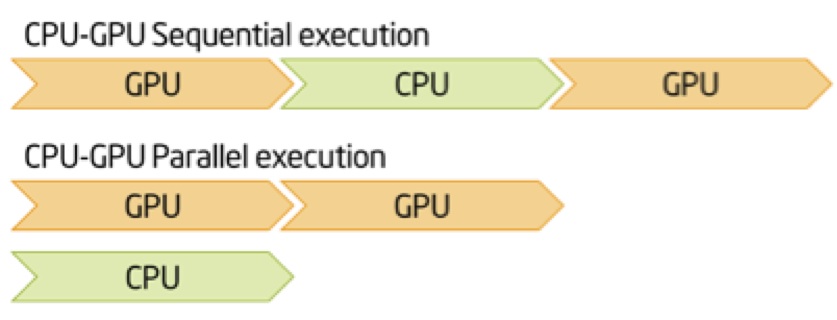

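Number of threads generating batches in parallel. Batches are computed in parallel on the CPU and passed on the fly to the GPU for the neural network computations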

Question: How do I find out how many batches my CPU can/should generate in parallel?
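
A rough empirical answer is to compare how long the CPU needs to build one batch with how long the GPU needs for one training step. The sketch below encodes that rule of thumb; the function names are hypothetical and the formula is a heuristic assumption, not something Keras prescribes. With threads (use_multiprocessing=False), Python's GIL can also limit how much CPU-bound preprocessing actually runs in parallel, so measured gains may be smaller than the formula suggests.

```python
import math
import os
import time


def time_per_call(fn, repeats=5):
    """Average wall-clock seconds for one call of fn()."""
    start = time.perf_counter()
    for _ in range(repeats):
        fn()
    return (time.perf_counter() - start) / repeats


def suggest_workers(batch_seconds, step_seconds, cpu_count=None):
    """Rule-of-thumb worker count (a heuristic assumption, not a Keras formula).

    If one worker needs `batch_seconds` to build a batch and the GPU needs
    `step_seconds` per training step, about batch_seconds / step_seconds
    workers keep the queue from running dry. The result is capped at the
    number of logical CPUs and never drops below 1.
    """
    cpu_count = cpu_count or os.cpu_count() or 1
    needed = math.ceil(batch_seconds / step_seconds)
    return max(1, min(needed, cpu_count))


if __name__ == "__main__":
    # Example numbers only: 0.5 s to build a batch, 0.125 s per GPU step.
    print(suggest_workers(0.5, 0.125, cpu_count=20))  # 4
```

In practice, `batch_seconds` could be estimated with something like `time_per_call(lambda: next(trainGenerator))` and `step_seconds` with `time_per_call(lambda: model.train_on_batch(x, y))` on one fixed batch `x, y`. Both numbers are rough, so it is worth re-checking GPU utilization (for example with `nvidia-smi`) after changing workers.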

use_multiprocessing:

Whether to use process-based threading

Question: If I change workers, do I have to set this parameter to True? Is it related to CPU usage?
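
Whether each epoch still sees all the data depends mostly on the type of input. The Keras documentation for `keras.utils.Sequence` describes it as the safer way to do multiprocessing because it guarantees the network trains only once on each sample per epoch, which is not the case with plain generators; Keras 2.x also warns that a generator combined with `use_multiprocessing=True` and multiple workers may duplicate data. The pure-Python sketch below uses a hypothetical `BatchSequence` class that only mimics the `__len__`/`__getitem__` protocol, to show why: workers are handed batch indices, so the number of workers affects speed, not coverage.

```python
import math
from concurrent.futures import ThreadPoolExecutor


class BatchSequence:
    """Hypothetical stand-in for the keras.utils.Sequence protocol."""

    def __init__(self, samples, batch_size):
        self.samples = samples
        self.batch_size = batch_size

    def __len__(self):
        # Round up so a final, smaller batch is still included.
        return math.ceil(len(self.samples) / self.batch_size)

    def __getitem__(self, idx):
        start = idx * self.batch_size
        return self.samples[start:start + self.batch_size]


def run_epoch(seq, workers):
    """Fetch every batch index exactly once with `workers` threads.

    Each task is a batch index, not a shared iterator, so adding workers
    cannot skip or repeat a batch. pool.map returns results in index order.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        batches = list(pool.map(seq.__getitem__, range(len(seq))))
    return [x for batch in batches for x in batch]


if __name__ == "__main__":
    seq = BatchSequence(list(range(103)), batch_size=10)
    print(len(seq))  # 11
    print(all(run_epoch(seq, w) == list(range(103)) for w in (1, 4, 10)))  # True
```

If trainGenerator comes from `flow_from_directory` (its `.samples` attribute suggests so), it is, as far as I know, already a `Sequence` subclass in Keras 2.x, so the index-based behaviour sketched here is the relevant one.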

A related question can be found at the following link:

I call fit_generator() with the following code:

    history = model.fit_generator(
        generator=trainGenerator,
        steps_per_epoch=trainGenerator.samples // nBatches,  # total number of steps (batches of samples)
        epochs=nEpochs,  # number of epochs to train the model
        verbose=2,  # verbosity mode. 0 = silent, 1 = progress bar, 2 = one line per epoch
        callbacks=callback,  # keras.callbacks.Callback instances to apply during training
        validation_data=valGenerator,  # generator or tuple on which to evaluate the loss and any model metrics at the end of each epoch
        validation_steps=valGenerator.samples // nBatches,  # number of steps (batches of samples) to yield from validation_data generator before stopping at the end of every epoch
        class_weight=classWeights,  # optional dictionary mapping class indices (integers) to a weight (float) value, used for weighting the loss function
        max_queue_size=10,  # maximum size for the generator queue
        workers=1,  # maximum number of processes to spin up when using process-based threading
        use_multiprocessing=False,  # whether to use process-based threading
        shuffle=True,  # whether to shuffle the order of the batches at the beginning of each epoch
        initial_epoch=0)
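
Independently of workers and max_queue_size, the floor division in steps_per_epoch can already leave samples out of what Keras counts as one epoch; the Keras 2.x `fit_generator` documentation says this value should typically be `ceil(num_samples / batch_size)`. Assuming nBatches is the batch size (the name suggests otherwise, so this is an assumption), the arithmetic looks like this, with a hypothetical helper:

```python
def steps_per_epoch_options(samples, batch_size):
    """Compare floor vs. ceiling step counts for one epoch.

    Returns (floor_steps, ceil_steps, leftover), where `leftover` is the
    number of samples not covered by floor_steps full batches.
    """
    floor_steps = samples // batch_size
    ceil_steps = (samples + batch_size - 1) // batch_size  # integer ceiling
    leftover = samples - floor_steps * batch_size
    return floor_steps, ceil_steps, leftover


if __name__ == "__main__":
    print(steps_per_epoch_options(1000, 32))  # (31, 32, 8)
```

With the ceiling, the last step simply uses the smaller remaining batch; the same reasoning applies to validation_steps. For a `Sequence` input, the documentation also states that steps_per_epoch is optional and defaults to `len(generator)`.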

My machine specs are:

- CPU: 2x Xeon E5-2260, 2.6 GHz
- Cores: 10
- Graphics card: Titan X (Maxwell, GM200)
- RAM: 128 GB
- HDD: 4 TB
- SSD: 512 GB