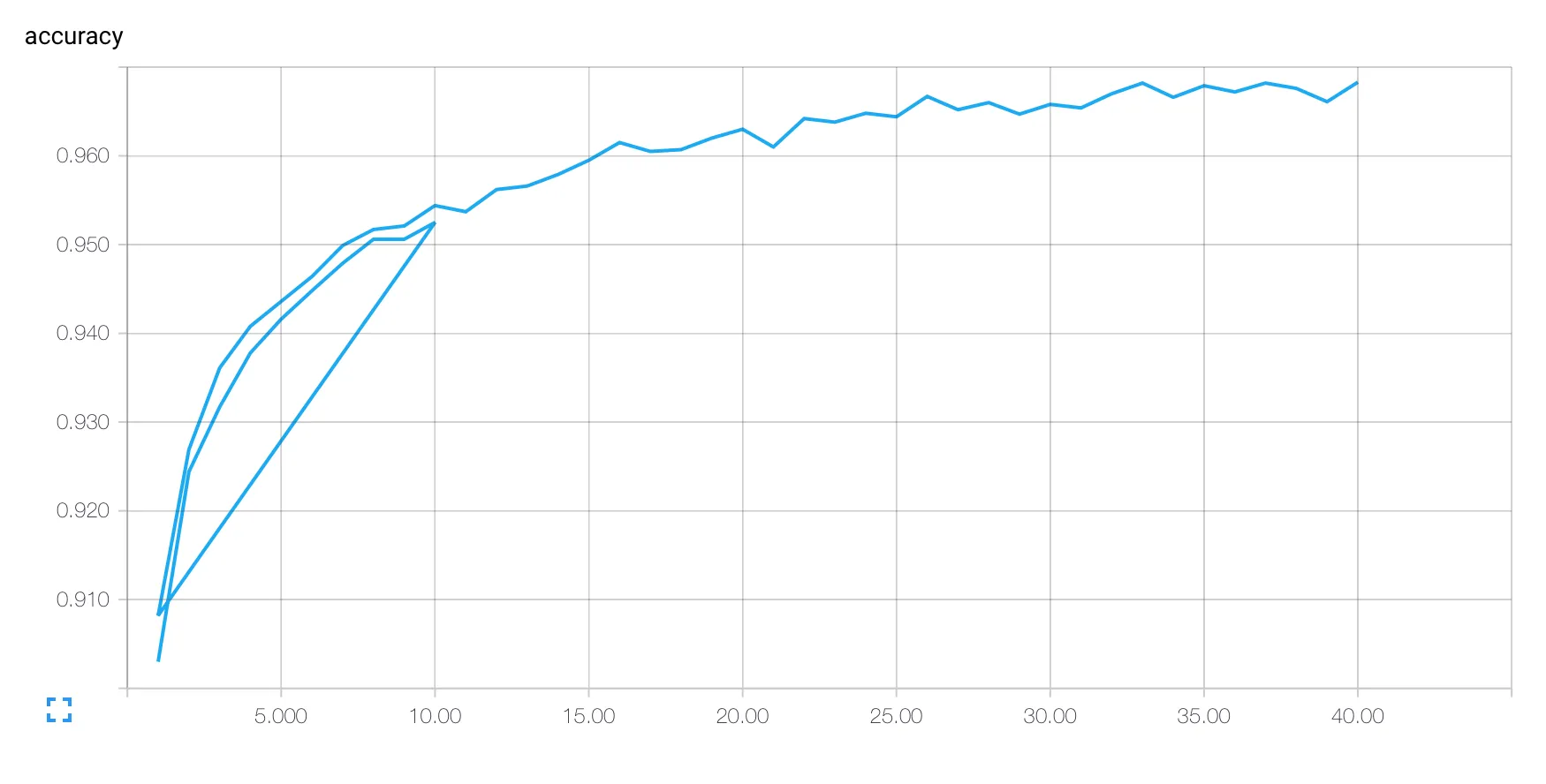

我的TensorBoard图表将我连续运行的TensorFlow代码视为同一次运行。例如,如果我首先使用FLAGS.epochs == 10运行下面的代码,然后再使用FLAGS.epochs == 40重新运行它,我会得到:

这个图表在第一次运行结束时“回到”第二次运行的开头。

有没有一种方法可以将我的多次运行视为不同的日志,以便可以进行比较或单独查看?

from __future__ import (absolute_import, print_function, division, unicode_literals)

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

# Basic model parameters as external flags.
flags = tf.app.flags
FLAGS = flags.FLAGS
# 'epochs' is passed to range() below, so it must be an integer flag.
flags.DEFINE_integer('epochs', 40, 'Epochs to run')
flags.DEFINE_integer('mb_size', 40, 'Mini-batch size. Must divide evenly into the dataset sizes.')
flags.DEFINE_float('learning_rate', 0.15, 'Initial learning rate.')
flags.DEFINE_float('regularization_weight', 0.1 / 1000, 'Regularization lambda.')
flags.DEFINE_string('data_dir', './data', 'Directory to hold training and test data.')
flags.DEFINE_string('train_dir', './_tmp/train', 'Directory to log training (and the network def).')
flags.DEFINE_string('test_dir', './_tmp/test', 'Directory to log testing.')

def variable_summaries(var, name):
    # Attach mean, stddev, max, min and histogram summaries to a tensor.
    with tf.name_scope("summaries"):
        mean = tf.reduce_mean(var)
        tf.scalar_summary('mean/' + name, mean)
        with tf.name_scope('stddev'):
            # Standard deviation: square root of the mean squared deviation.
            stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
        tf.scalar_summary('stddev/' + name, stddev)
        tf.scalar_summary('max/' + name, tf.reduce_max(var))
        tf.scalar_summary('min/' + name, tf.reduce_min(var))
        tf.histogram_summary(name, var)

def nn_layer(input_tensor, input_dim, output_dim, neuron_fn, layer_name):
    with tf.name_scope(layer_name):
        # This Variable will hold the state of the weights for the layer
        with tf.name_scope("weights"):
            weights = tf.Variable(tf.truncated_normal([input_dim, output_dim], stddev=0.1))
            variable_summaries(weights, layer_name + '/weights')
        with tf.name_scope("biases"):
            biases = tf.Variable(tf.constant(0.1, shape=[output_dim]))
            variable_summaries(biases, layer_name + '/biases')
        with tf.name_scope('activations'):
            with tf.name_scope('weighted_inputs'):
                weighted_inputs = tf.matmul(input_tensor, weights) + biases
                tf.histogram_summary(layer_name + '/weighted_inputs', weighted_inputs)
            output = neuron_fn(weighted_inputs)
            tf.histogram_summary(layer_name + '/output', output)
    return output, weights

# Collect data
mnist = input_data.read_data_sets(FLAGS.data_dir, one_hot=True)

# Inputs and outputs
x = tf.placeholder(tf.float32, shape=[None, 784])
y_ = tf.placeholder(tf.float32, shape=[None, 10])

# Network structure
o1, W1 = nn_layer(x, 784, 30, tf.nn.sigmoid, 'hidden_layer')
y, W2 = nn_layer(o1, 30, 10, tf.nn.softmax, 'output_layer')

with tf.name_scope('accuracy'):
    with tf.name_scope('loss'):
        cost = tf.reduce_mean(-tf.reduce_sum(y_ * tf.log(y), reduction_indices=[1]))
        loss = cost + FLAGS.regularization_weight * (tf.nn.l2_loss(W1) + tf.nn.l2_loss(W2))
    with tf.name_scope('correct_prediction'):
        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
    with tf.name_scope('accuracy'):
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
    tf.scalar_summary('accuracy', accuracy)
    tf.scalar_summary('loss', loss)

train_step = tf.train.GradientDescentOptimizer(FLAGS.learning_rate).minimize(loss)

# Logging
train_writer = tf.train.SummaryWriter(FLAGS.train_dir, tf.get_default_graph())
test_writer = tf.train.SummaryWriter(FLAGS.test_dir)
merged = tf.merge_all_summaries()

with tf.Session() as sess:
    sess.run(tf.initialize_all_variables())
    for ep in range(FLAGS.epochs):
        # One pass over the training set in mini-batches.
        for mb in range(int(len(mnist.train.images)/FLAGS.mb_size)):
            batch_xs, batch_ys = mnist.train.next_batch(FLAGS.mb_size)
            sess.run(train_step, feed_dict={x: batch_xs, y_: batch_ys})
        # Evaluate on the test set once per epoch and log at step ep+1.
        summary = sess.run(merged, feed_dict={x: mnist.test.images, y_: mnist.test.labels})
        test_writer.add_summary(summary, ep+1)
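
For context on what I am after: as far as I understand, TensorBoard treats each subdirectory under its log directory as a separate run, while event files written to the same directory are merged into one. Since both of my runs write to the same `FLAGS.test_dir` and both start counting at step 1, that would explain the overlap. A minimal sketch of one possible workaround, assuming a hypothetical helper `make_run_dir` (my own name, not part of TensorFlow) that creates a fresh subdirectory for every run:

```python
import os
import time


def make_run_dir(base_dir, run_name=None):
    """Create and return a new, unused subdirectory of base_dir for one run.

    make_run_dir is a hypothetical helper, not a TensorFlow API. If
    run_name is omitted, a timestamp-based name is used.
    """
    if run_name is None:
        run_name = time.strftime('run_%Y%m%d-%H%M%S')
    run_dir = os.path.join(base_dir, run_name)
    # Append a numeric suffix if a directory with this name already exists,
    # so that two runs never share a log directory.
    candidate = run_dir
    suffix = 1
    while os.path.exists(candidate):
        candidate = '{}_{}'.format(run_dir, suffix)
        suffix += 1
    os.makedirs(candidate)
    return candidate


# Assumed usage with the writers from the code above:
#   train_writer = tf.train.SummaryWriter(make_run_dir(FLAGS.train_dir),
#                                         tf.get_default_graph())
#   test_writer = tf.train.SummaryWriter(make_run_dir(FLAGS.test_dir))
```

With something like this, pointing TensorBoard at the parent directory (for example `tensorboard --logdir=./_tmp/test`) should list each subdirectory as its own run, which could then be toggled or overlaid for comparison. I have not confirmed whether this is the recommended approach.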