I'm following Flink's quickstart example that monitors the Wikipedia edit stream.

The example is written in Java, and I'm implementing it in Scala as follows:

/**

* Wikipedia Edit Monitoring

*/

object WikipediaEditMonitoring {

def main(args: Array[String]) {

// set up the execution environment

val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment

val edits: DataStream[WikipediaEditEvent] = env.addSource(new WikipediaEditsSource)

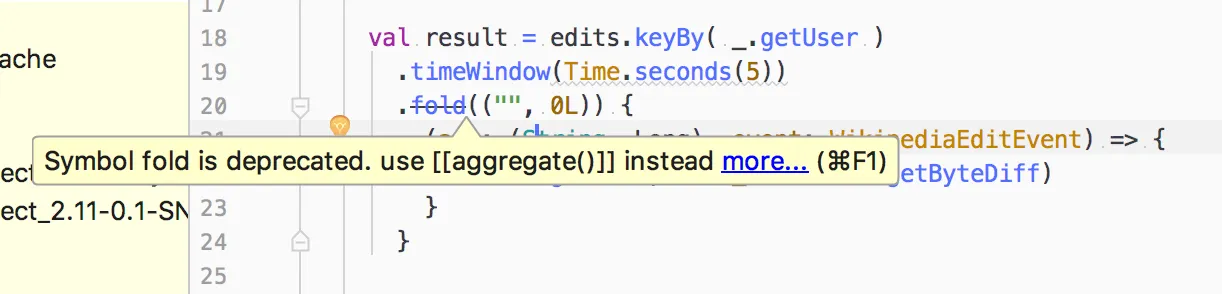

val result = edits.keyBy( _.getUser )

.timeWindow(Time.seconds(5))

.fold(("", 0L)) {

(acc: (String, Long), event: WikipediaEditEvent) => {

(event.getUser, acc._2 + event.getByteDiff)

}

}

result.print

// execute program

env.execute("Wikipedia Edit Monitoring")

}

}

However, `fold` is deprecated in Flink, and `aggregate` is recommended instead.

But I couldn't find any example or tutorial showing how to convert the deprecated `fold` into `aggregate`.

Any ideas? It probably takes more than just swapping in `aggregate`.
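As a rough guide, a `fold(init)(f)` maps onto the four methods of an aggregate function: the initial value becomes `createAccumulator`, the fold function becomes `add`, `getResult` turns the accumulator into the output, and `merge` (which `fold` never needed) combines two partial accumulators. The sketch below models that mapping in plain Scala, without Flink, so it can run anywhere. `MiniAggregate`, `Edit`, `SumByteDiff` and `FoldVsAggregate` are hypothetical names; `MiniAggregate` only mirrors the 1.4-style signature quoted in the update further down and is not a Flink type.

```scala
// MiniAggregate is a hypothetical trait mirroring the four methods of Flink 1.4's AggregateFunction.
// It is NOT a Flink type; it only illustrates how fold maps onto aggregate.
trait MiniAggregate[IN, ACC, OUT] {
  def createAccumulator(): ACC
  def add(value: IN, acc: ACC): ACC
  def getResult(acc: ACC): OUT
  def merge(a: ACC, b: ACC): ACC
}

// Hypothetical stand-in for WikipediaEditEvent with only the two fields the fold reads.
final case class Edit(user: String, byteDiff: Int)

// fold(("", 0L)) { (acc, e) => (e.getUser, acc._2 + e.getByteDiff) } split into four methods.
object SumByteDiff extends MiniAggregate[Edit, (String, Long), (String, Long)] {
  // fold's initial value becomes the accumulator factory
  def createAccumulator(): (String, Long) = ("", 0L)
  // fold's function body becomes add (value first, accumulator second)
  def add(e: Edit, acc: (String, Long)): (String, Long) = (e.user, acc._2 + e.byteDiff)
  // the accumulator already has the output shape
  def getResult(acc: (String, Long)): (String, Long) = acc
  // merge has no fold counterpart: it combines two partial accumulators
  def merge(a: (String, Long), b: (String, Long)): (String, Long) =
    (if (a._1.nonEmpty) a._1 else b._1, a._2 + b._2)
}

object FoldVsAggregate {
  // Drive an aggregate over one window's events in arrival order
  def runWindow[IN, ACC, OUT](agg: MiniAggregate[IN, ACC, OUT], events: Seq[IN]): OUT =
    agg.getResult(events.foldLeft(agg.createAccumulator())((acc, e) => agg.add(e, acc)))

  def main(args: Array[String]): Unit = {
    val window = Seq(Edit("alice", 120), Edit("alice", -20), Edit("alice", 5))
    val viaFold = window.foldLeft(("", 0L))((acc, e) => (e.user, acc._2 + e.byteDiff))
    val viaAggregate = runWindow(SumByteDiff, window)
    println(s"fold: $viaFold, aggregate: $viaAggregate") // fold: (alice,105), aggregate: (alice,105)
  }
}
```

Both paths produce the same per-window result; the only genuinely new piece is `merge`, which a window operator needs when windows can be combined (for example, session windows).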

Update

I have another implementation, as follows:

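```scala
import org.apache.flink.streaming.api.scala._
import org.apache.flink.streaming.api.windowing.time.Time
import org.apache.flink.streaming.connectors.wikiedits.{WikipediaEditEvent, WikipediaEditsSource}

/**
 * Wikipedia Edit Monitoring
 */
object WikipediaEditMonitoring {

  def main(args: Array[String]): Unit = {
    // set up the execution environment
    val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment

    val edits: DataStream[WikipediaEditEvent] = env.addSource(new WikipediaEditsSource)

    // map each event to (user, byteDiff), then sum the "edits" field per user over 5-second windows
    val result = edits
      .map(e => UserWithEdits(e.getUser, e.getByteDiff))
      .keyBy("user")
      .timeWindow(Time.seconds(5))
      .sum("edits")

    result.print()

    // execute program
    env.execute("Wikipedia Edit Monitoring")
  }

  /** Data type for a user with the summed byte diff of their edits */
  case class UserWithEdits(user: String, edits: Long)
}
```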

I would also like to know how to implement this with a custom `AggregateFunction`.
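A minimal sketch of a custom `AggregateFunction` replacing the `fold`, assuming the Flink 1.4 interface in which `add` returns the accumulator. `SumByteDiff14` is a hypothetical name; the accumulator is the same immutable `(String, Long)` tuple the `fold` used. Under the 1.3.x interface (where `add` returns `void`) this would not compile.

```scala
import org.apache.flink.api.common.functions.AggregateFunction
import org.apache.flink.streaming.api.scala._
import org.apache.flink.streaming.api.windowing.time.Time
import org.apache.flink.streaming.connectors.wikiedits.{WikipediaEditEvent, WikipediaEditsSource}

// Hypothetical aggregate, assuming Flink 1.4+: add returns the (possibly new) accumulator,
// so an immutable tuple can serve as the accumulator.
class SumByteDiff14 extends AggregateFunction[WikipediaEditEvent, (String, Long), (String, Long)] {
  // replaces fold's initial value ("", 0L)
  override def createAccumulator(): (String, Long) = ("", 0L)
  // replaces fold's function body
  override def add(event: WikipediaEditEvent, acc: (String, Long)): (String, Long) =
    (event.getUser, acc._2 + event.getByteDiff)
  // the accumulator already has the output shape
  override def getResult(acc: (String, Long)): (String, Long) = acc
  // combines partial accumulators when windows are merged
  override def merge(a: (String, Long), b: (String, Long)): (String, Long) =
    (if (a._1.nonEmpty) a._1 else b._1, a._2 + b._2)
}

object WikipediaEditMonitoring {
  def main(args: Array[String]): Unit = {
    val env = StreamExecutionEnvironment.getExecutionEnvironment
    val edits: DataStream[WikipediaEditEvent] = env.addSource(new WikipediaEditsSource)

    val result = edits
      .keyBy(_.getUser)
      .timeWindow(Time.seconds(5))
      .aggregate(new SumByteDiff14) // TypeInformation for ACC and OUT comes from the scala._ import

    result.print()
    env.execute("Wikipedia Edit Monitoring")
  }
}
```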

Update

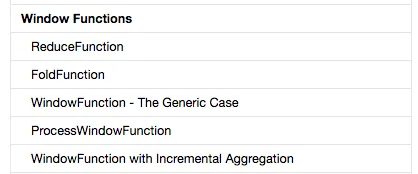

I followed this documentation: AggregateFunction, but ran into the following problem. In the source code of the `AggregateFunction` interface in version 1.3, `add` returns `void`: `void add(IN value, ACC accumulator);`

But in version 1.4, `AggregateFunction.add` returns the accumulator:

`ACC add(IN value, ACC accumulator);`

How should I handle this?

I'm using Flink 1.3.2, but the documentation for that version doesn't cover `AggregateFunction`, and version 1.4 has not yet been published to Artifactory.
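For 1.3.2, where `add` returns `void`, one way to cope is a mutable accumulator that `add` updates in place and `merge` folds into one of its arguments. This is a sketch, assuming the 1.3.x interface quoted above and assuming the 1.3.x Scala `WindowedStream` exposes `aggregate(...)` (the replacement the `fold` deprecation points to). `UserBytes` and `SumByteDiff13` are hypothetical names.

```scala
import org.apache.flink.api.common.functions.AggregateFunction
import org.apache.flink.streaming.api.scala._
import org.apache.flink.streaming.api.windowing.time.Time
import org.apache.flink.streaming.connectors.wikiedits.{WikipediaEditEvent, WikipediaEditsSource}

// Hypothetical mutable accumulator: needed because add() cannot return a new value in 1.3.x
class UserBytes extends Serializable {
  var user: String = ""
  var bytes: Long = 0L
}

// Hypothetical aggregate, assuming the Flink 1.3.x interface where add returns void (Unit)
class SumByteDiff13 extends AggregateFunction[WikipediaEditEvent, UserBytes, (String, Long)] {
  override def createAccumulator(): UserBytes = new UserBytes
  // mutate the accumulator in place instead of returning a new one
  override def add(event: WikipediaEditEvent, acc: UserBytes): Unit = {
    acc.user = event.getUser
    acc.bytes += event.getByteDiff
  }
  override def getResult(acc: UserBytes): (String, Long) = (acc.user, acc.bytes)
  // fold b into a and return a
  override def merge(a: UserBytes, b: UserBytes): UserBytes = {
    if (a.user.isEmpty) a.user = b.user
    a.bytes += b.bytes
    a
  }
}

object WikipediaEditMonitoring {
  def main(args: Array[String]): Unit = {
    val env = StreamExecutionEnvironment.getExecutionEnvironment
    val edits: DataStream[WikipediaEditEvent] = env.addSource(new WikipediaEditsSource)

    val result = edits
      .keyBy(_.getUser)
      .timeWindow(Time.seconds(5))
      .aggregate(new SumByteDiff13)

    result.print()
    env.execute("Wikipedia Edit Monitoring")
  }
}
```

Once the project moves to 1.4, the same class can be adapted by having `add` return `acc` (or by switching to an immutable accumulator).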