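I have been following the Towards Data Science tutorial on word2vec and skip-gram models, but I have run into a problem that I cannot solve, despite a lot of searching and several unsuccessful attempts.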

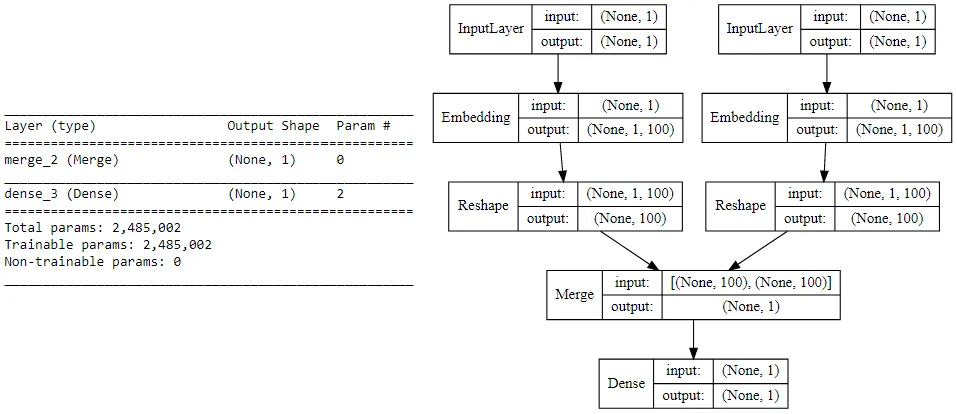

The steps that show how to build the skip-gram model architecture appear to be outdated, because they use the Merge layer from keras.layers. I tried to translate the author's code (written with the Keras Sequential API) into the Functional API to work around the deprecation of the Merge layer, replacing it with the keras.layers.Dot layer. However, I am still stuck at the step that merges the two models (word and context) into the final model, whose architecture must match the one shown in the image above.

This is the code the author used:

    from keras.layers import Merge
    from keras.layers.core import Dense, Reshape
    from keras.layers.embeddings import Embedding
    from keras.models import Sequential

    # build skip-gram architecture
    word_model = Sequential()
    word_model.add(Embedding(vocab_size, embed_size,
                             embeddings_initializer="glorot_uniform",
                             input_length=1))
    word_model.add(Reshape((embed_size, )))

    context_model = Sequential()
    context_model.add(Embedding(vocab_size, embed_size,
                                embeddings_initializer="glorot_uniform",
                                input_length=1))
    context_model.add(Reshape((embed_size,)))

    model = Sequential()
    model.add(Merge([word_model, context_model], mode="dot"))
    model.add(Dense(1, kernel_initializer="glorot_uniform", activation="sigmoid"))
    model.compile(loss="mean_squared_error", optimizer="rmsprop")
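For orientation, the computation this architecture is meant to perform can be sketched in plain NumPy: look up one embedding per word and per context word, take their dot product, and pass it through a single sigmoid unit. This is only an illustration, not the tutorial's code. The toy sizes, the random embedding tables, the fixed `dense_weight` and `dense_bias` values, and the helper name `skipgram_score` are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy sizes; the tutorial derives the real ones from its corpus.
vocab_size, embed_size = 10, 4

# Two separate embedding tables, one per branch of the architecture.
word_embeddings = rng.normal(size=(vocab_size, embed_size))
context_embeddings = rng.normal(size=(vocab_size, embed_size))

# Hypothetical parameters standing in for the trained Dense(1) layer.
dense_weight, dense_bias = 1.0, 0.0


def skipgram_score(word_ids, context_ids):
    """Return one sigmoid score per (word, context) pair, shape (batch, 1)."""
    word_vecs = word_embeddings[word_ids]            # (batch, embed_size)
    context_vecs = context_embeddings[context_ids]   # (batch, embed_size)
    # Row-wise dot product, the role played by the merge/dot step.
    dots = np.sum(word_vecs * context_vecs, axis=1, keepdims=True)
    # Single sigmoid unit applied to the dot product.
    return 1.0 / (1.0 + np.exp(-(dense_weight * dots + dense_bias)))


scores = skipgram_score(np.array([1, 2, 3]), np.array([4, 5, 6]))
print(scores.shape)  # (3, 1)
```

Each row of `scores` is the model's estimate that the corresponding word and context word form a genuine pair.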

Here is my attempt to translate the Sequential implementation into the Functional API:

    from keras import models
    from keras import layers
    from keras import Input, Model

    word_input = Input(shape=(1,))
    word_x = layers.Embedding(vocab_size, embed_size, embeddings_initializer='glorot_uniform')(word_input)
    word_reshape = layers.Reshape((embed_size,))(word_x)
    word_model = Model(word_input, word_reshape)

    context_input = Input(shape=(1,))
    context_x = layers.Embedding(vocab_size, embed_size, embeddings_initializer='glorot_uniform')(context_input)
    context_reshape = layers.Reshape((embed_size,))(context_x)
    context_model = Model(context_input, context_reshape)

    model_input = layers.dot([word_model, context_model], axes=1, normalize=False)
    model_output = layers.Dense(1, kernel_initializer='glorot_uniform', activation='sigmoid')
    model = Model(model_input, model_output)

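However, when I run it, the following error is returned:

    ValueError: Layer dot_5 was called with an input that isn't a symbolic tensor. Received type: . Full input: [, ]. All inputs to the layer should be tensors.

I am completely new to the Keras Functional API, and I would be grateful for some guidance on how to feed the context and word models into the dot layer so as to obtain the architecture in the image.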
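One possible way to resolve the error is sketched below. The message says the dot layer was given something other than symbolic tensors: in the attempt, `layers.dot` receives the two `Model` objects, whereas it needs the tensors they produce. The sketch also calls the `Dense` layer on the dot output and builds the final `Model` from the two `Input` tensors. The values `vocab_size = 1000` and `embed_size = 100` are placeholders assumed for illustration, and `dtype="int32"` on the inputs is an addition not present in the original code.

```python
import numpy as np
from keras import Input, Model, layers

# Placeholder sizes assumed for this sketch; use the values from your corpus.
vocab_size = 1000
embed_size = 100

# Word branch: integer id -> embedding -> flat vector of length embed_size.
word_input = Input(shape=(1,), dtype="int32")
word_x = layers.Embedding(vocab_size, embed_size,
                          embeddings_initializer="glorot_uniform")(word_input)
word_vec = layers.Reshape((embed_size,))(word_x)

# Context branch: same structure, with its own embedding weights.
context_input = Input(shape=(1,), dtype="int32")
context_x = layers.Embedding(vocab_size, embed_size,
                             embeddings_initializer="glorot_uniform")(context_input)
context_vec = layers.Reshape((embed_size,))(context_x)

# Pass the output tensors (not Model objects) to the Dot layer.
dot = layers.Dot(axes=1, normalize=False)([word_vec, context_vec])

# The Dense layer must be called on a tensor to become part of the graph.
output = layers.Dense(1, kernel_initializer="glorot_uniform",
                      activation="sigmoid")(dot)

# The final model maps the two Input tensors to the sigmoid output.
model = Model(inputs=[word_input, context_input], outputs=output)
model.compile(loss="mean_squared_error", optimizer="rmsprop")

# Quick shape check with two arbitrary (word id, context id) pairs.
preds = model.predict([np.array([[1], [2]]), np.array([[3], [4]])], verbose=0)
print(preds.shape)  # (2, 1)
```

If the intermediate `word_model` and `context_model` objects from the attempt are still wanted, passing `word_model.output` and `context_model.output` to the dot layer should have the same effect, since those attributes are the tensors the layer expects.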