我有一个相对较大的数据集(1,750,000行,5列),其中包含带有唯一ID值(第一列)的记录,由四个标准(另外四列)描述。一个小例子如下:

# example

library(data.table)

dt <- data.table(id=c("a1","b3","c7","d5","e3","f4","g2","h1","i9","j6"),

s1=c("a","b","c","l","l","v","v","v",NA,NA),

s2=c("d","d","e","k","k","o","o","o",NA,NA),

s3=c("f","g","f","n","n","s","r","u","w","z"),

s4=c("h","i","j","m","m","t","t","t",NA,NA))

它看起来像这样:

id s1 s2 s3 s4

1: a1 a d f h

2: b3 b d g i

3: c7 c e f j

4: d5 l k n m

5: e3 l k n m

6: f4 v o s t

7: g2 v o r t

8: h1 v o u t

9: i9 <NA> <NA> w <NA>

10: j6 <NA> <NA> z <NA>

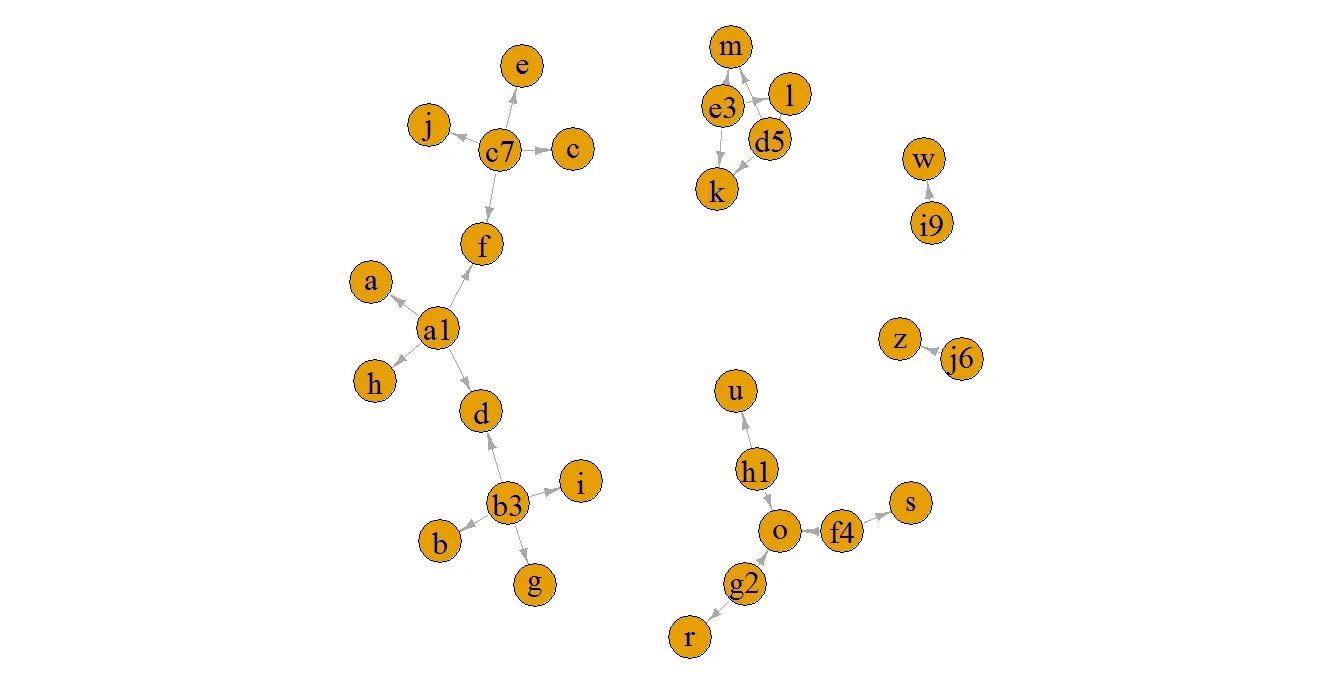

我的最终目标是找到所有描述列中具有相同字符的记录(忽略NAs),并将它们分组为一个新的ID,以便我可以轻松地识别重复的记录。这些ID通过连接每行的ID构建而成。

由于我可以直接和间接地找到具有重复描述的记录,因此事情变得更加混乱。因此,我目前正在分两步进行此操作。

第一步 - 基于直接重复构建重复的ID

# grouping ids with duplicated info in any of the columns

#sorry, I could not find search for duplicates using multiple columns simultaneously...

dt[!is.na(dt$s1),ids1:= paste(id,collapse="|"), by = list(s1)]

dt[!is.na(dt$s1),ids2:= paste(id,collapse="|"), by = list(s2)]

dt[!is.na(dt$s1),ids3:= paste(id,collapse="|"), by = list(s3)]

dt[!is.na(dt$s1),ids4:= paste(id,collapse="|"), by = list(s4)]

# getting a unique duplicated ID for each row

dt$new.id <- apply(dt[,.(ids1,ids2,ids3,ids4)], 1, paste, collapse="|")

dt$new.id <- apply(dt[,"new.id",drop=FALSE], 1, function(x) paste(unique(strsplit(x,"\\|")[[1]]),collapse="|"))

这个操作会产生以下结果,其中唯一的重复ID被定义为“new.id”:

id s1 s2 s3 s4 ids1 ids2 ids3 ids4 new.id

1: a1 a d f h a1 a1|b3 a1|c7 a1 a1|b3|c7

2: b3 b d g i b3 a1|b3 b3 b3 b3|a1

3: c7 c e f j c7 c7 a1|c7 c7 c7|a1

4: d5 l k n m d5|e3 d5|e3 d5|e3 d5|e3 d5|e3

5: e3 l k n m d5|e3 d5|e3 d5|e3 d5|e3 d5|e3

6: f4 v o s t f4|g2|h1 f4|g2|h1 f4 f4|g2|h1 f4|g2|h1

7: g2 v o r t f4|g2|h1 f4|g2|h1 g2 f4|g2|h1 f4|g2|h1

8: h1 v o u t f4|g2|h1 f4|g2|h1 h1 f4|g2|h1 f4|g2|h1

9: i9 <NA> <NA> w <NA> <NA> <NA> <NA> <NA> NA

10: j6 <NA> <NA> z <NA> <NA> <NA> <NA> <NA> NA

请注意,记录“b3”和“c7”通过“a1”间接重复(所有其他示例都是直接重复,应保持不变)。这就是为什么我们需要下一步的原因。

第二步 - 根据间接重复更新重复的ID。

#filtering the relevant columns for the indirect search

dt = dt[,.(id,new.id)]

#creating the patterns to be used by grepl() for the look-up for each row

dt[,patt:= .(paste(paste("^",id,"\\||",sep=""),paste("\\|",id,"\\||",sep=""),paste("\\|",id,"$",sep=""),collapse = "" ,sep="")), by = list(id)]

#Transforming the ID vector into factor and setting it as a 'key' to the data.table (speed up the processing)

dt$new.id = as.factor(dt$new.id)

setkeyv(dt, c("new.id"))

#Performing the loop using sapply

library(stringr)

for(i in 1:nrow(dt)) {

pat = dt$patt[i] # retrieving the research pattern

tmp = dt[new.id %like% pat] # searching the pattern using grepl()

if(dim(tmp)[1]>1) {

x = which.max(str_count(tmp$new.id, "\\|"))

dt$new.id[i] = as.character(tmp$new.id[x])

}

}

#filtering the final columns

dt = dt[,.(id,new.id)]

最终表格如下:

id new.id

1: a1 a1|b3|c7

2: b3 a1|b3|c7

3: c7 a1|b3|c7

4: d5 d5|e3

5: e3 d5|e3

6: f4 f4|g2|h1

7: g2 f4|g2|h1

8: h1 f4|g2|h1

9: i9 NA

10: j6 NA

请注意,现在前三条记录(“a1”,“b3”,“c7”)被归为更广泛的重复ID下,其中包含直接和间接记录。

一切运行得很好,但我的代码非常慢。它花了两天时间运行半个数据集(〜800,0000)。我可以将循环并行化到不同的核心中,但仍需要几个小时。我几乎可以确定我可以更好地使用data.table功能,也许在循环中使用 'set' 。今天我花了几个小时尝试使用data.table实现相同的代码,但我对其语法还不熟悉,我真的很难。有什么建议可以优化这段代码吗?

注意:代码中最慢的部分是循环,循环内最低效的步骤是数据表内模式的grepl()。似乎设置数据表的'key'可以加速此过程,但在我的情况下未改变grepl()所需时间。

s2列中,其含义是否与出现在s4列中相同。例如,如果第1行(id ==“a1”)中s4列中的“h”变成了“k”,预期结果将是c(rep('a1|b3|c7|d5|e3', 5), rep('f4|g2|h1', 3))吗? - Uwe